Thirteenth

Measuring Broadband America

Fixed Broadband Report

A Report on Consumer Fixed Broadband Performance

in the United States

Federal Communications Commission

Office of Engineering and Technology

Table of Contents

- Chart 1: Weighted mean advertised download speed among the top 80% service tiers offered by each ISP

- Chart 2: Weighted mean advertised download speed among the top 80% service tiers based on technology

- Chart 3: Consumer migration to higher advertised download speeds

- Chart 4: The ratio of weighted median speed (download and upload) to advertised speed for each ISP

- Chart 5: The ratio of 80/80 consistent median download speed to advertised download speed

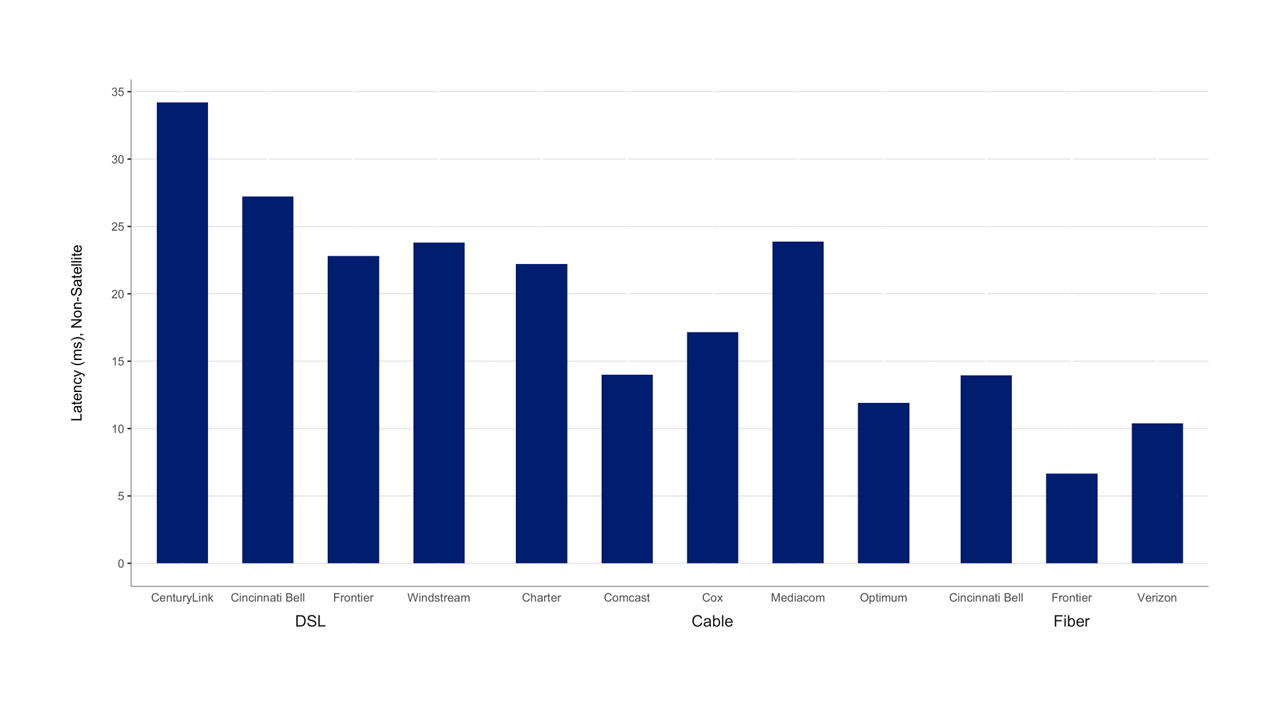

- Chart 6: Idle Latency by ISP

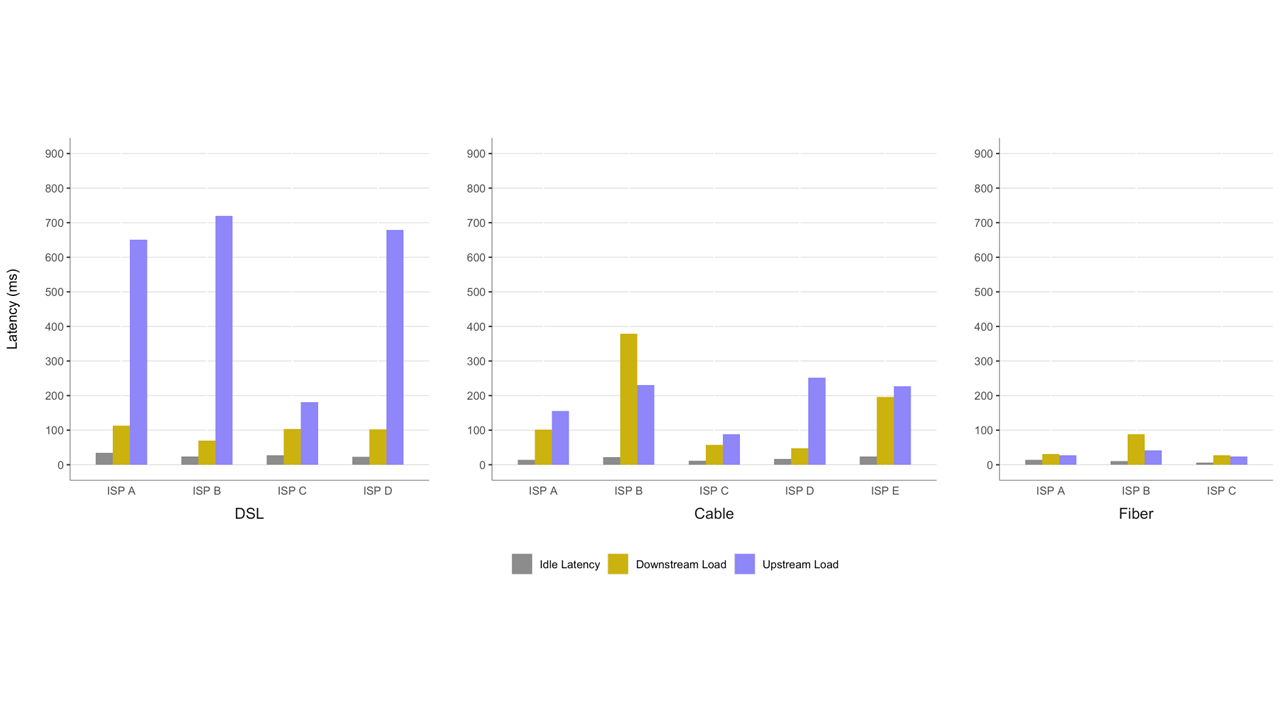

- Chart 7: ISP weighted median Idle latency compared to latency under downstream traffic load and latency under upstream traffic load

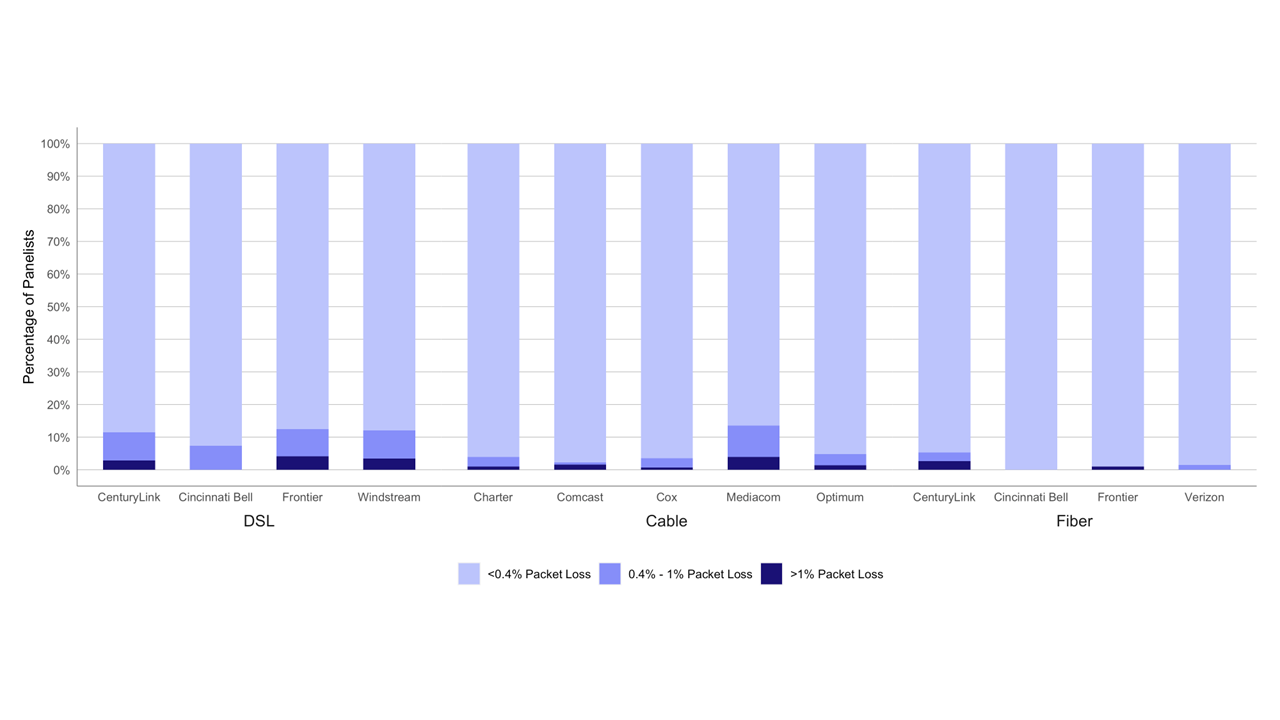

- Chart 8: Percentage of consumers whose peak-period packet loss was less than 0.4%, between 0.4% to 1%, and greater than 1%

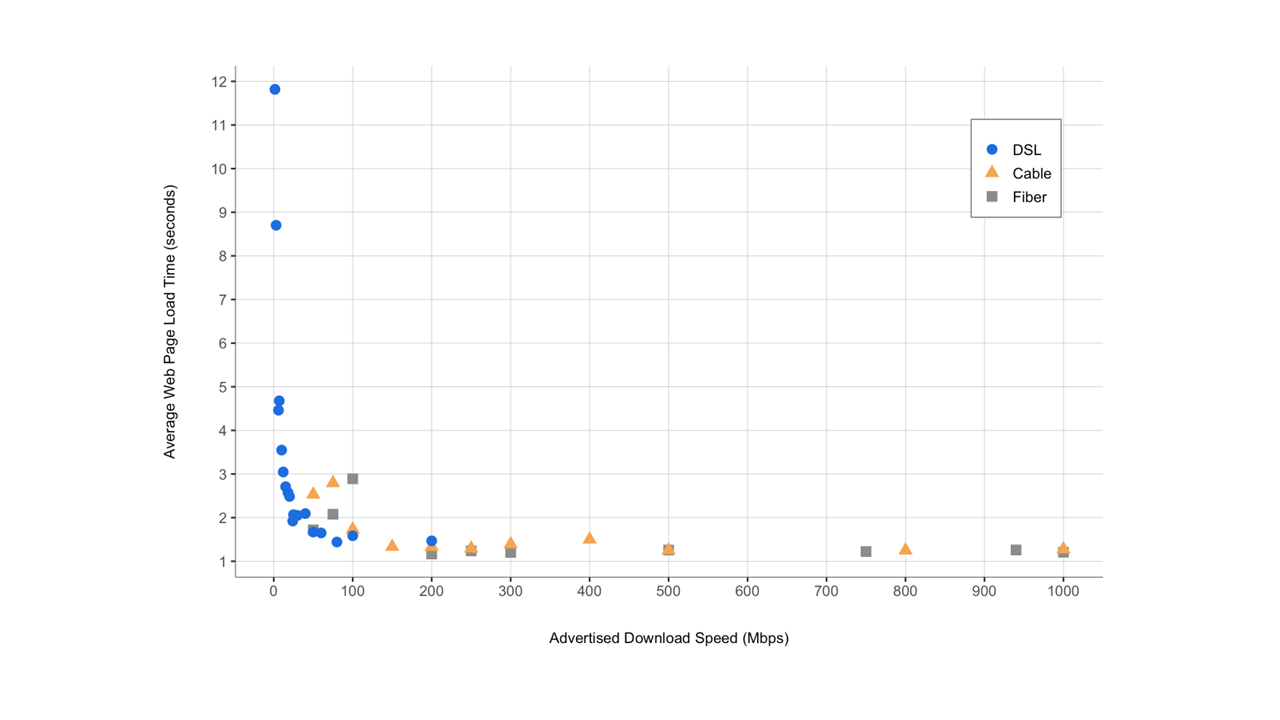

- Chart 9: Average webpage download time, by advertised download speed

- Chart 10.1: Weighted mean advertised upload speed among the top 80% service tiers offered by each ISP.

- Chart 10.2: Weighted mean advertised upload speed offered by ISPs using DSL technology

- Chart 10.3: Weighted mean advertised upload speed offered by ISPs using Cable technology.

- Chart 11: Weighted mean advertised upload speed among the top 80% service tiers based on technology.

- Chart 12.1: Complementary cumulative distribution of the ratio of median download speed to advertised download speed (DSL)

- Chart 12.2: Complementary cumulative distribution of the ratio of median download speed to advertised download speed (cable and fiber)

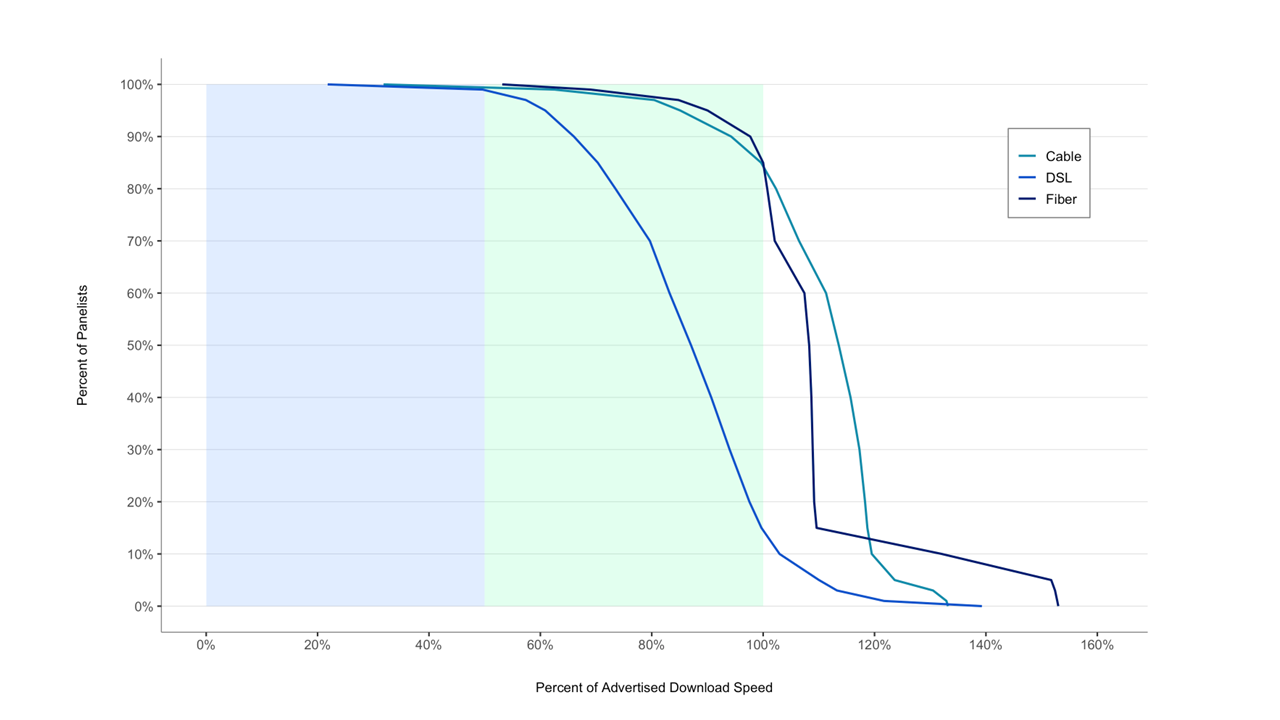

- Chart 12.3: Complementary cumulative distribution of the ratio of median download speed to advertised download speed, by technology

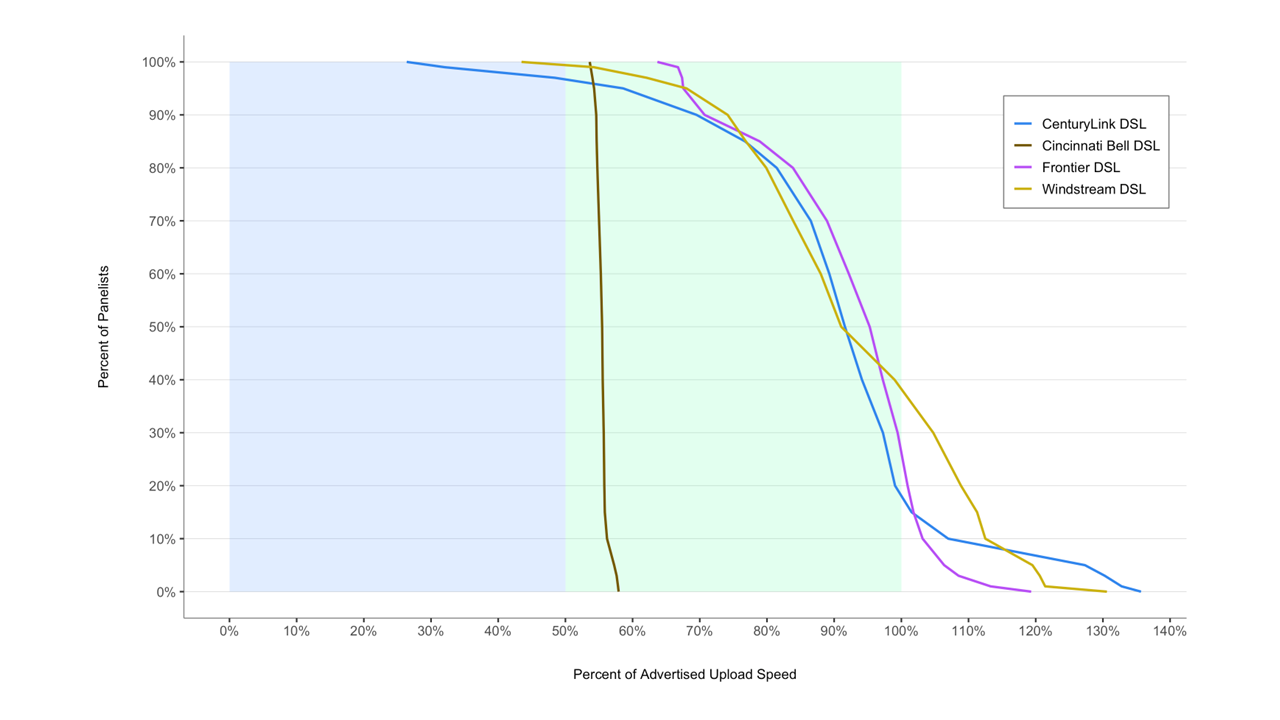

- Chart 12.4: Complementary cumulative distribution of the ratio of median upload speed to advertised upload speed (DSL)

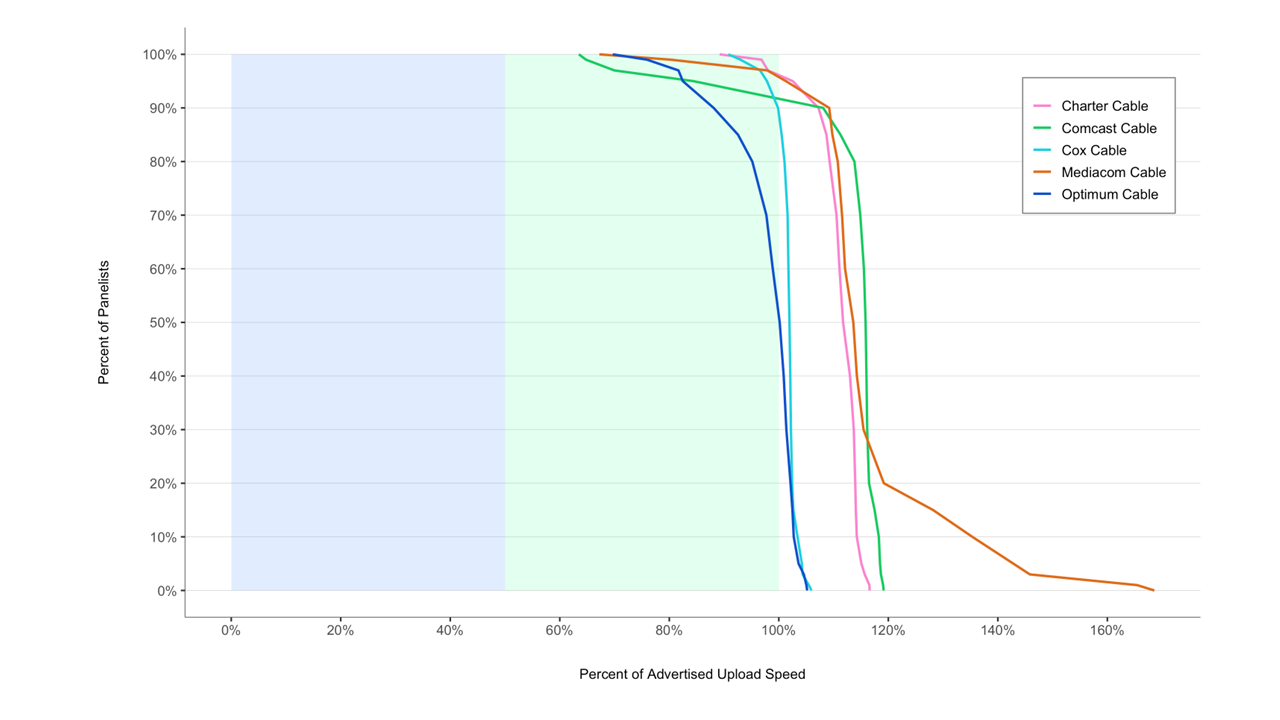

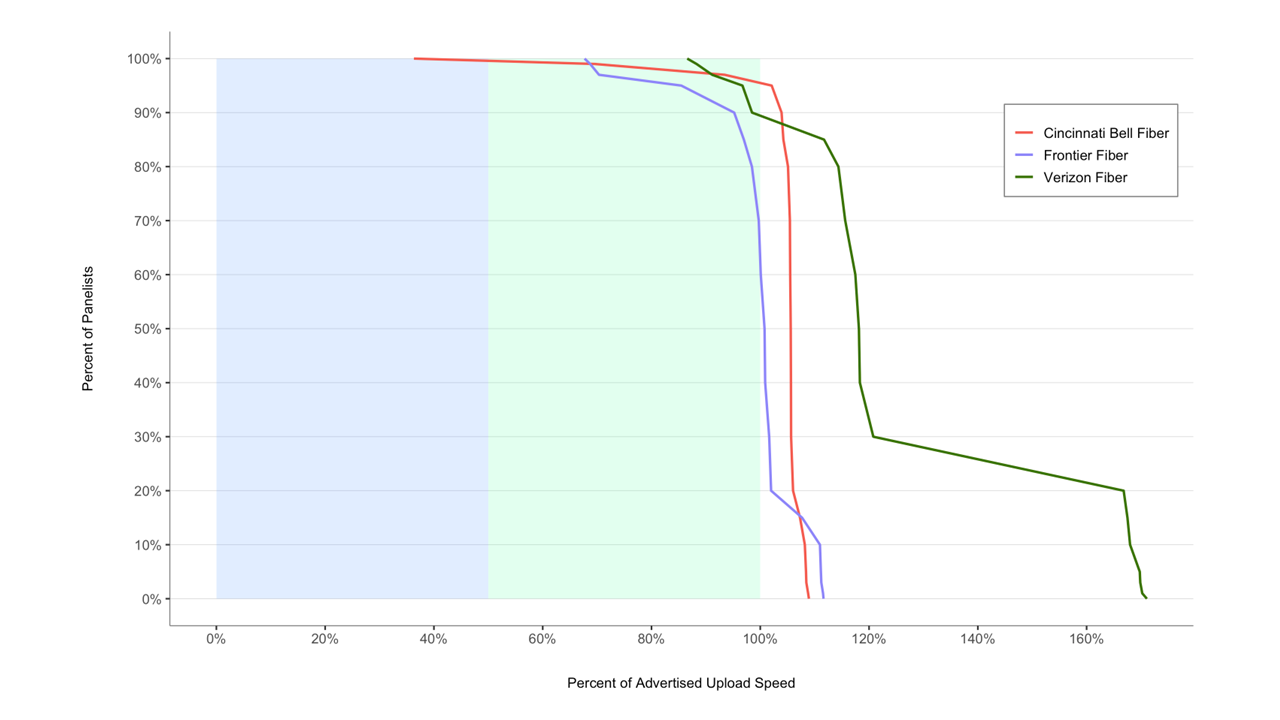

- Chart 12.5: Complementary cumulative distribution of the ratio of median upload speed to advertised upload speed (cable and fiber)

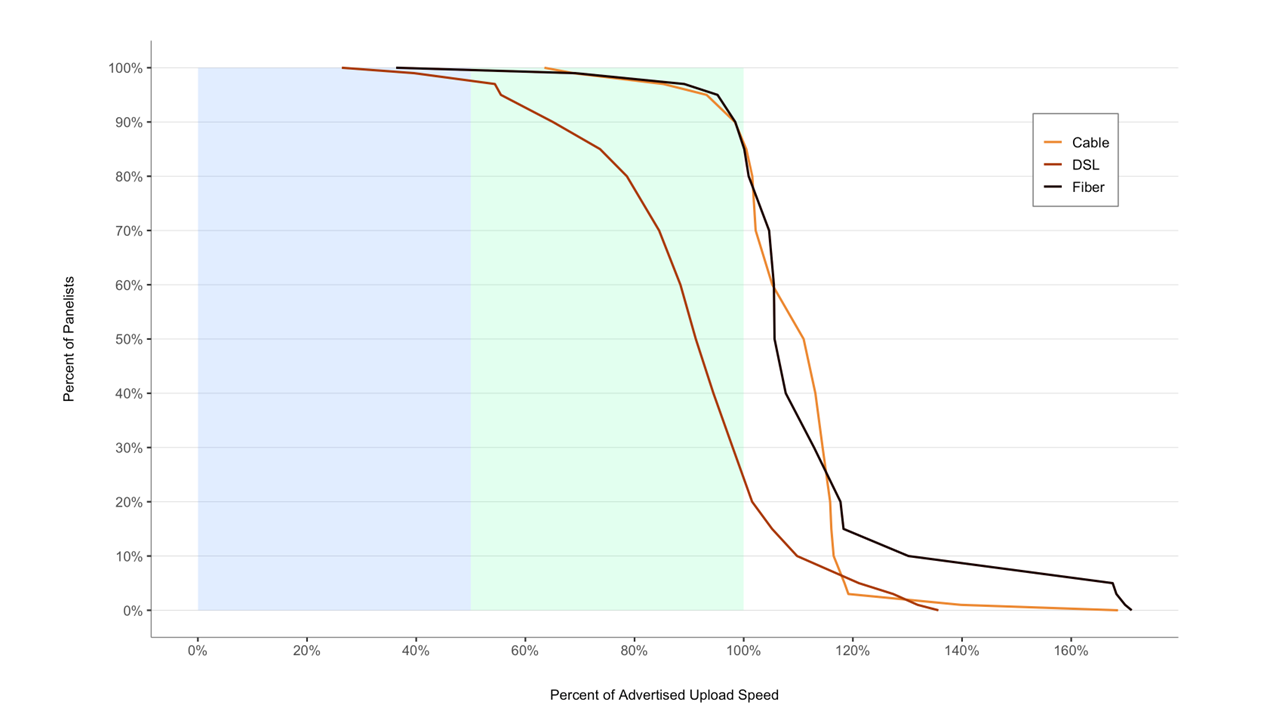

- Chart 12.6: Complementary cumulative distribution of the ratio of median upload speed to advertised upload speed, by technology

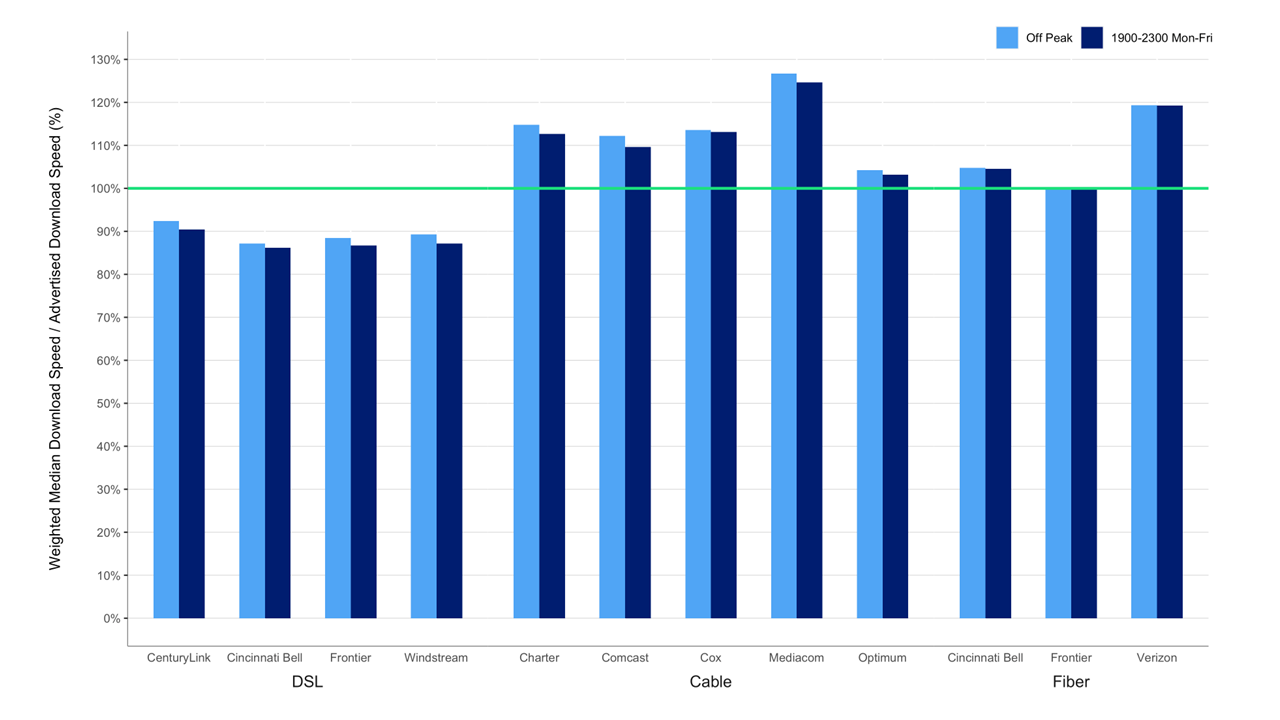

- Chart 13.1: The ratio of weighted median download speed to advertised download speed, peak hours versus off-peak hours.

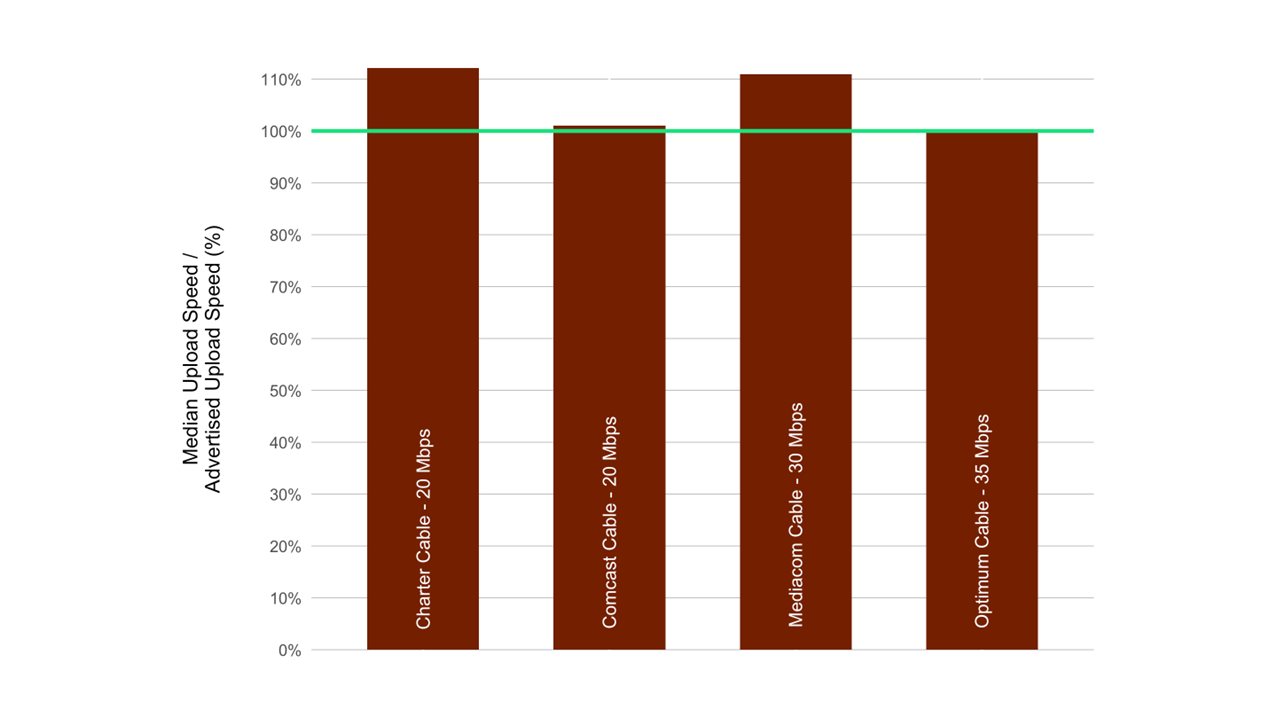

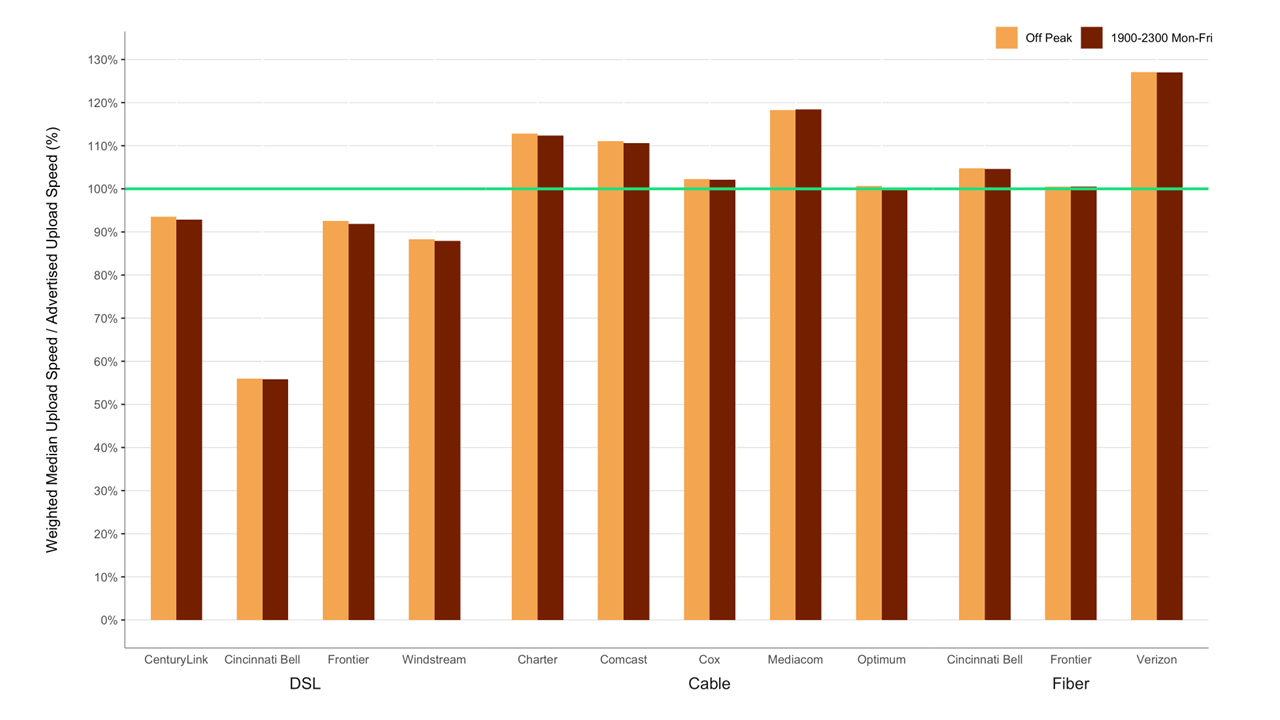

- Chart 13.2: The ratio of weighted median upload speed to advertised upload speed, peak versus off-peak

- Chart 14: The ratio of median download speed to advertised download speed, Monday-to-Friday, two-hour time blocks, terrestrial ISPs.

- Chart 15.1: The ratio of 80/80 consistent upload speed to advertised upload speed.

- Chart 15.2: The ratio of 70/70 consistent download speed to advertised download speed.

- Chart 15.3: The ratio of 70/70 consistent upload speed to advertised upload speed.

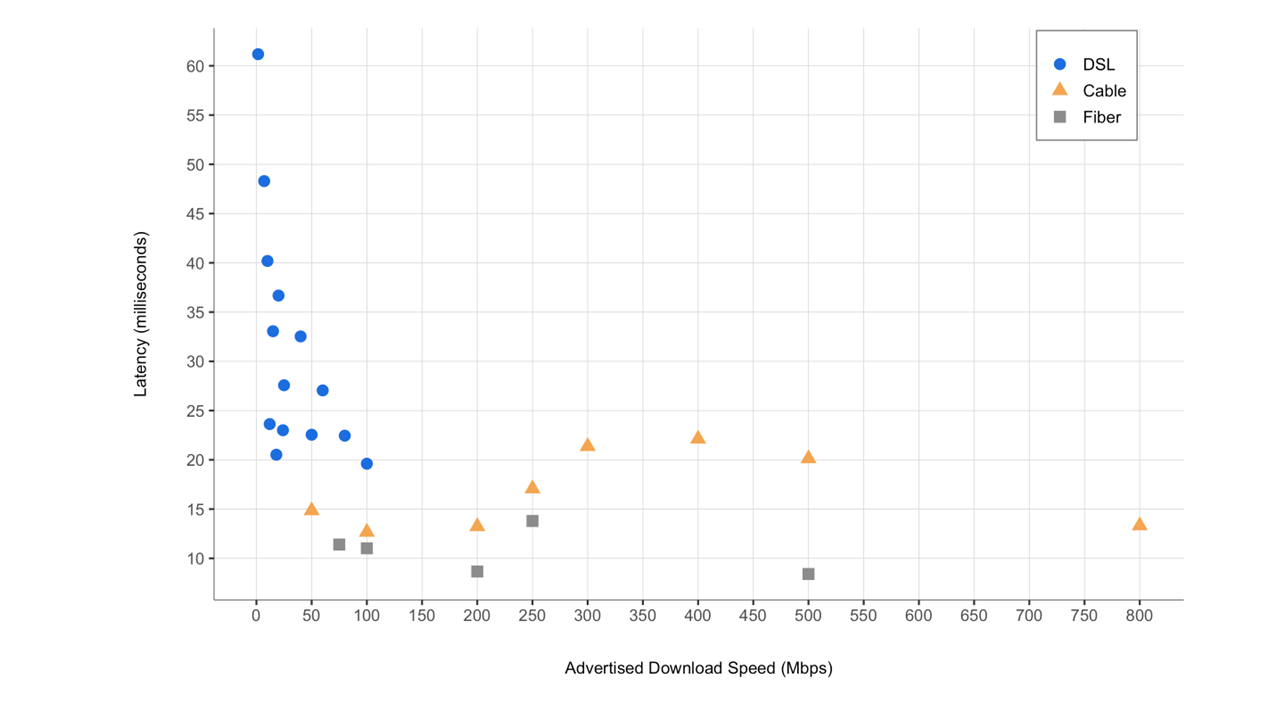

- Chart 16: Latency for Terrestrial ISPs, by technology, and by advertised download speed.

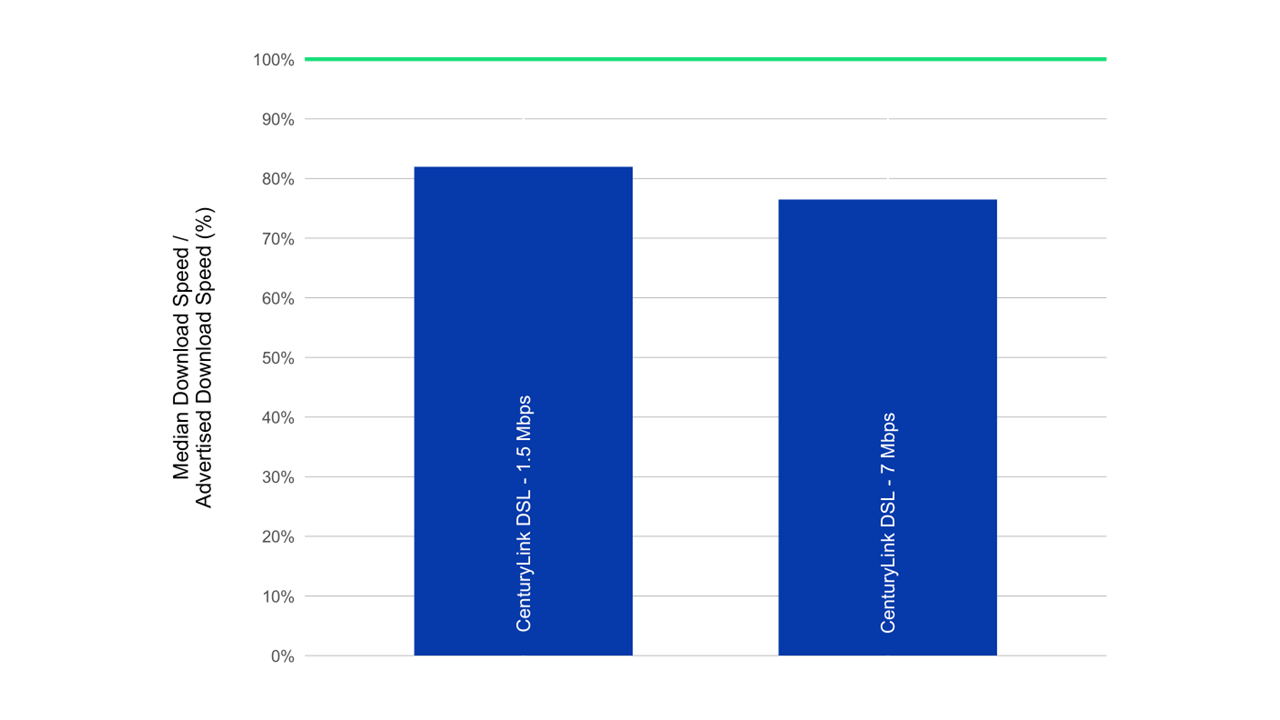

- Chart 17.1: The ratio of median download speed to advertised download speed, by ISP (1.5-7 Mbps).

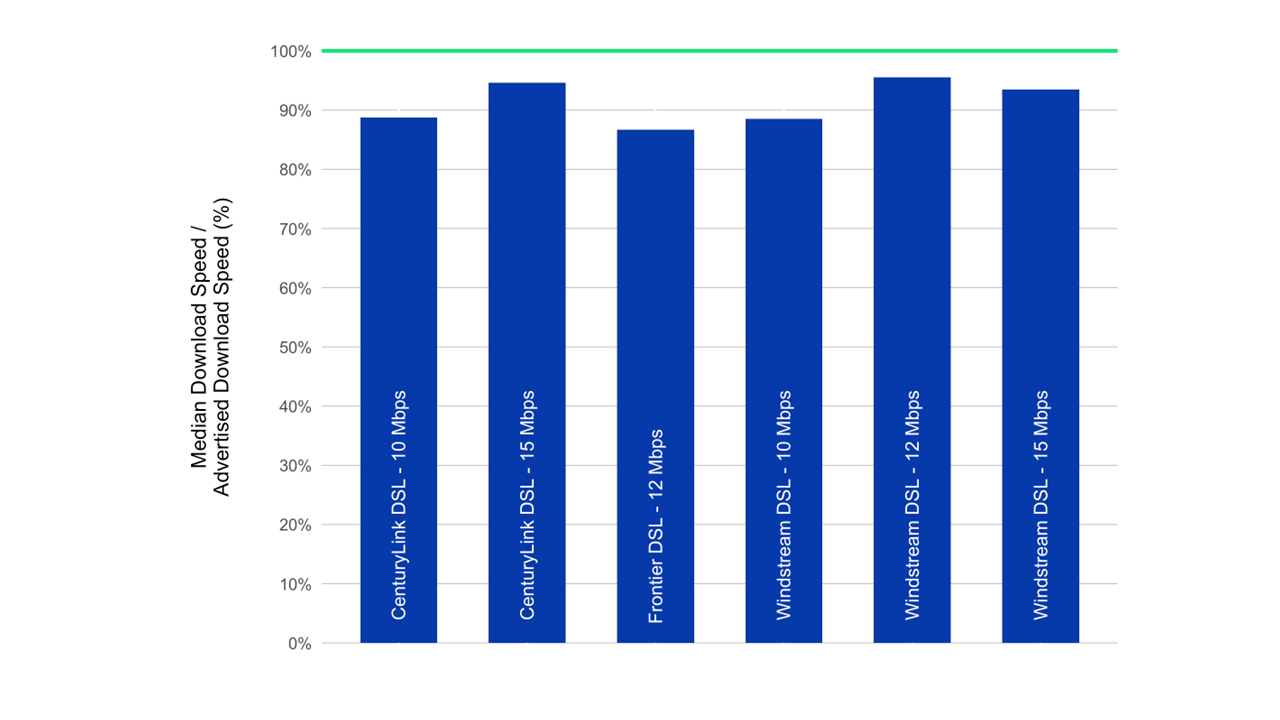

- Chart 17.2: The ratio of median download speed to advertised download speed, by ISP (10-15 Mbps).

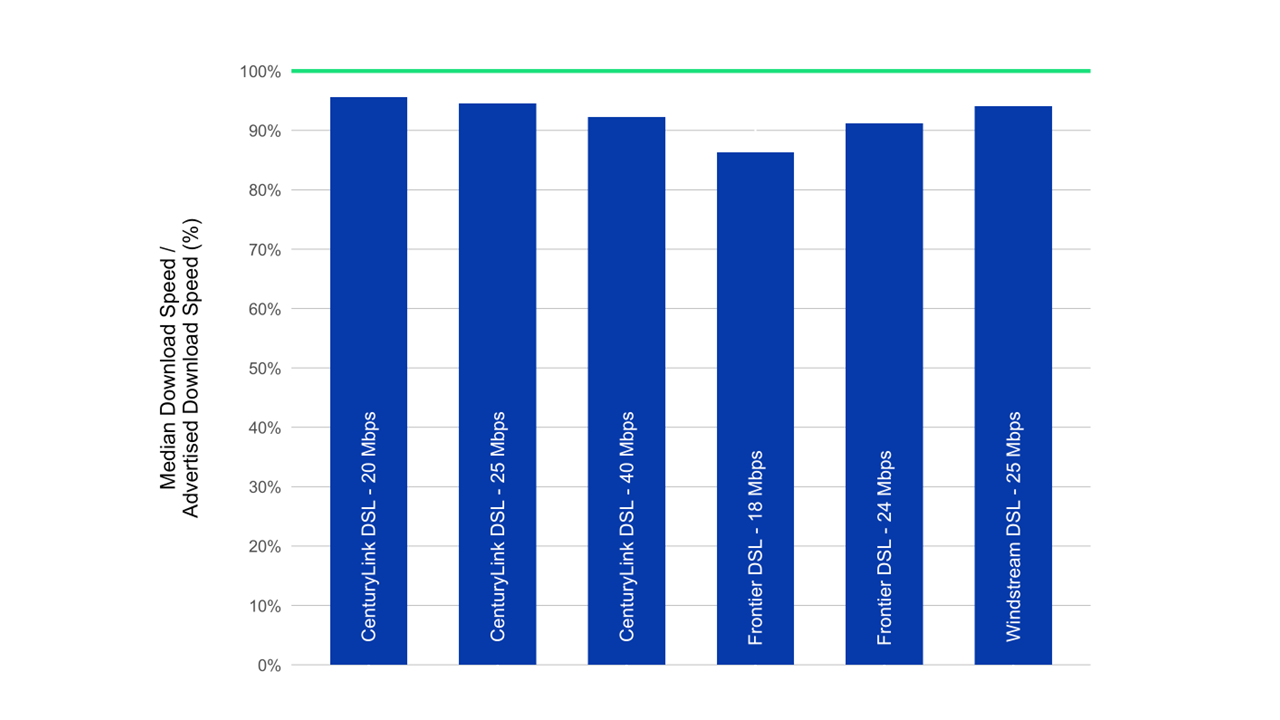

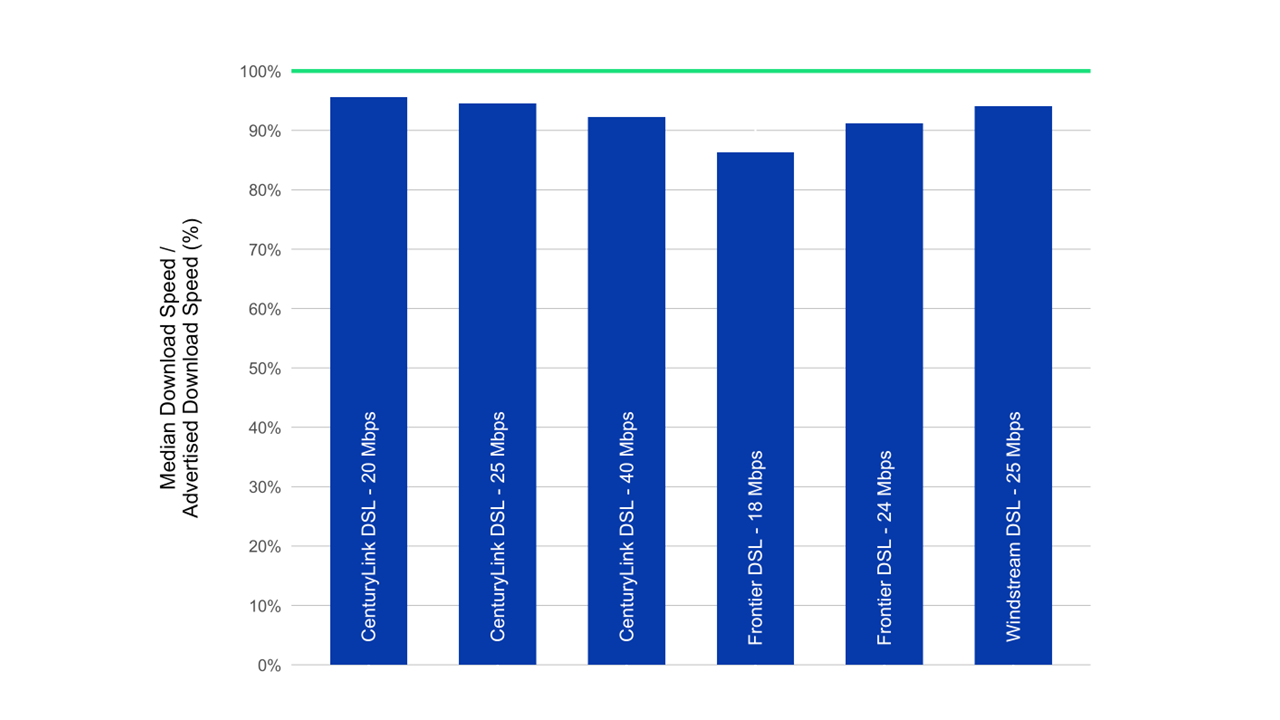

- Chart 17.3: The ratio of median download speed to advertised download speed, by ISP (18-40 Mbps).

- Chart 17.4: The ratio of median download speed to advertised download speed, by ISP (50-75 Mbps).

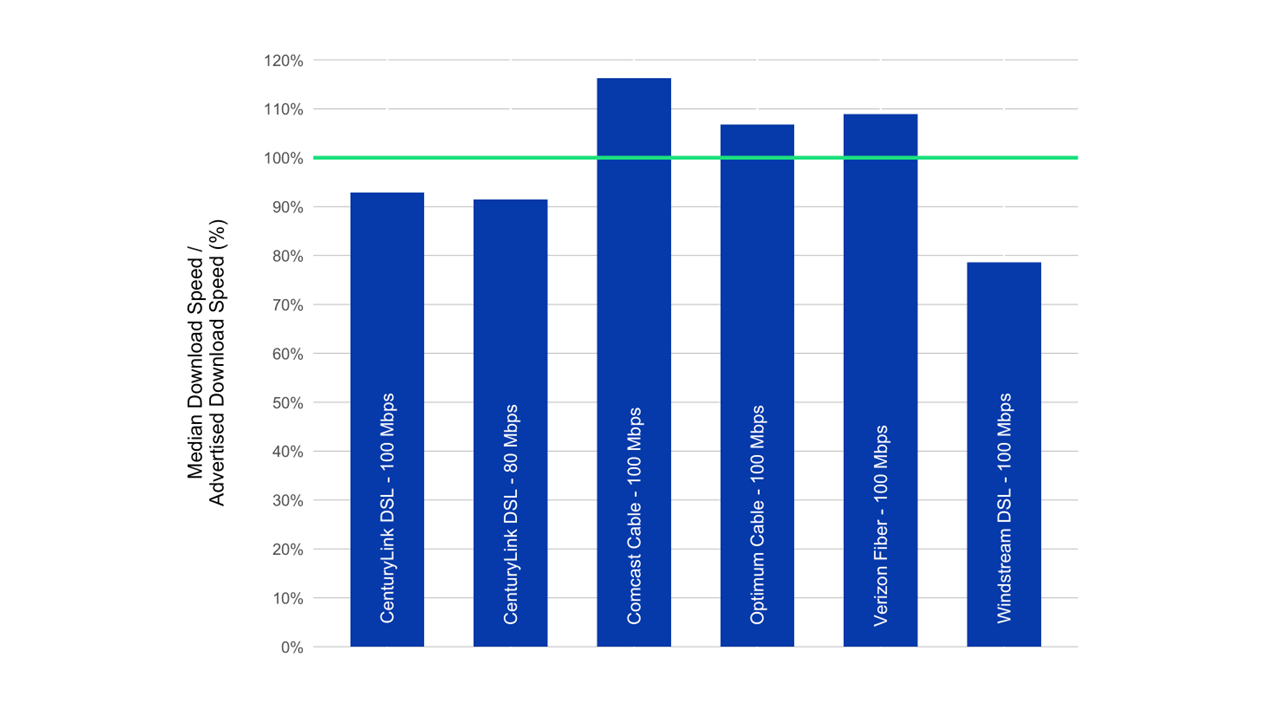

- Chart 17.5: The ratio of median download speed to advertised download speed, by ISP (80-100 Mbps).

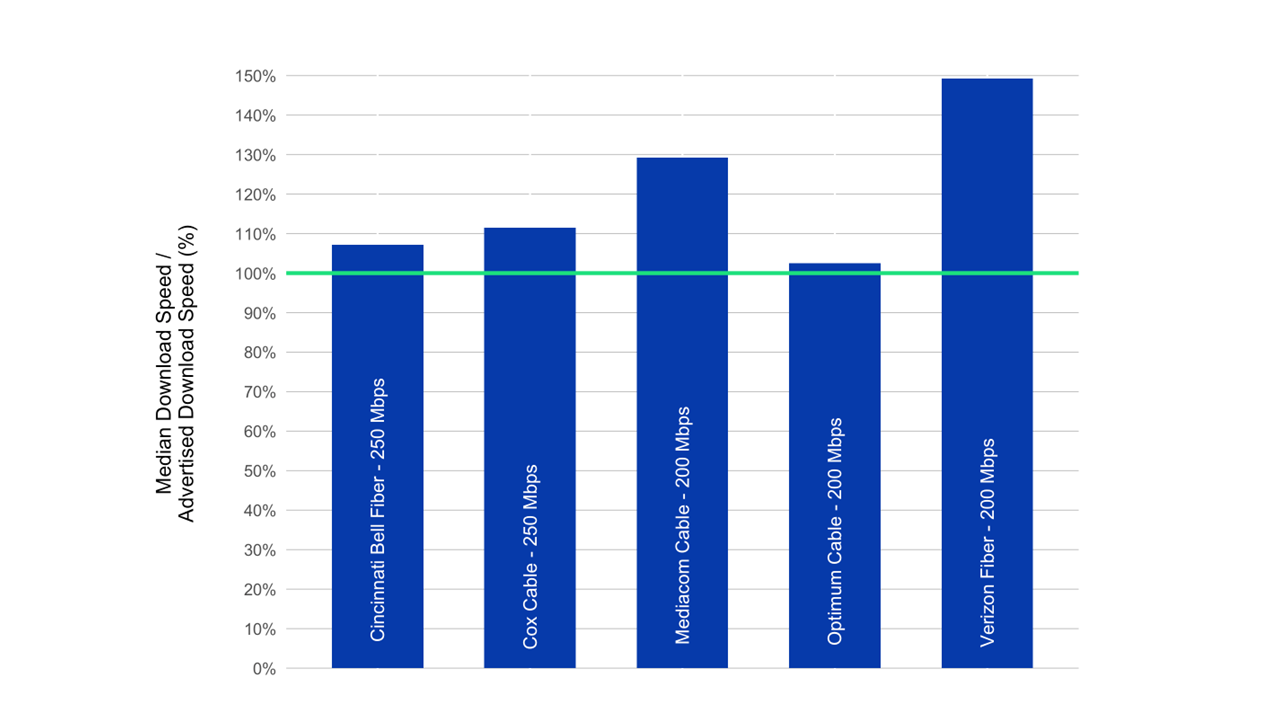

- Chart 17.6: The ratio of median download speed to advertised download speed, by ISP (150-250 Mbps).

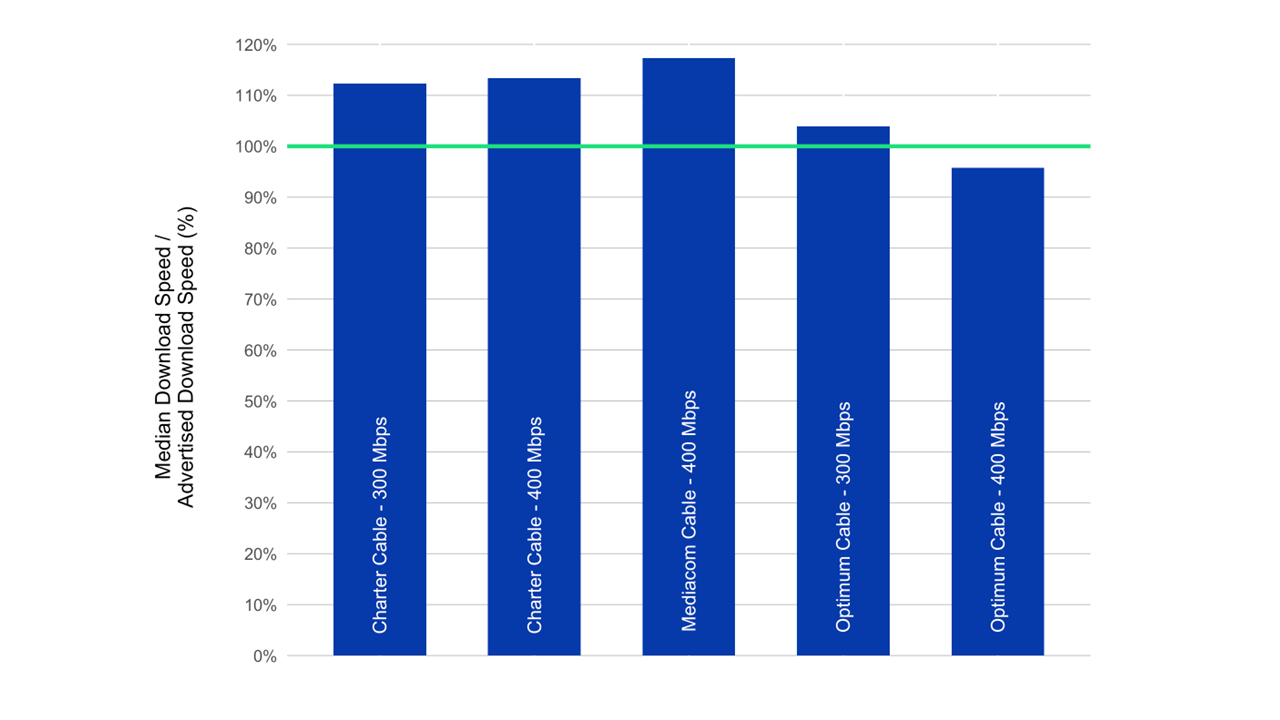

- Chart 17.7: The ratio of median download speed to advertised download speed, by ISP (300-400 Mbps).

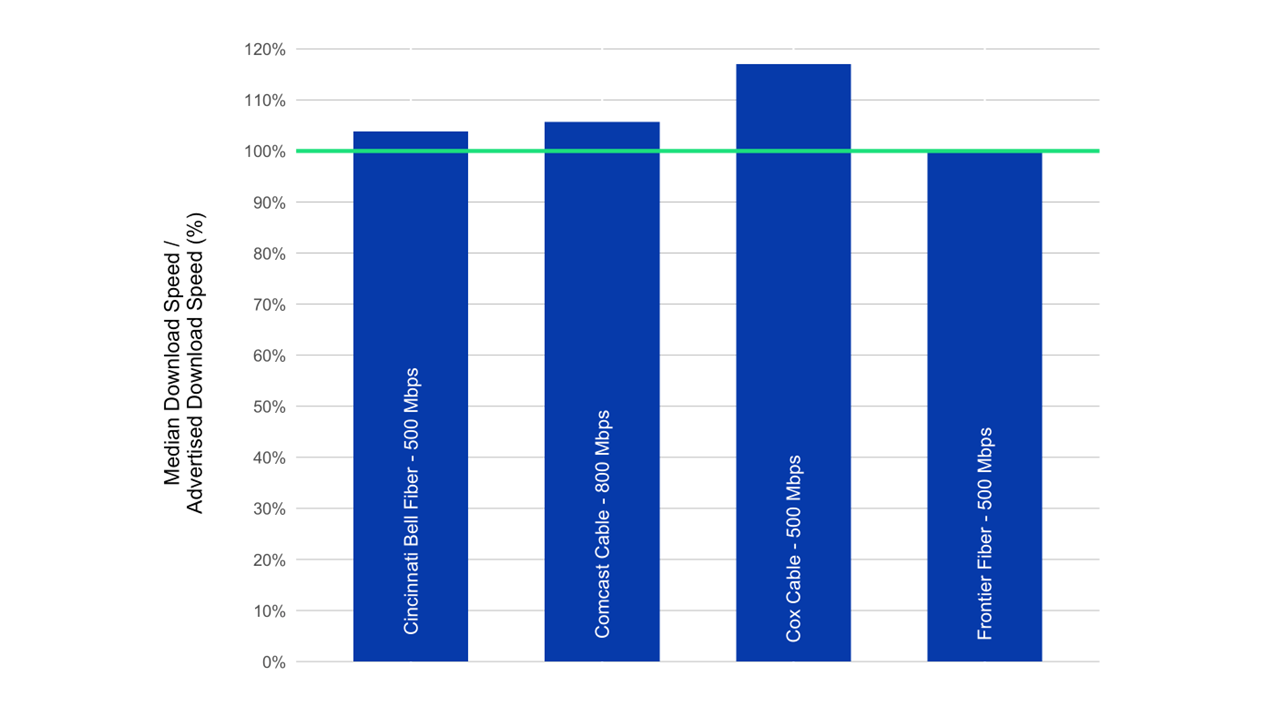

- Chart 17.8: The ratio of median download speed to advertised download speed, by ISP (500-800 Mbps).

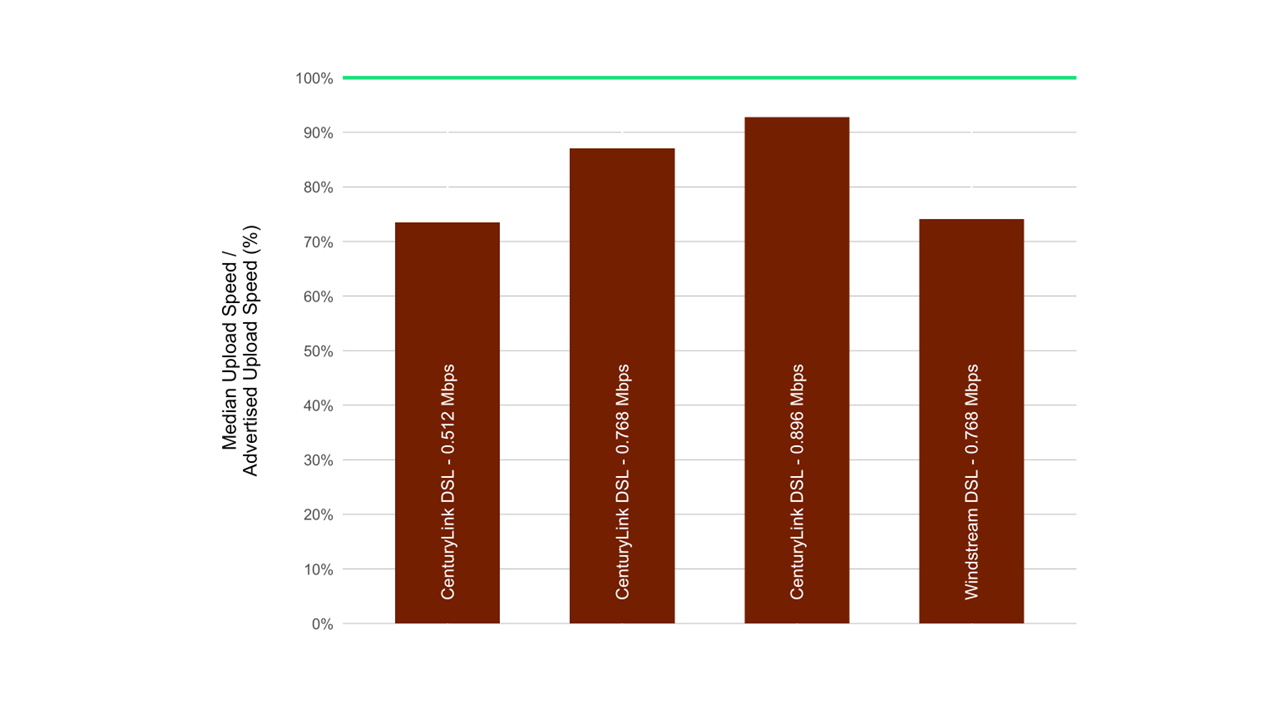

- Chart 18.1: The ratio of median upload speed to advertised upload speed, by ISP (0.384 – 0.896 Mbps).

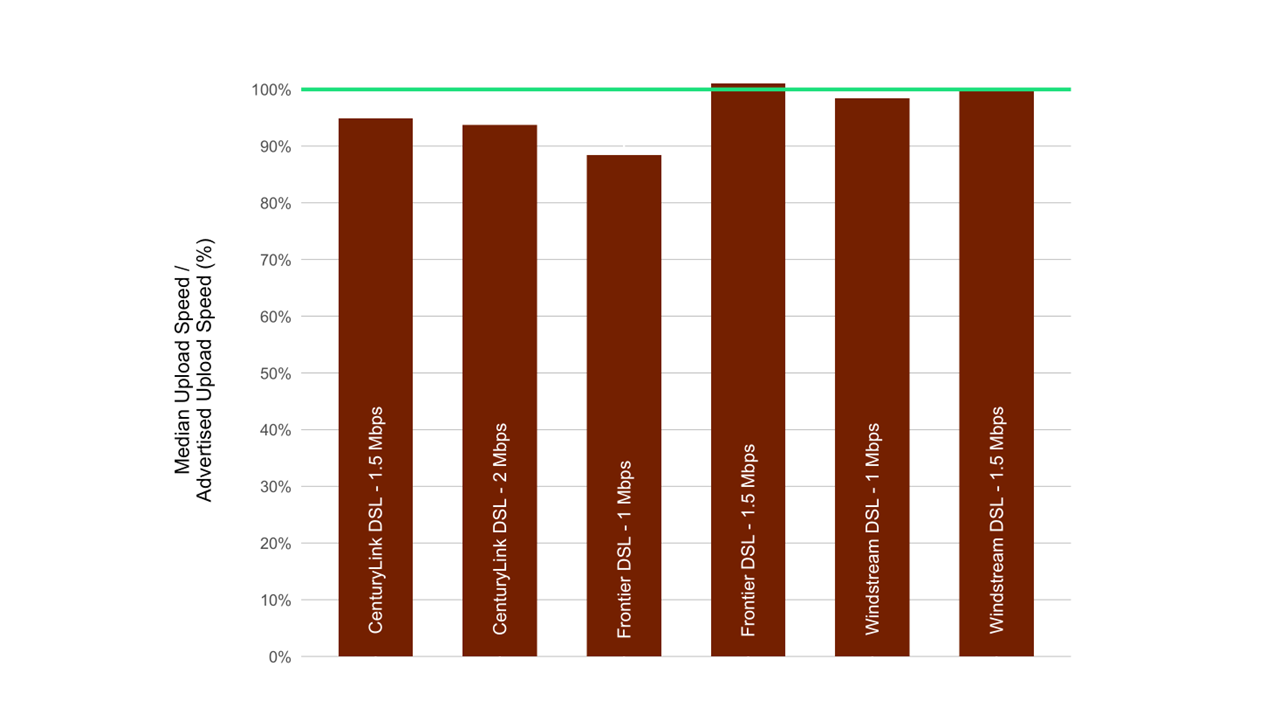

- Chart 18.2: The ratio of median upload speed to advertised upload speed, by ISP (1-3 Mbps).

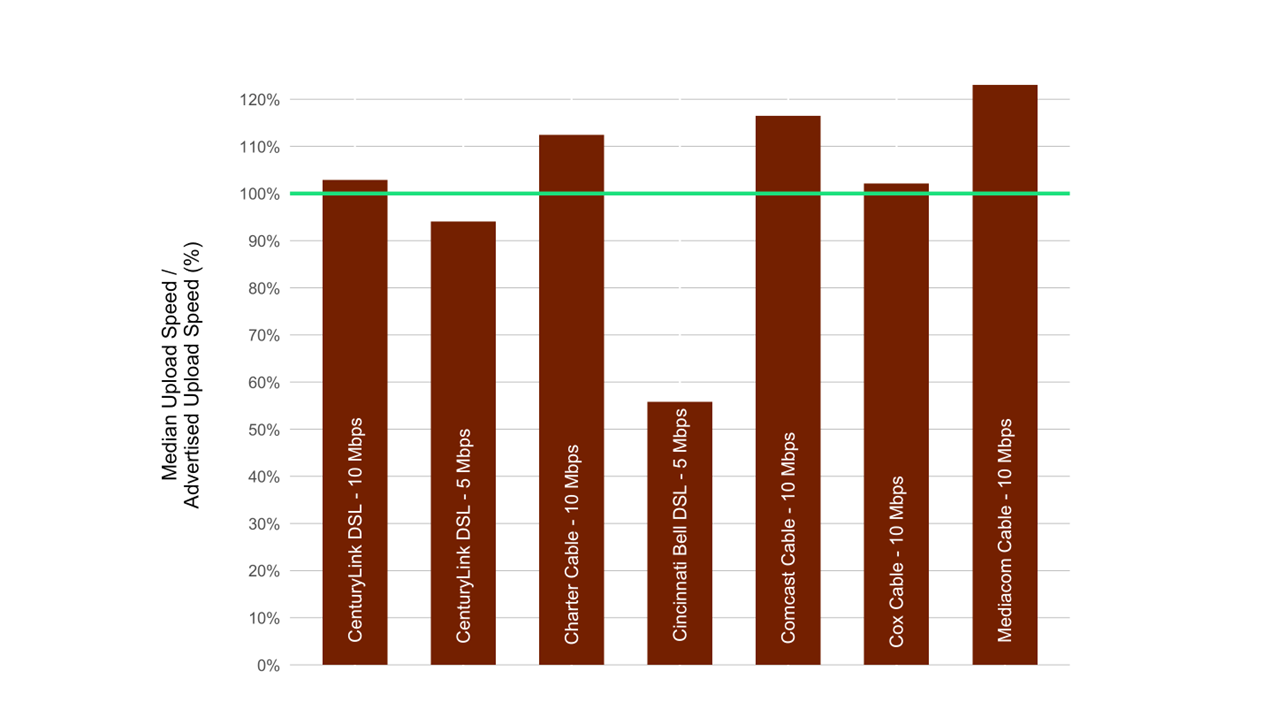

- Chart 18.3: The ratio of median upload speed to advertised upload speed, by ISP (5-10 Mbps).

- Chart 18.4: The ratio of median upload speed to advertised upload speed, by ISP (20-50 Mbps).

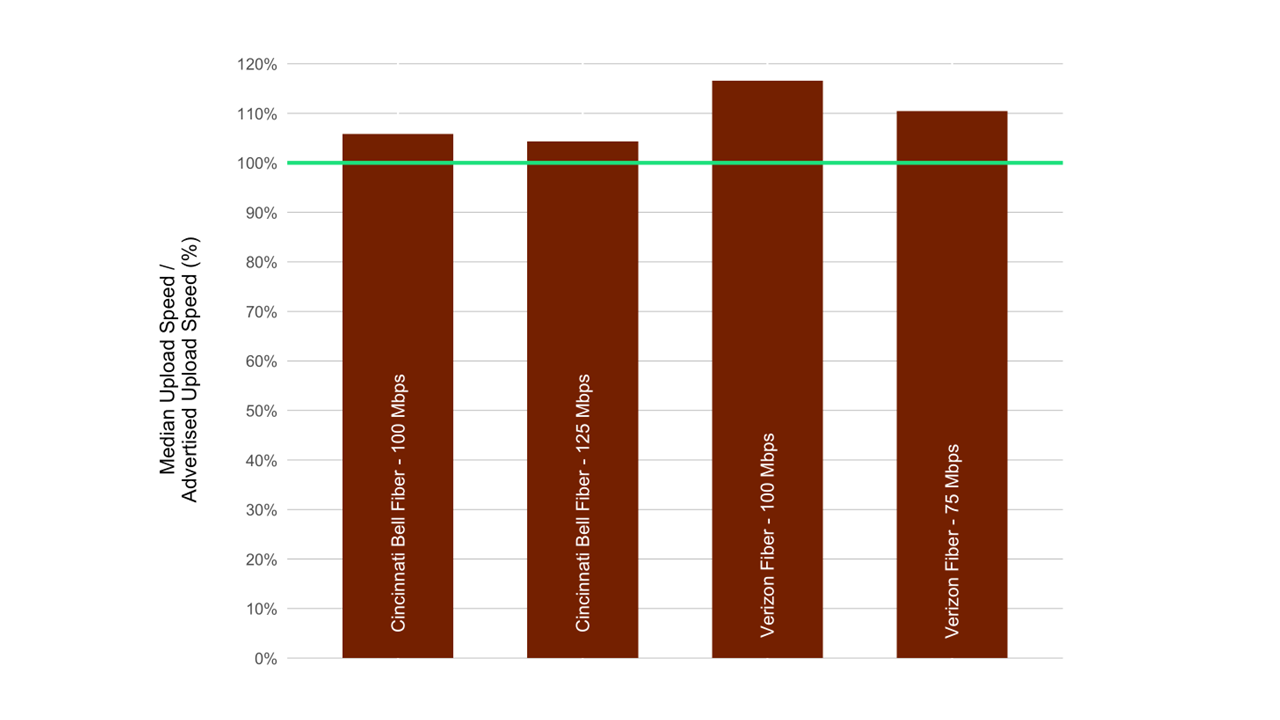

- Chart 18.5: The ratio of median upload speed to advertised upload speed, by ISP (75–125 Mbps).

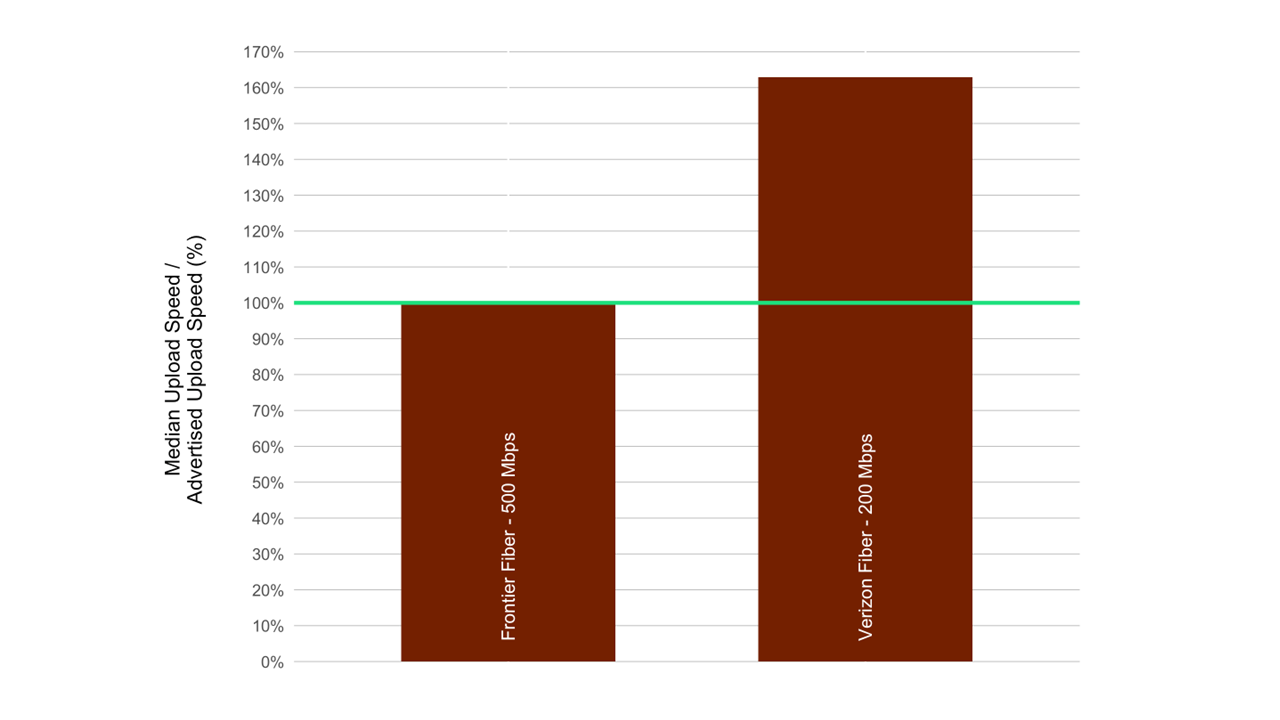

- Chart 18.6: The ratio of median upload speed to advertised upload speed, by ISP (200–1000 Mbps).

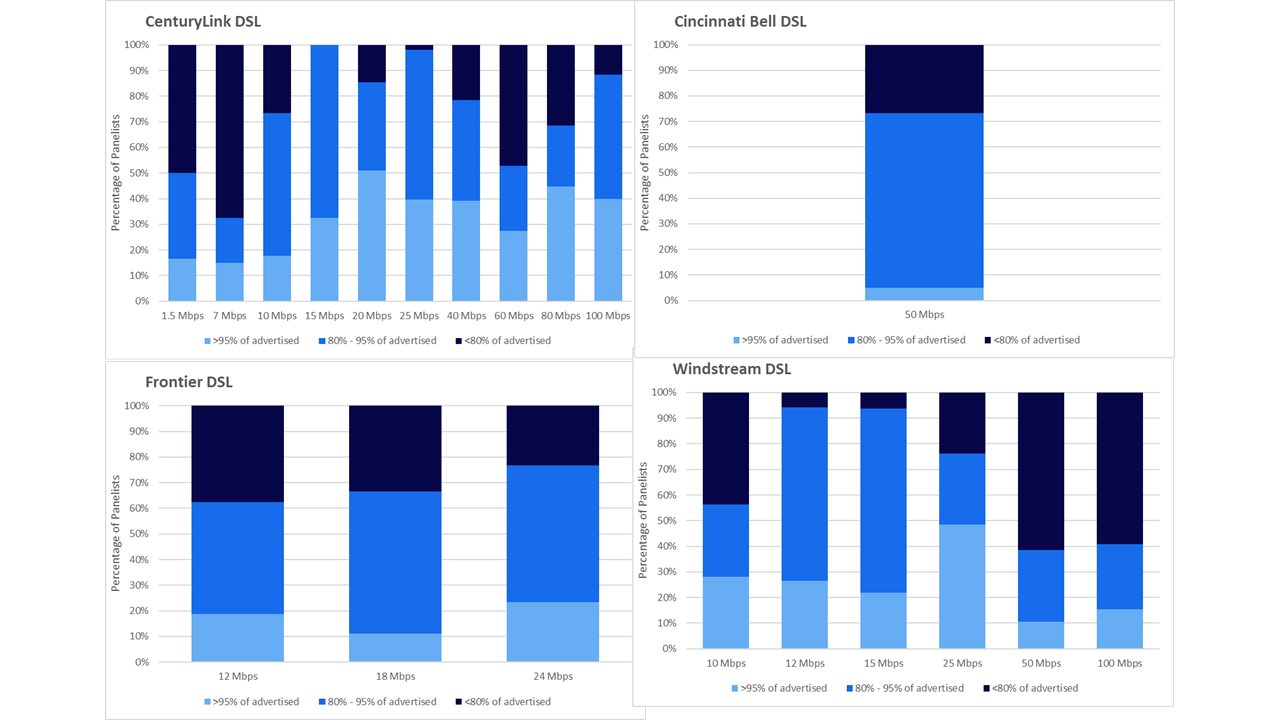

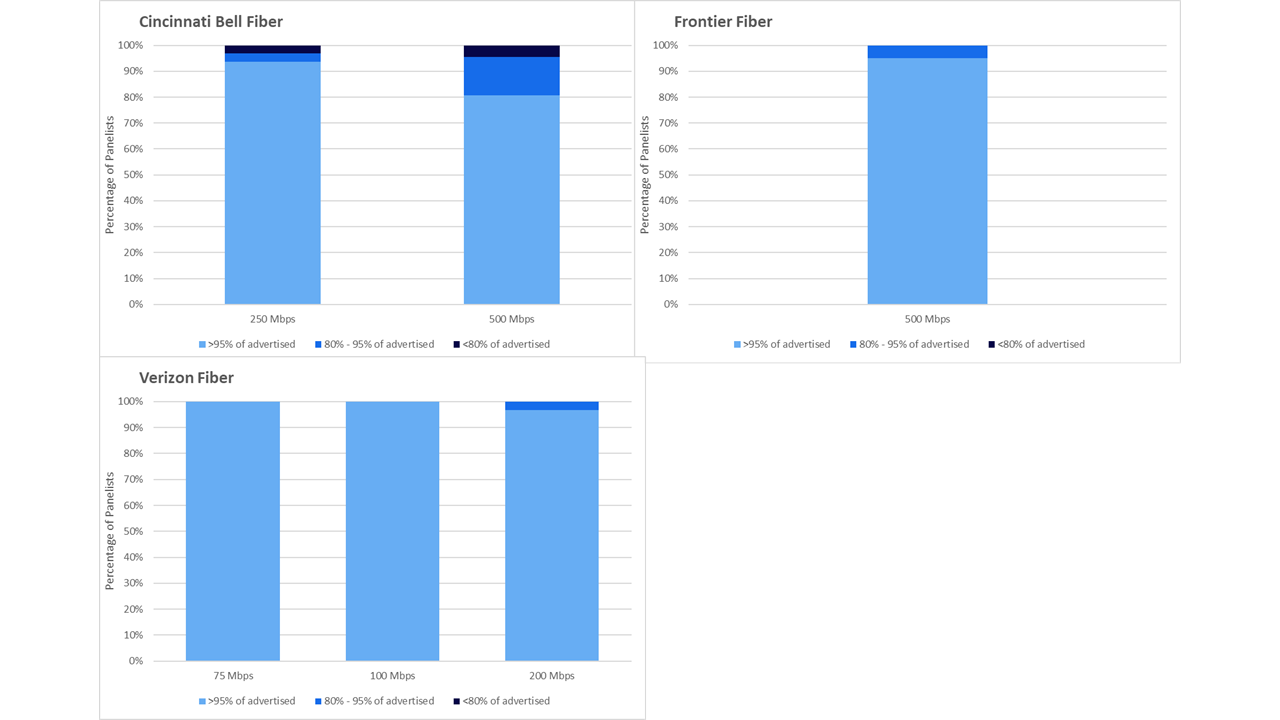

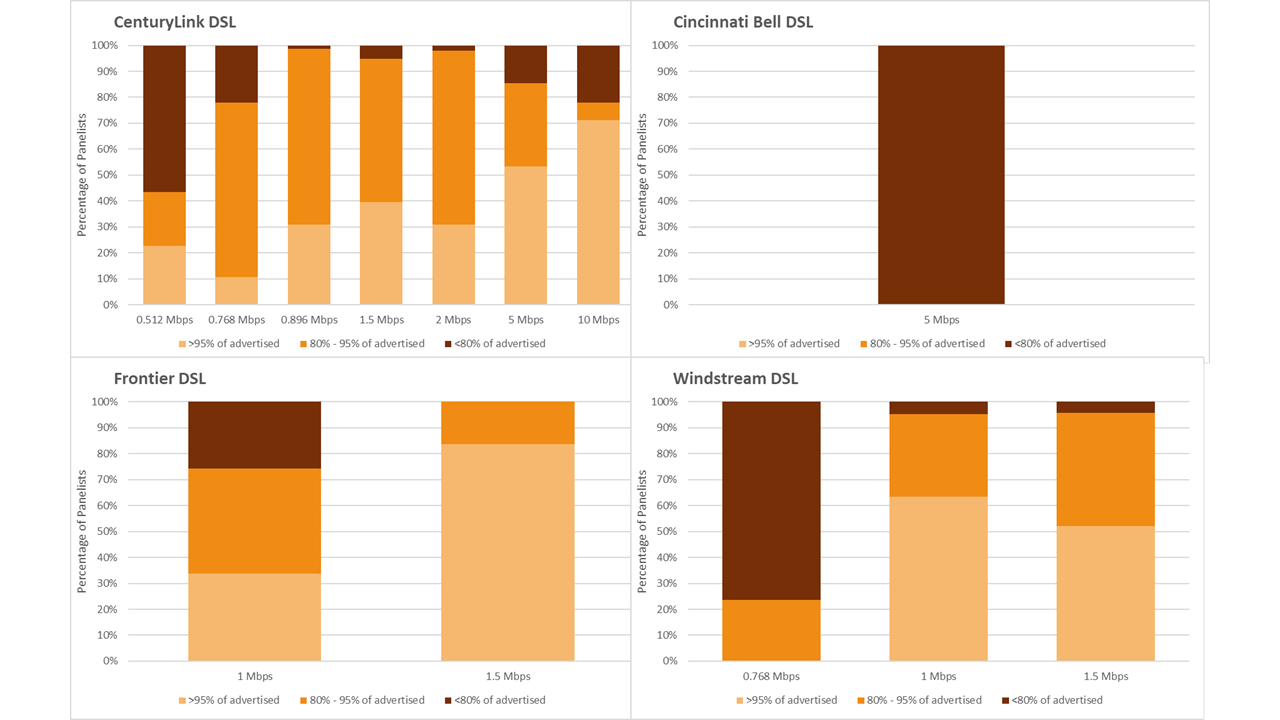

- Chart 19.1: The percentage of consumers whose median download speed was greater than 95%, between 80% and 95%, or less than 80% of the advertised download speed, by service tier (DSL).

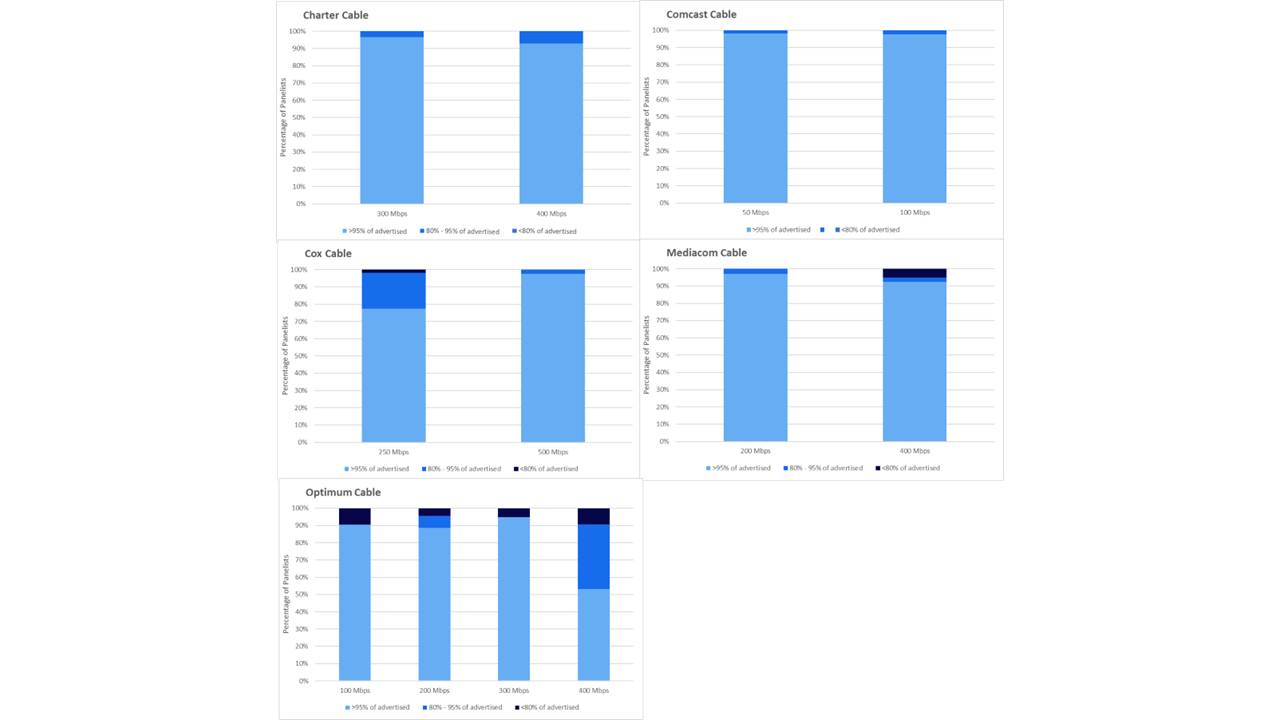

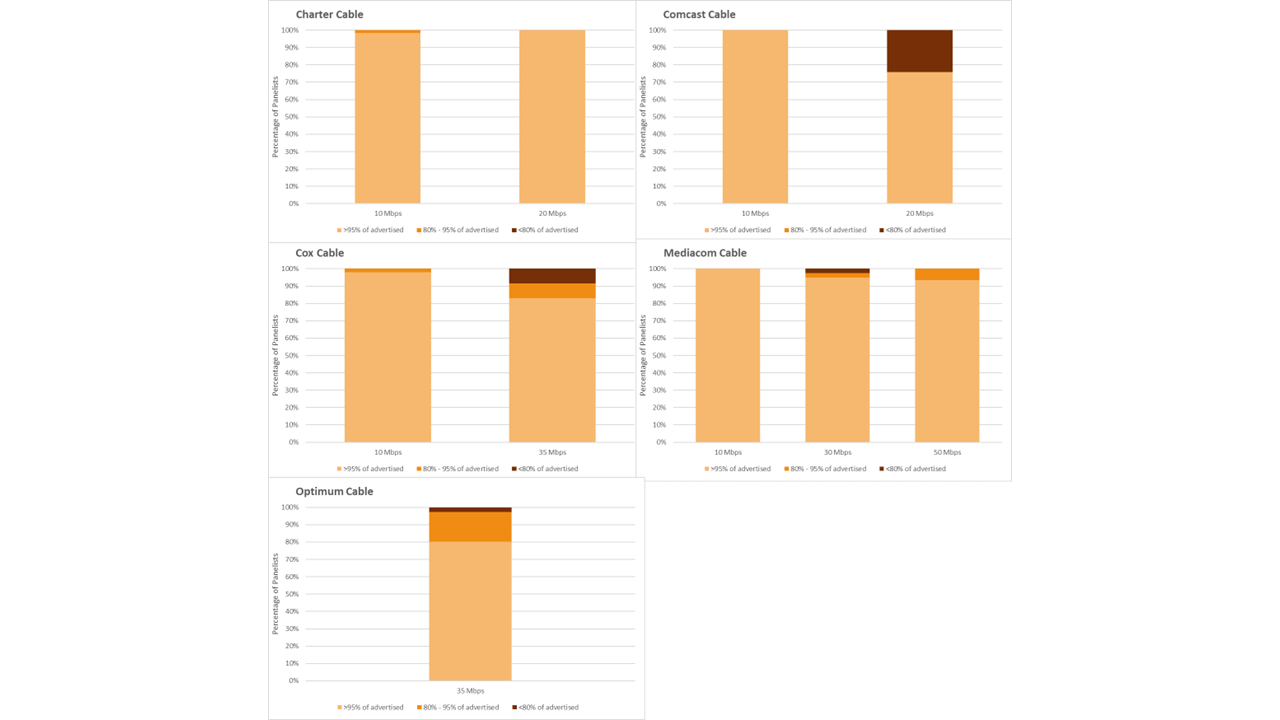

- Chart 19.2: The percentage of consumers whose median download speed was greater than 95%, between 80% and 95%, or less than 80% of the advertised download speed (cable).

- Chart 19.3: The percentage of consumers whose median download speed was greater than 95%, between 80% and 95%, or less than 80% of the advertised download speed (fiber).

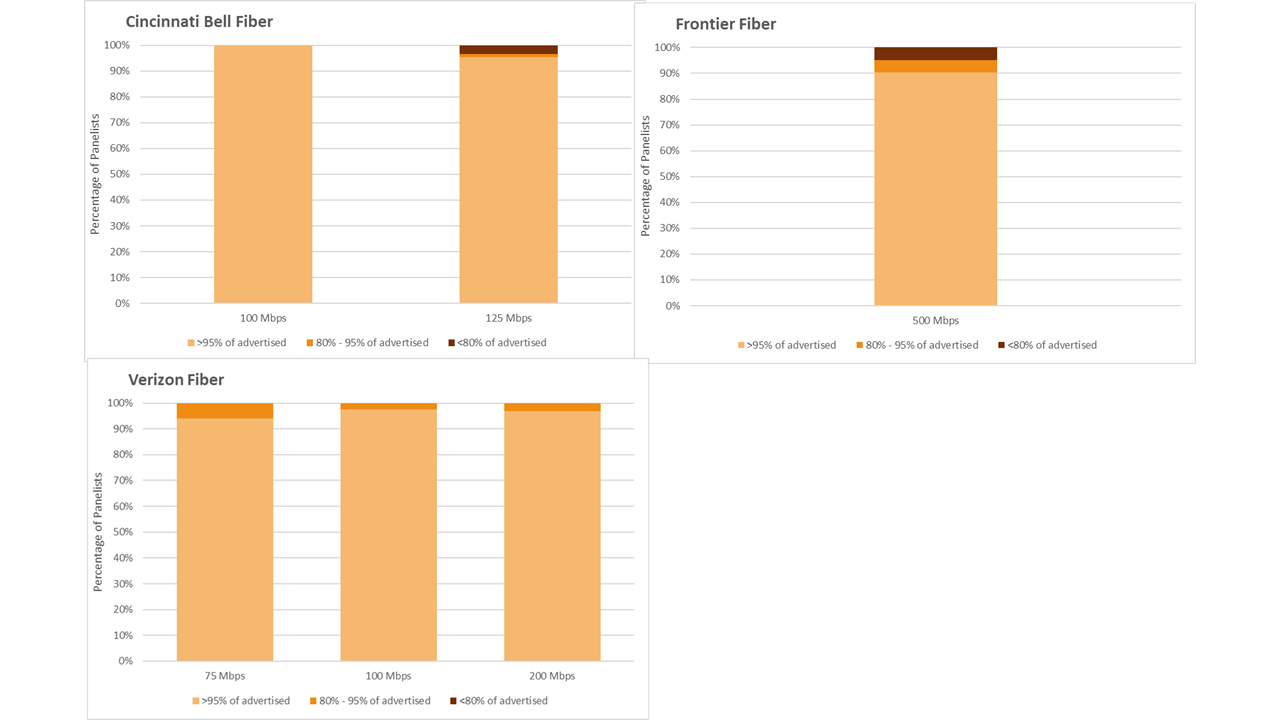

- Chart 20.1: The percentage of consumers whose median upload speed was greater than 95%, between 80% and 95%, or less than 80% of the advertised upload speed, by service tier (DSL)

- Chart 20.2: The percentage of consumers whose median upload speed was greater than 95%, between 80% and 95%, or less than 80% of the advertised upload speed (cable)

- Chart 20.3: The percentage of consumers whose median upload speed was greater than 95%, between 80% and 95%, or less than 80% of the advertised upload speed (fiber)

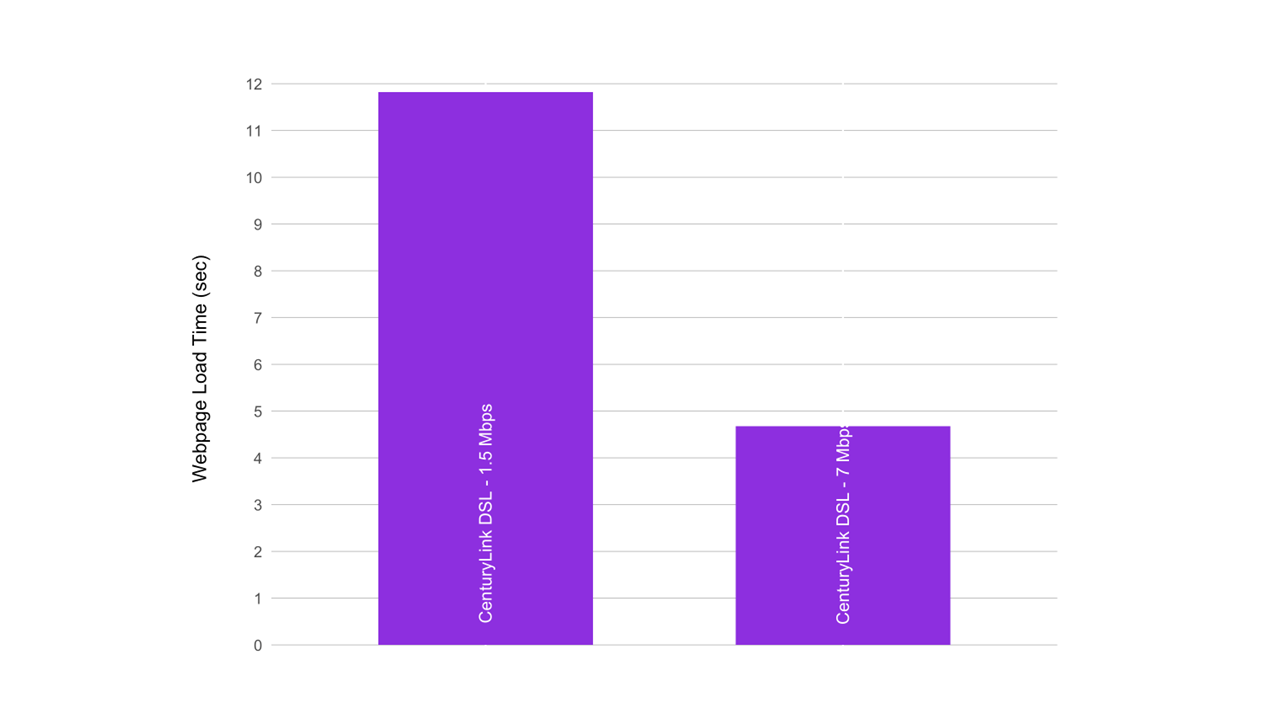

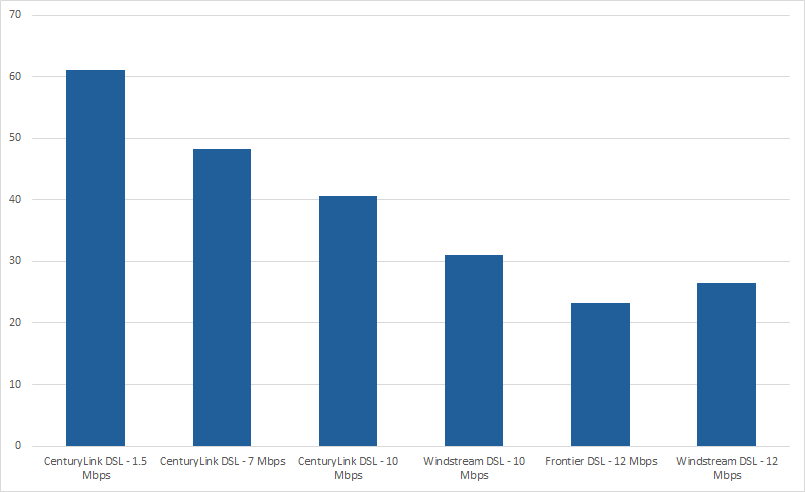

- Chart 21.1: Average webpage download time, by ISP (1.5-7 Mbps)

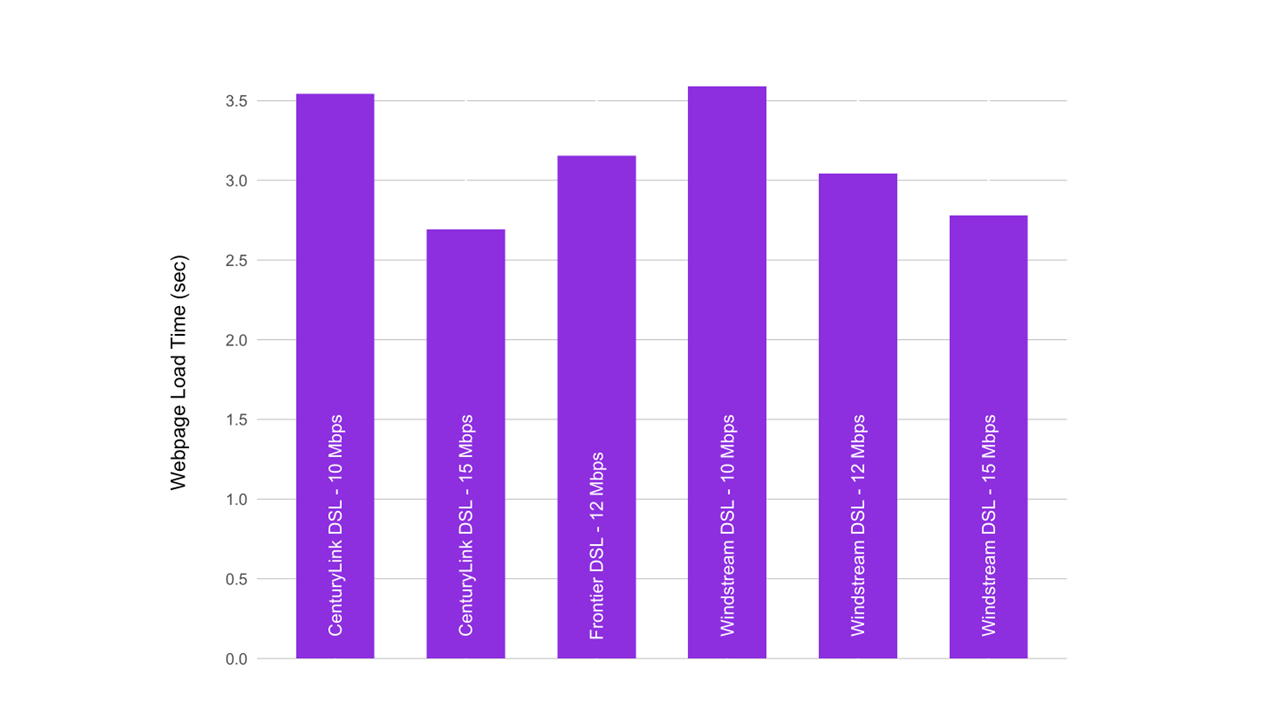

- Chart 21.2: Average webpage download time, by ISP (10-15 Mbps)

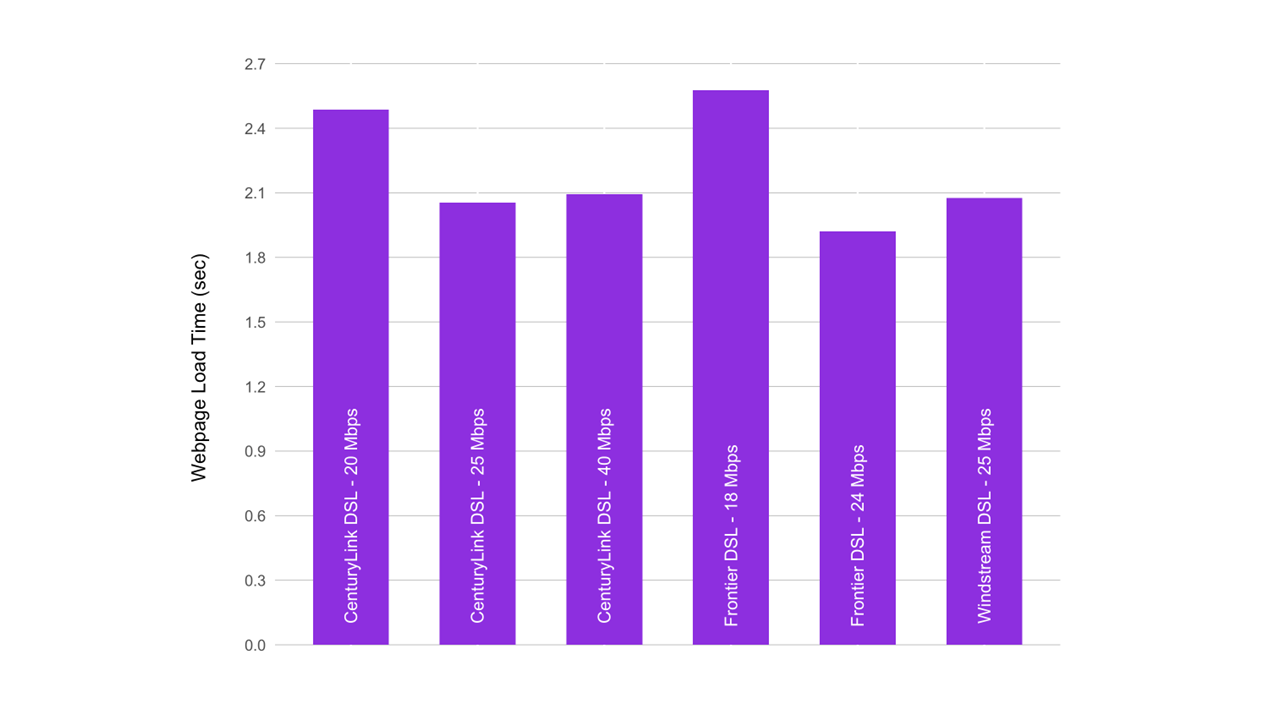

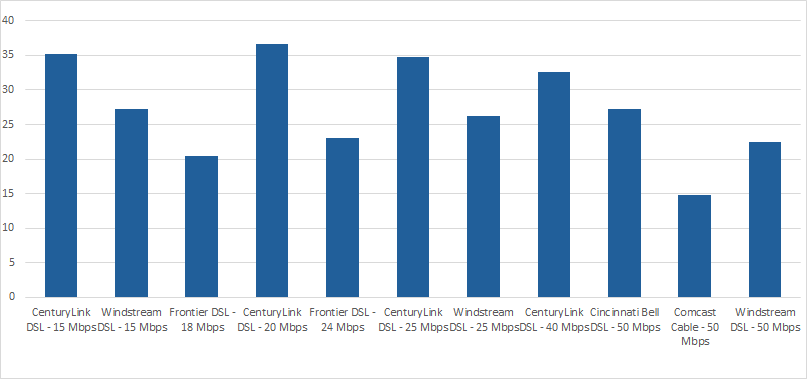

- Chart 21.3: Average webpage download time, by ISP (18-40 Mbps)

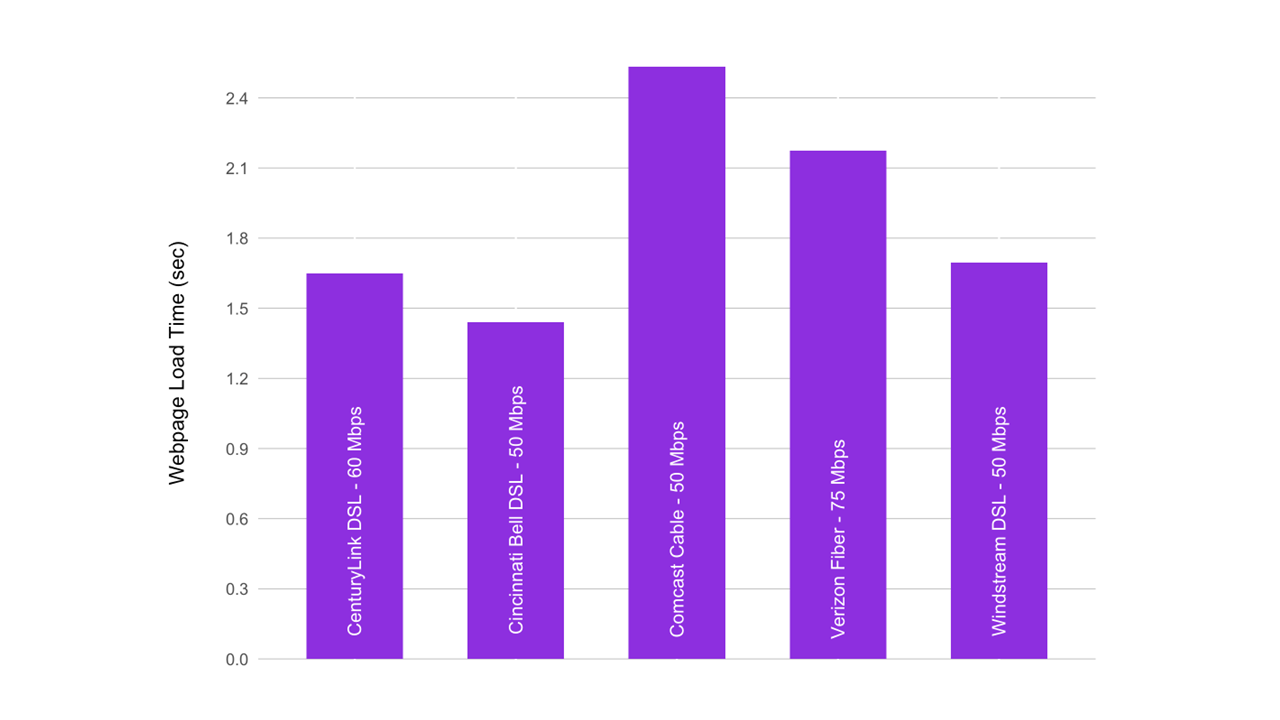

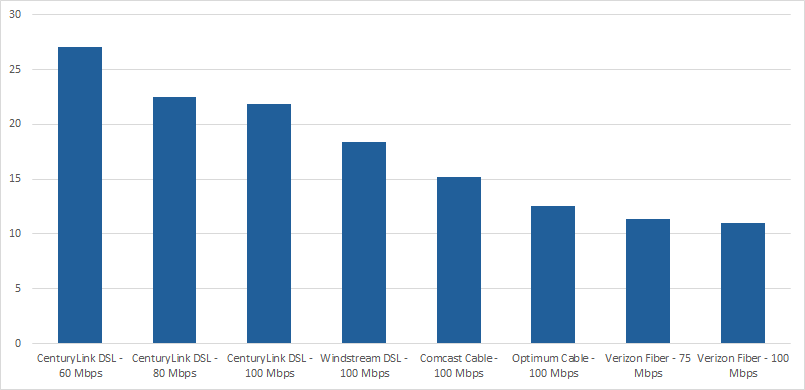

- Chart 21.4: Average webpage download time, by ISP (50-75 Mbps)

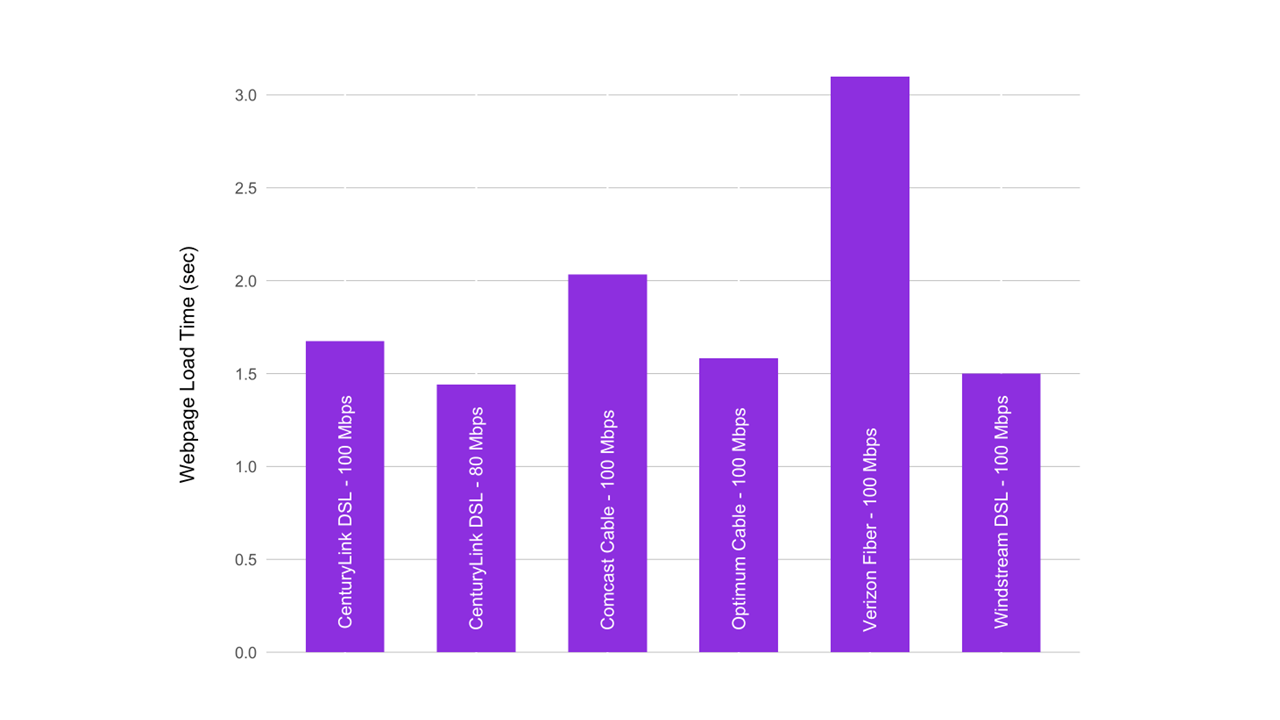

- Chart 21.5: Average webpage download time, by ISP (80-100 Mbps)

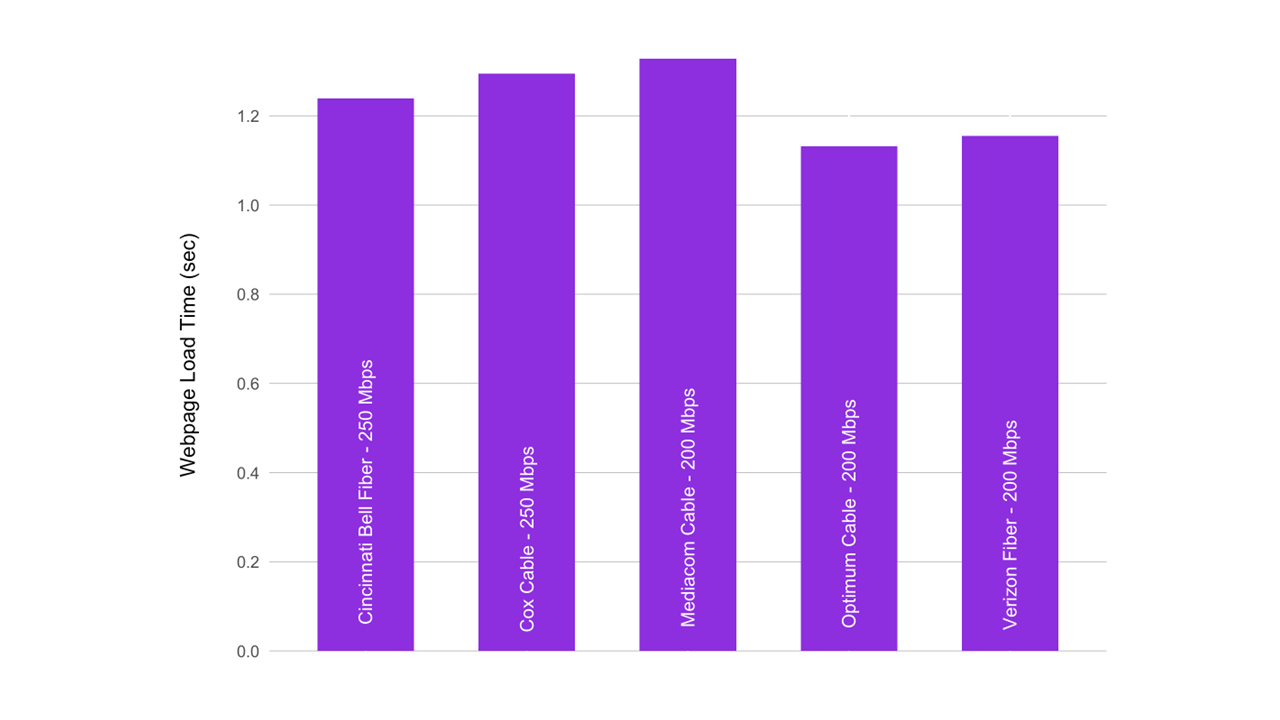

- Chart 21.6: Average webpage download time, by ISP (150-250 Mbps)

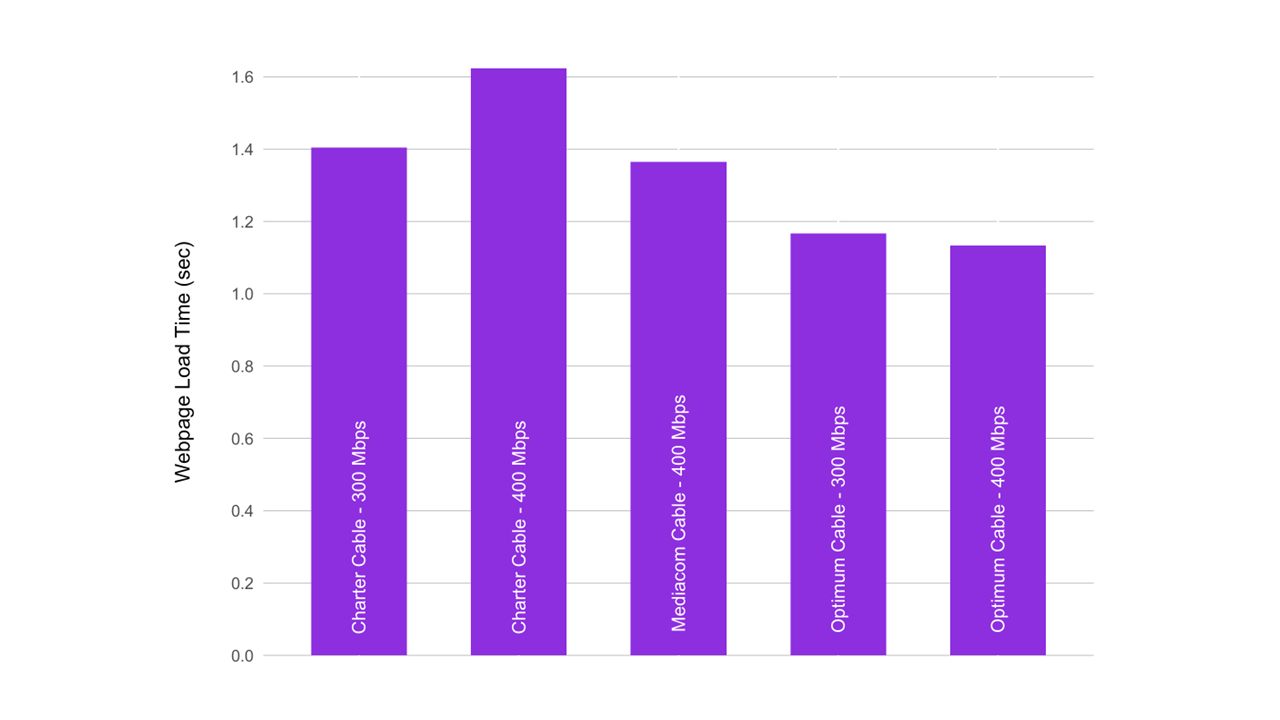

- Chart 21.7: Average webpage download time, by ISP (300-400 Mbps)

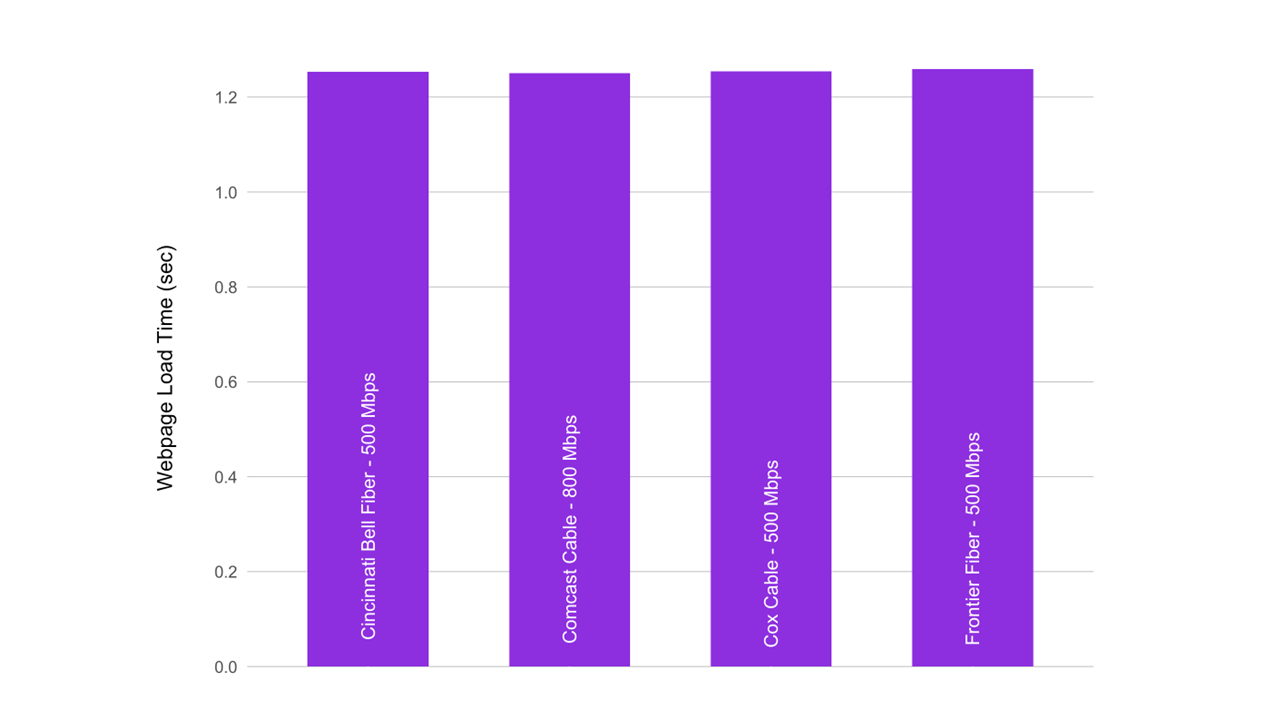

- Chart 21.8: Average webpage download time, by ISP (500-800 Mbps)

- Chart 22: Idle Latency by ISP tier

- Chart A.1: Anonymized performance of ISPs’ Gigabit tiers

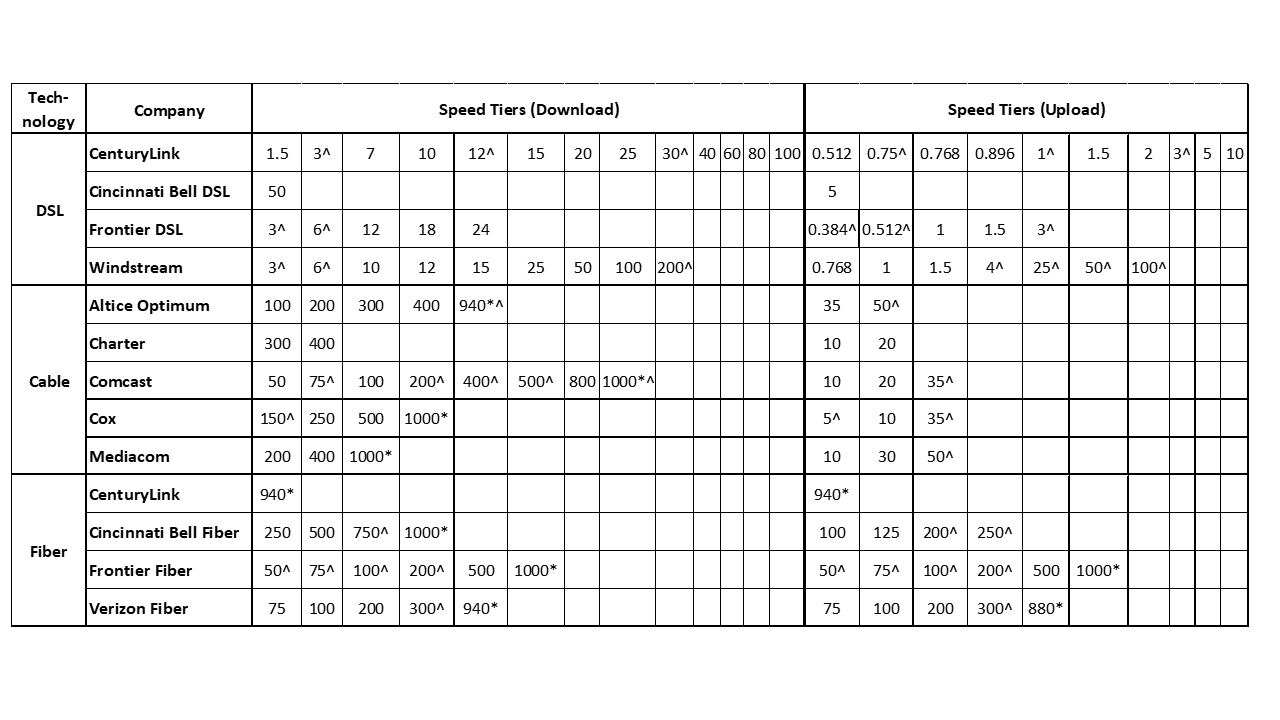

- Table 1: List of ISP service tiers whose broadband performance was measured in this report

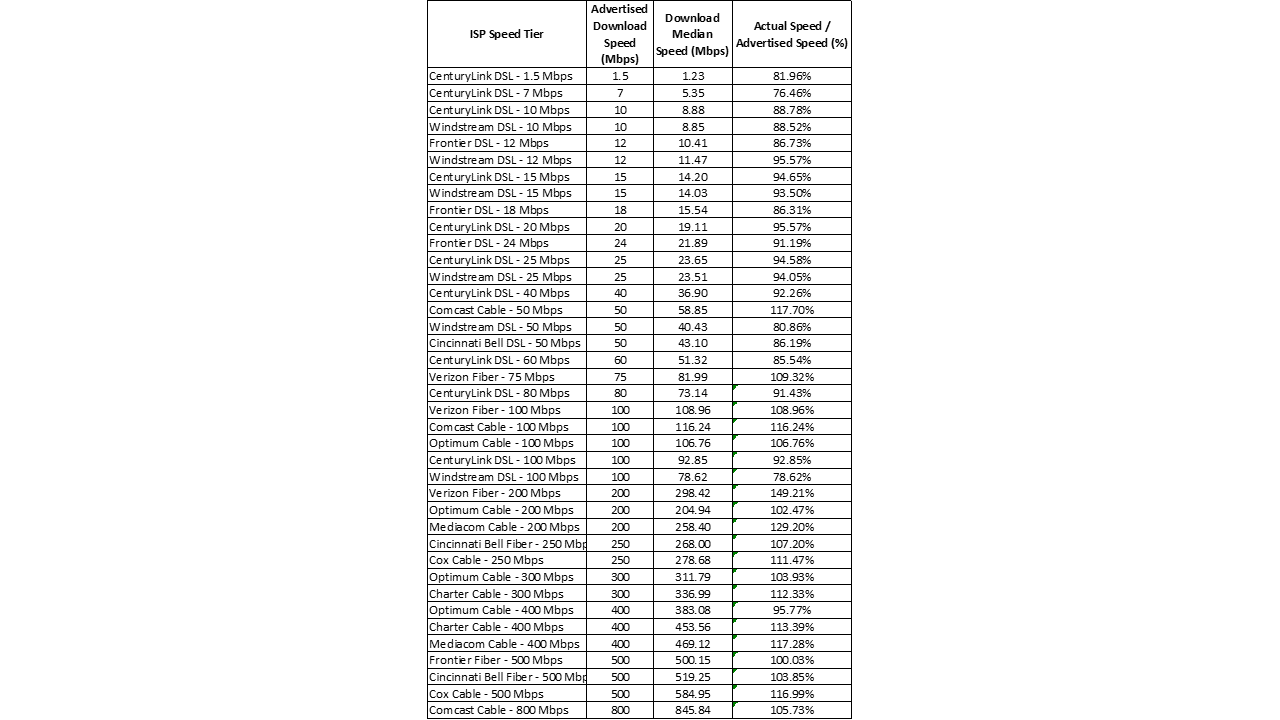

- Table 2: Peak Period Median download speed, by ISP

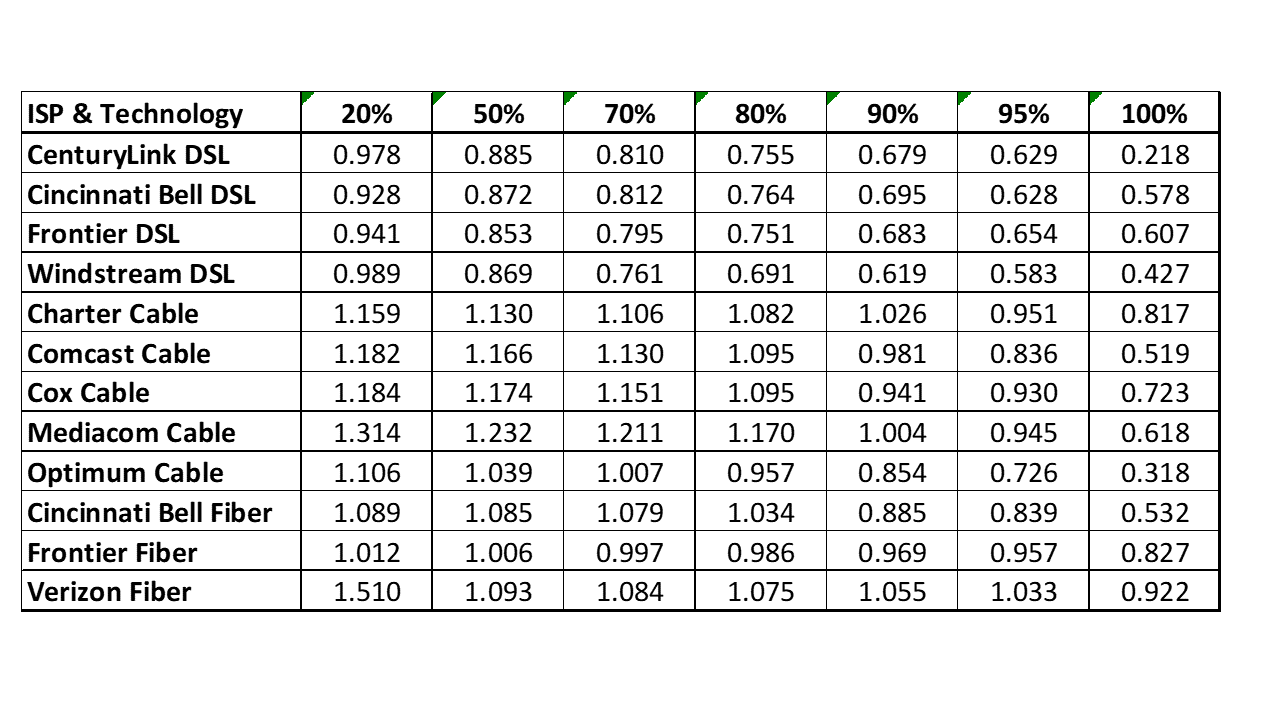

- Table 3: Complementary cumulative distribution of the ratio of median download speed to advertised download speed, by technology, by ISP

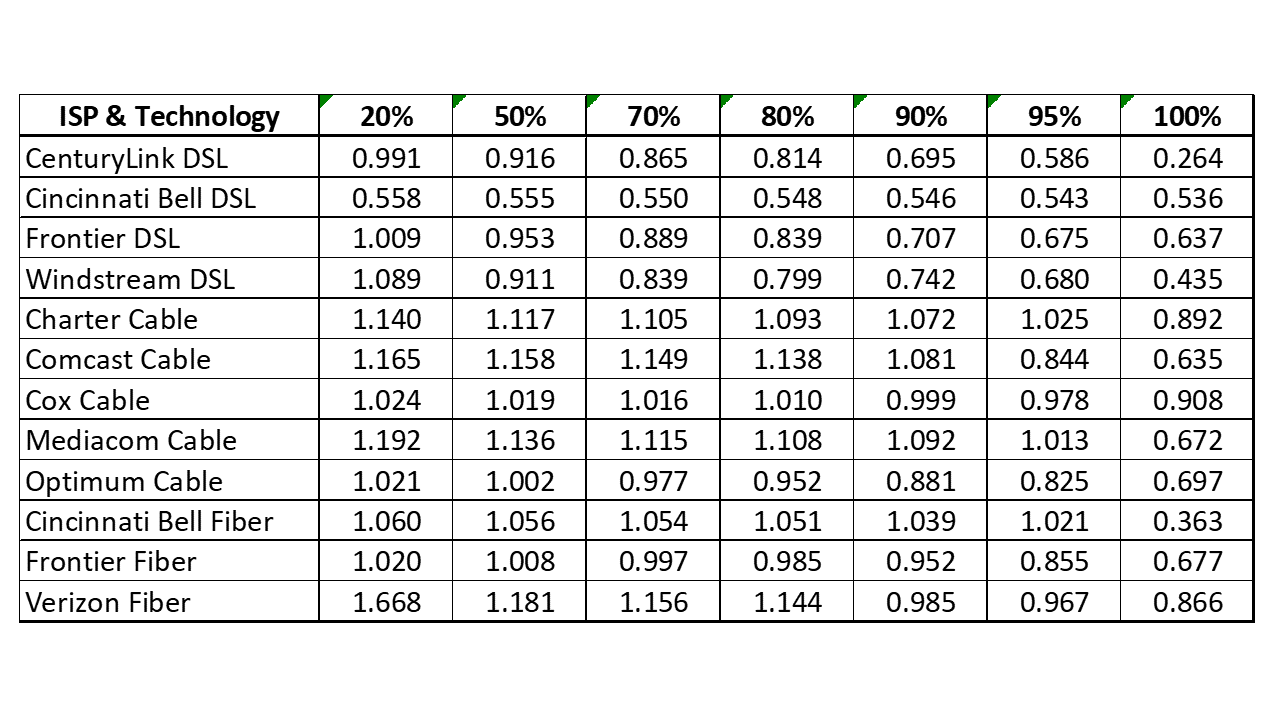

- Table 4: Complementary cumulative distribution of the ratio of median upload speed to advertised upload speed, by technology, by ISP

1. Executive Summary

The Thirteenth Measuring Broadband America Fixed Broadband Report (“Thirteenth Report” or “Report”) contains validated data collected in September and October 2022[1] from fixed Internet Service Providers (ISPs) as part of the Federal Communications Commission’s (FCC’s) Measuring Broadband America (MBA) program. This program is an ongoing nationwide study of consumer broadband performance in the United States. Its goal is to measure the network performance delivered on a representative sample of service offerings and of residential broadband consumers across the country.[2] This representative sample is referred to as the MBA “panel.” Thousands of volunteer panelists are drawn from the subscriber bases of ISPs that collectively serve a large percentage of the residential marketplace.

The initial Measuring Broadband America Fixed Broadband Report was published in August 2011[3] and presented the first broad-scale study of directly measured consumer broadband performance throughout the United States. Including this current Report, thirteen reports have now been issued.[4] These reports provide a snapshot of fixed broadband Internet access service performance in the United States utilizing a comprehensive set of performance metrics. As part of an open data program, all methodologies used in the program are fully documented, and all collected data is published for public use without any restrictions. The collected performance data can be used, if desired, for additional studies and analysis.

The Eleventh and Twelfth Reports (based on data collected in September-October 2020 and 2021, respectively) covered a period of significant change in the way a large part of the workforce used the Internet: driven by the coronavirus pandemic, usage shifted from working principally at office locations to working remotely from home. During the period covered by the current (Thirteenth) Report, a significant number of people continued to work from home, which has continued to place increased traffic on residential service providers’ networks. Despite these changes, peak usage hours have remained the same as before the pandemic, i.e., 7 p.m. to 11 p.m. local time.

A. Major Findings Of The Thirteenth Report

The key findings of this report are:

- Ten ISPs were evaluated in this report. Of these, CenturyLink, Cincinnati Bell, and Frontier employed multiple broadband technologies across the U.S., whereas the rest were single-technology providers. Overall, 12 different ISP/technology configurations were evaluated in this report.

- The maximum advertised download speeds amongst the service tiers offered by ISPs and measured by the FCC ranged from 200 Mbps to 1,000 Mbps for the period covered by this report.

- The weighted average[5] advertised download speed of the participating ISPs was 467 Mbps, representing an increase of 52% from the Twelfth Report and 141% from the Eleventh Report.

- Of the 12 ISP/technology configurations tested, eight delivered measured download speeds of 100% or more of their advertised speeds during peak hours (7 p.m. to 11 p.m. local time). The other four delivered between 86% and 90% of their advertised speeds.

- In addition to download and upload speed measurements, this report measures how consistently ISPs deliver their advertised speeds, using our “80/80” metric. The 80/80 metric measures the minimum percentage of the advertised speed experienced by at least 80% of subscribers for at least 80% of the time during peak periods. By this measure, six of the 12 ISP/technology configurations performed at or above their advertised speed during peak hours. The lowest-performing technology by this measure was DSL, with providers achieving between 63% and 72% of the advertised speed.

- This report also provides latency under load results for ISP tiers. Latency under load, also referred to as working latency, measures the average round-trip delay of packets while the connection is saturated with traffic. As expected, working latency results are higher than idle latency results, which measure the average round-trip delay when there is minimal or no home network (LAN) traffic for the duration of the measurement.

- An Appendix showing the performance of Gigabit tiers has been included.

B. Speed Performance Metrics

Speed (both download and upload) continues to be one of the key metrics reported by the MBA program. We report each ISP’s broadband performance as the median[6] of the speeds experienced by panelists within a specific service tier. These reports focus mainly on the service tiers most commonly used by an ISP’s subscribers.[7]

Additionally, consistent with previous Reports, we compute average per-ISP performance by weighting the median speed for each service tier by the number of subscribers in that tier. Similarly, to calculate the composite average speed across all ISPs in a given year, we weight each ISP’s median speed by that ISP’s share of the total number of subscribers across all ISPs.

In calculating these weighted medians, we draw on two sources for the number of subscribers per service tier. ISPs may voluntarily provide subscriber counts for each surveyed service tier, which we treat as the most recent and authoritative data; many ISPs have chosen to do so.[8] When an ISP does not provide this information, we instead rely on the FCC’s Form 477 data.[9] All facilities-based broadband providers are required to file data with the FCC twice a year via Form 477 regarding deployment of broadband services, including subscriber counts. For this report, we used the Form 477 data from the June preceding the testing period.
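The two levels of weighting described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the program's processing code: the `Tier` structure, the function names, and the example numbers are hypothetical, and we assume that an ISP's weight in the composite is the sum of the subscriber counts of its measured tiers.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    """One measured service tier (hypothetical structure for illustration)."""
    median_mbps: float  # median of the speeds measured by panelists on this tier
    subscribers: int    # ISP-provided count, or Form 477 data if unavailable


def weighted_mean(values, weights):
    """Weighted arithmetic mean; the weights need not sum to 1."""
    values, weights = list(values), list(weights)
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(v * w for v, w in zip(values, weights)) / total


def isp_speed(tiers):
    """Per-ISP figure: tier medians weighted by each tier's subscriber count."""
    return weighted_mean((t.median_mbps for t in tiers),
                         (t.subscribers for t in tiers))


def composite_speed(isps):
    """Composite across ISPs: each ISP's figure weighted by its share of all
    subscribers (assumed here to be the sum over its measured tiers)."""
    speeds = [isp_speed(tiers) for tiers in isps.values()]
    counts = [sum(t.subscribers for t in tiers) for tiers in isps.values()]
    return weighted_mean(speeds, counts)


# Hypothetical example data: two ISPs with made-up tiers.
isps = {
    "ISP-A": [Tier(100.0, 3), Tier(300.0, 1)],
    "ISP-B": [Tier(50.0, 4)],
}
print(isp_speed(isps["ISP-A"]))  # 150.0
print(composite_speed(isps))     # 100.0
```

Under this assumption, the composite is algebraically the same as a single subscriber-weighted mean over every measured tier of every ISP ((300 + 300 + 200) / 8 = 100 in the example); if each ISP's total subscriber base were used as its weight instead, the two could differ.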

As in our previous reports, we found that, for a large number of ISPs, the measured speeds experienced by subscribers either nearly met or exceeded advertised service tier speeds. However, consumers have changed their Internet usage habits since we started the MBA program. In 2011, consumers mainly browsed the web and downloaded files; we therefore reported mean broadband speeds, since these statistics were likely to closely mirror user experience. Consumer Internet usage is now dominated by video, with consumers regularly streaming video for entertainment and education.[10] Because streaming requires sustained throughput for the length of a session, how consistently a connection delivers its speed matters alongside its typical speed. In 2014, we expanded our analysis to include consistency-of-service metrics. Reporting both median measured speed and consistency of service better reflects the performance of a consumer’s Internet access service.

We use two kinds of metrics to reflect the consistency of service delivered to the consumer. First, we report the percentage of advertised speed experienced by at least 80% of panelists during at least 80% of the daily peak usage period (the “80/80 consistent speed” measure). Second, we show the fractions of consumers whose median speeds are greater than 95%, between 80% and 95%, and less than 80% of advertised speeds.
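Both consistency measures can be sketched as below. This is an illustration under assumptions, not the program's implementation: we assume the 80/80 value is obtained by taking a low (20th) percentile of each panelist's own peak-period speed samples and then the same percentile across panelists, and we use linear interpolation between samples; the Technical Appendix governs the actual procedure. The function names and sample data are hypothetical. Setting `pct=70` gives the analogous 70/70 measure.

```python
def percentile(values, p):
    """p-th percentile (0-100), interpolating linearly between sorted samples."""
    xs = sorted(values)
    if not xs:
        raise ValueError("percentile of an empty sequence")
    k = (len(xs) - 1) * p / 100
    lo = int(k)
    hi = min(lo + 1, len(xs) - 1)
    return xs[lo] + (xs[hi] - xs[lo]) * (k - lo)


def consistent_speed_ratio(panel, advertised_mbps, pct=80):
    """pct/pct consistent speed as a fraction of the advertised speed.

    panel maps a panelist id to that panelist's peak-period speed samples (Mbps).
    Step 1: for each panelist, take the speed met or exceeded about pct% of the time.
    Step 2: across panelists, take the value reached by about pct% of them.
    """
    tail = 100 - pct
    per_panelist = [percentile(samples, tail) / advertised_mbps
                    for samples in panel.values()]
    return percentile(per_panelist, tail)


def speed_bands(median_ratios):
    """Fractions of panelists whose median/advertised ratio is >95%, 80-95%, <80%."""
    n = len(median_ratios)
    above = sum(r > 0.95 for r in median_ratios) / n
    middle = sum(0.80 <= r <= 0.95 for r in median_ratios) / n
    below = sum(r < 0.80 for r in median_ratios) / n
    return above, middle, below


# Hypothetical panel on a 100 Mbps tier.
panel = {"a": [90, 90, 90], "b": [100, 100], "c": [110], "d": [120], "e": [130]}
print(round(consistent_speed_ratio(panel, 100), 2))          # 80/80 ratio
print(round(consistent_speed_ratio(panel, 100, pct=70), 2))  # 70/70 ratio
print(speed_bands([1.0, 0.9, 0.7, 0.96]))                    # (0.5, 0.25, 0.25)
```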

C. Use Of Other Performance Metrics

Although download and upload speeds remain the network performance metrics of greatest interest to the typical consumer, we also spotlight two other key network performance metrics in this report: latency and packet loss. These metrics can significantly affect the customer’s overall service experience.

In previous years, we reported only the idle latency of each ISP speed tier, measured when there is minimal or no home network (LAN) traffic for the duration of the measurement, as is the case for our other performance measurements. Latency, however, can increase significantly under heavy traffic that stresses network buffers.[11] With the increase in data traffic within homes and the increased use of latency-sensitive real-time applications such as videoconferencing, the latency under load metric has become increasingly important. This year’s report, therefore, includes results for latency under load, or working latency, in the presence of downstream and upstream traffic.

Packet loss measures the fraction of data packets sent that fail to be delivered to the intended destination. Packet loss may affect the perceived quality of applications that do not incorporate retransmission of lost packets, such as phone calls over the Internet, video chat, certain online multiplayer games, and certain video streaming services. During network congestion, both latency and packet loss typically increase. High packet loss degrades the achievable throughput and reliability of download and streaming applications. However, packet loss of a few tenths of a percent, for example, is common and is unlikely to affect significantly the perceived quality of most Internet applications.

The Internet continually evolves in its architecture, performance, and services. Accordingly, we continue to adapt our measurement methods and analysis methodologies to further improve the collective understanding of the performance characteristics of broadband Internet access. In doing so, we aim to help all interested parties, including consumers, technologists, service providers, and regulators.

2. Summary of Key Findings

A. Most Popular Advertised Service Tiers

The ISP download and upload speed service tiers measured in this report are listed in Table 1. Although upload and download speeds are measured independently and shown separately, ISPs typically offer them as a pair. The service tiers included for reporting represent the top 80% (hence “most popular”) of each ISP’s tiers, based on subscriber numbers. Taken in aggregate, these plans serve the majority of the ISPs’ subscriber base across the United States. While Gigabit tiers are shown in this table, they were not included in each ISP’s weighted performance averages; performance results for Gigabit tiers are reported separately, in anonymized form, in the Appendix. Note also that Table 1 shows a total of 13 different ISP/technology configurations, but only 12 ISP/technology configurations were compared in subsequent charts. CenturyLink’s fiber service was omitted from these comparisons because it contained only a single Gigabit tier, whereas all other ISPs offering fiber service also advertised tiers below Gigabit speeds (e.g., 50 Mbps and above).

Table 1: List of ISP service tiers whose broadband performance was measured in this report.

Note: Cincinnati Bell is now doing business as “Altafiber”.

* Tiers at or near Gigabit speeds previously were included only in charts showing computed average advertised speeds. This year, tiers at or near Gigabit speeds are also included separately in Appendix A. Gigabit tiers have not been included in any of the weighted averages of ISP performance parameters.

^ Tiers that lack sufficient panelists to meet the program’s target sample size.

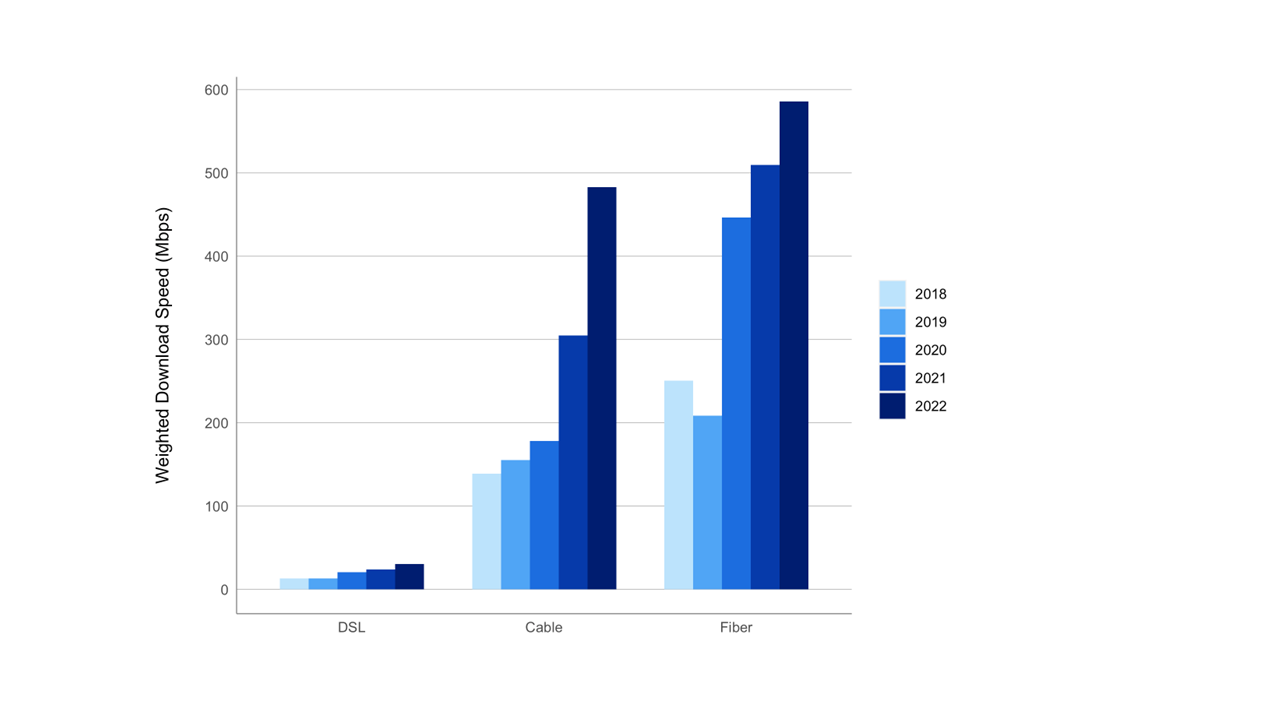

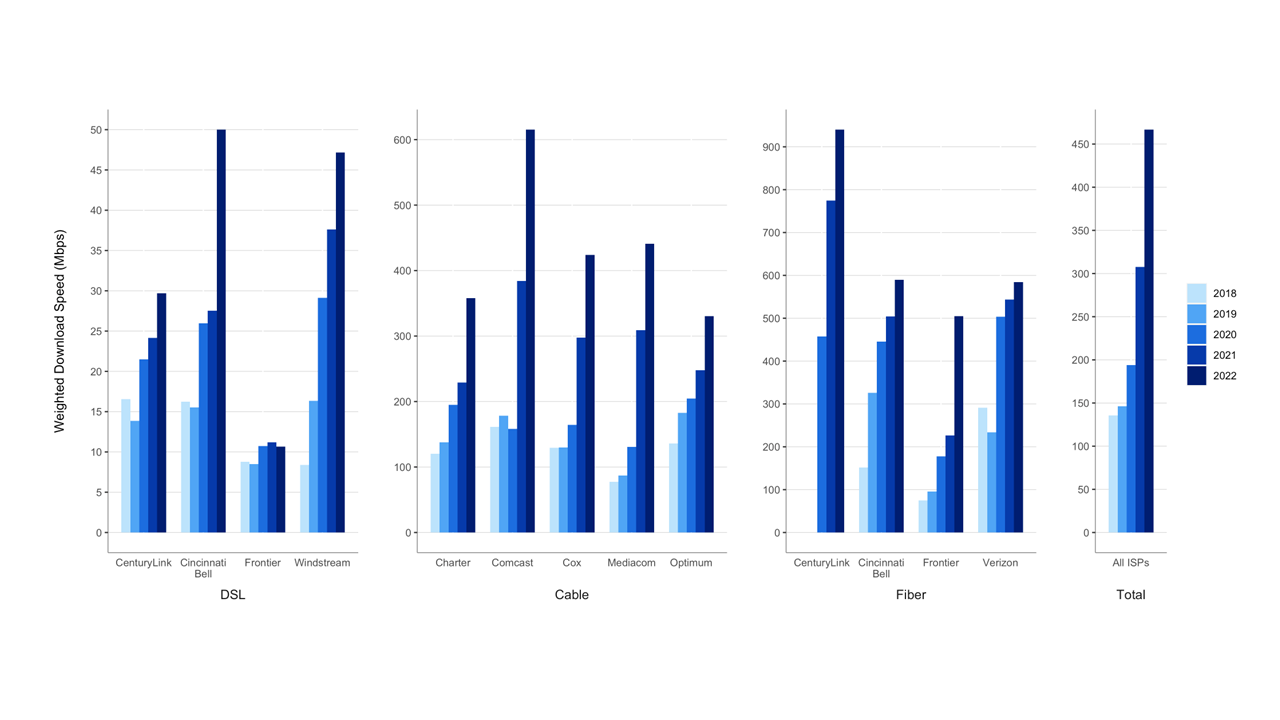

Chart 1 (below) displays the subscriber-weighted mean of the top 80% advertised download speed tiers for each participating ISP over the last five years (September 2018 to September-October 2022), grouped by the access technology used to offer the broadband Internet access service (DSL, cable, or fiber). This chart is intended only to represent the weighted mean of the ISPs’ advertised speeds as one index for tracking the evolution of broadband service; it does not reflect the measured performance of the ISPs. As a composite index across all ISPs in the MBA sample, the weighted mean advertised download speed in September-October 2022 was 467.0 Mbps, a 52% increase over the September-October 2021 mean of 307.7 Mbps (reported in the Twelfth MBA Report) and a 141% increase over the 2020 mean of 193.9 Mbps (reported in the Eleventh MBA Report).
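The percentage increases quoted above follow directly from the reported means. The snippet below is only an arithmetic check; the function name is our own.

```python
def pct_increase(new, old):
    """Percentage change from old to new."""
    return (new / old - 1) * 100


mean_2022 = 467.0  # weighted mean advertised download speed, Sept-Oct 2022 (Mbps)
print(round(pct_increase(mean_2022, 307.7)))  # vs. 2021 (Twelfth Report): 52
print(round(pct_increase(mean_2022, 193.9)))  # vs. 2020 (Eleventh Report): 141
```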

All of the ISPs had higher weighted means of advertised speeds in September-October 2022 as compared to September-October 2021, with the exception of Frontier, which had a slightly reduced weighted mean speed for its DSL technology, but a marked increase in the weighted mean speed for its fiber technology.

Chart 1 also shows that advertised DSL speeds generally lag behind those of cable and fiber technologies. Chart 2 below plots the weighted mean of the top 80% ISP tiers by technology for the last five years. Advertised download speeds increased for all technologies. For the September-October 2022 period, the weighted mean advertised speed for DSL technology was 31 Mbps, which lagged considerably behind the weighted mean advertised download speeds for cable and fiber technologies of 483 Mbps and 586 Mbps, respectively. DSL, cable, and fiber technologies showed a 28%, 58%, and 15% increase, respectively, in weighted mean advertised download speeds from 2021 to 2022.

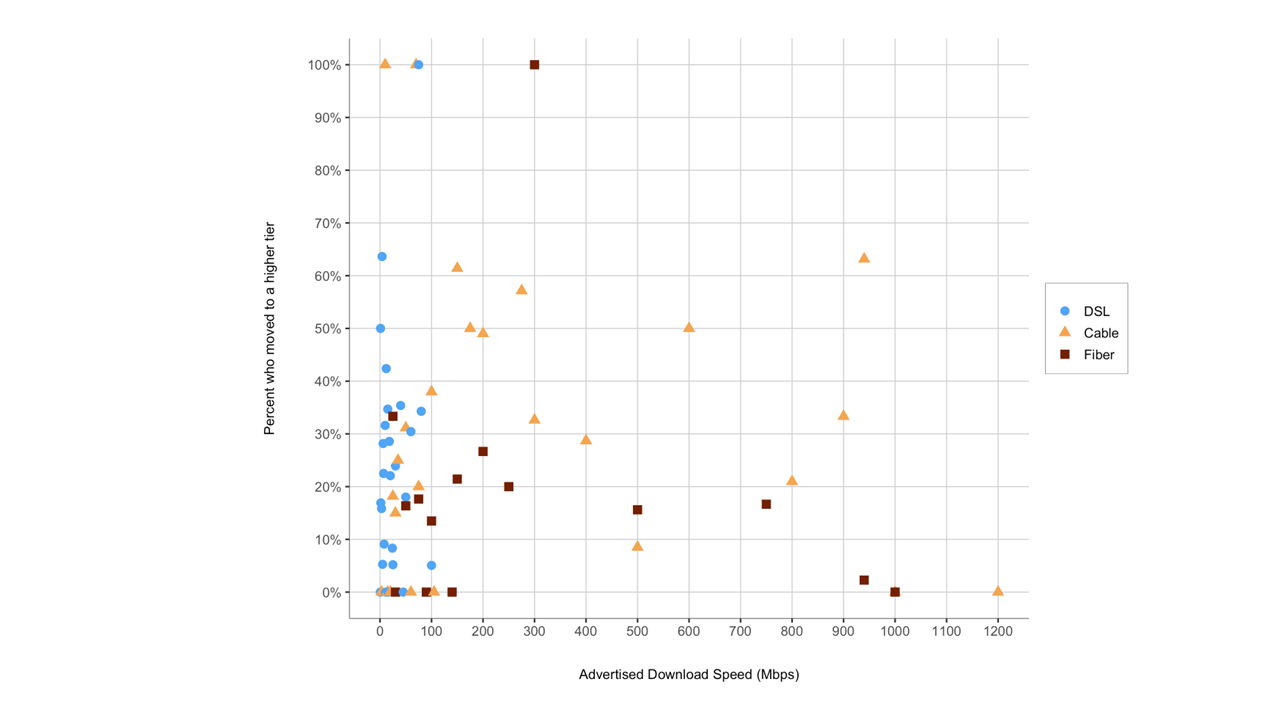

Chart 3 plots the migration of panelists to higher service tiers, by access technology.[12] Specifically, the horizontal axis of Chart 3 partitions the September-October 2021 panelists by the advertised download speed of the service tier to which they subscribed. For each such set of panelists who also participated in the September-October 2022 data collection,[13] the vertical axis of Chart 3 displays the percentage of panelists that had migrated by September-October 2022 to a service tier with a higher advertised download speed. Such a migration could occur in two ways: (1) the panelist may have changed their broadband plan during the intervening year to a service tier with a higher advertised download speed, or (2) the panelist’s ISP may have increased the advertised download speed of the panelist’s existing plan.[14]

Chart 3: Consumer migration to higher advertised download speeds
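The migration percentage plotted in Chart 3 can be sketched as follows, assuming a simple mapping from a hypothetical panelist identifier to the advertised download speed of that panelist's tier in each year. The sketch ignores the per-technology split shown in the chart; that split would apply the same computation to each technology separately. The two causes of migration described above are indistinguishable in this data, since both appear as a higher advertised speed in the later year.

```python
from collections import defaultdict


def migration_rates(prev_speed, curr_speed):
    """Percent of returning panelists, grouped by their earlier advertised
    download speed, whose advertised download speed is higher a year later.

    Both arguments map a panelist id to an advertised download speed (Mbps).
    Panelists missing from the later collection are excluded.
    """
    total = defaultdict(int)
    moved = defaultdict(int)
    for pid, old in prev_speed.items():
        if pid not in curr_speed:
            continue  # did not participate in the later data collection
        total[old] += 1
        if curr_speed[pid] > old:
            moved[old] += 1  # upgraded plan, or ISP raised the tier's speed
    return {speed: 100 * moved[speed] / total[speed] for speed in sorted(total)}


# Hypothetical panelists: p4 did not return in the later year.
prev = {"p1": 100, "p2": 100, "p3": 300, "p4": 300}
curr = {"p1": 200, "p2": 100, "p3": 300}
print(migration_rates(prev, curr))  # {100: 50.0, 300: 0.0}
```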

B. Median download speeds

Advertised download speeds may differ from the actual (measured) speeds that subscribers experience. Some ISPs meet network service objectives more consistently than others; some meet such objectives unevenly across their geographic coverage area. Also, speeds experienced by a consumer may vary during the day if the aggregate user demand during busy hours causes network congestion. Unless stated otherwise, the data used in this report is based on measurements taken during peak usage periods, which we define as 7 p.m. to 11 p.m. local time.[15]

To compute each ISP’s overall performance across all sampled tiers, we determine the ratio of the median speed for each tier to the advertised tier speed and then calculate the mean of these ratios, weighted by the subscriber count per tier. Subscriber counts for the weightings were provided by the ISPs themselves or, if unavailable, taken from FCC Form 477 data.
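A minimal sketch of this computation, assuming each tier is summarized as a (median speed, advertised speed, subscriber count) tuple; the function name and example values are hypothetical.

```python
def weighted_speed_ratio(tiers):
    """Subscriber-weighted mean of (median measured speed / advertised speed).

    tiers: iterable of (median_mbps, advertised_mbps, subscribers) tuples.
    Returns a fraction; multiply by 100 for a percentage of advertised speed.
    """
    weighted_sum = 0.0
    total_subscribers = 0
    for median_mbps, advertised_mbps, subscribers in tiers:
        weighted_sum += subscribers * (median_mbps / advertised_mbps)
        total_subscribers += subscribers
    if total_subscribers == 0:
        raise ValueError("no subscribers to weight by")
    return weighted_sum / total_subscribers


# Hypothetical ISP: a 100 Mbps tier measuring 110 Mbps (3 subscribers)
# and a 200 Mbps tier measuring 180 Mbps (1 subscriber).
ratio = weighted_speed_ratio([(110, 100, 3), (180, 200, 1)])
print(f"{ratio:.0%}")  # 105%
```

Averaging ratios rather than raw speeds puts tiers of very different speeds on a common scale, so a fast tier does not dominate an ISP's result merely because its absolute numbers are larger.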

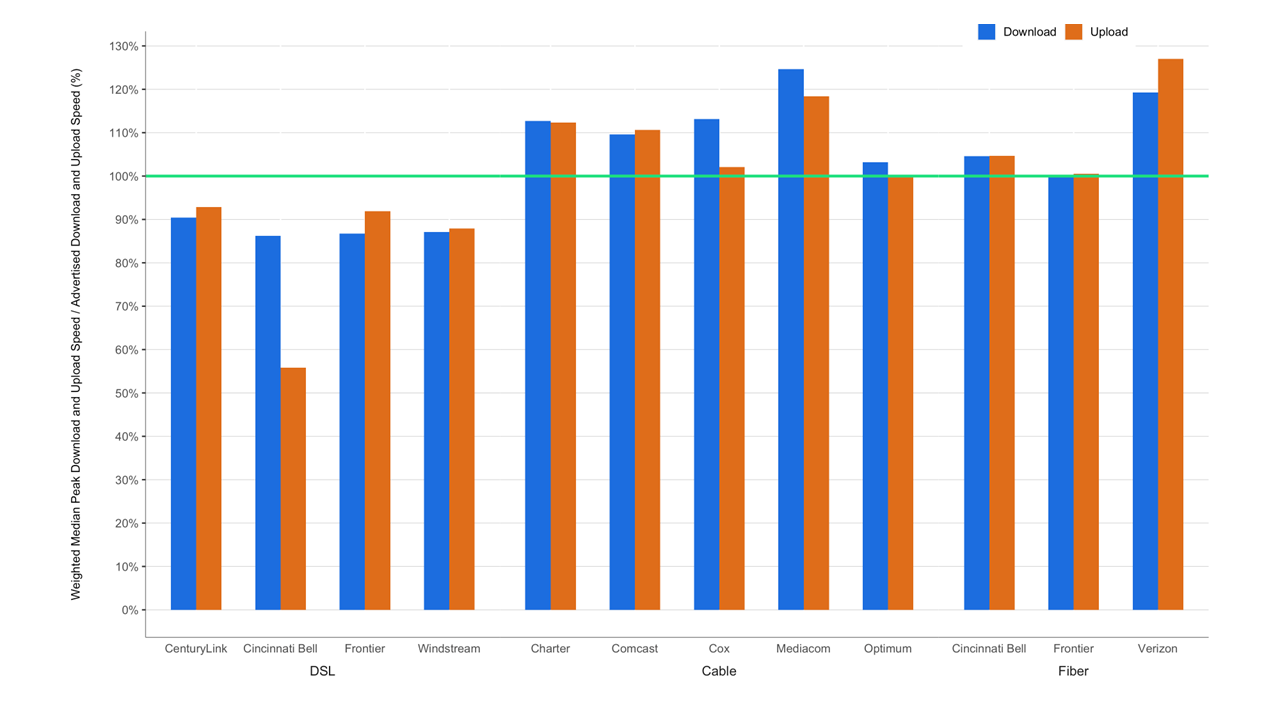

Chart 4 shows the ratio of the measured median download and upload speeds experienced by an ISP’s subscribers to that ISP’s advertised download and upload speeds, weighted by the subscribership numbers for the tiers. The measured speeds experienced by the subscribers of most ISPs were close to or exceeded the advertised speeds. DSL broadband ISPs, however, continue to advertise “up-to” speeds that, on average, exceed the actual speeds experienced by their subscribers. Of the 12 ISP/technology configurations shown, eight met or exceeded their advertised download speeds, while the remaining four delivered between 86% and 90% of their advertised download speeds. Results for Gigabit, or near-Gigabit, tiers are shown separately in Appendix A.

Chart 4: The ratio of weighted median speed (download and upload) to advertised speed for each ISP.

C. Variations In Performance/Measured speeds

As discussed earlier, actual speeds experienced by individual consumers may vary by location and time of day.

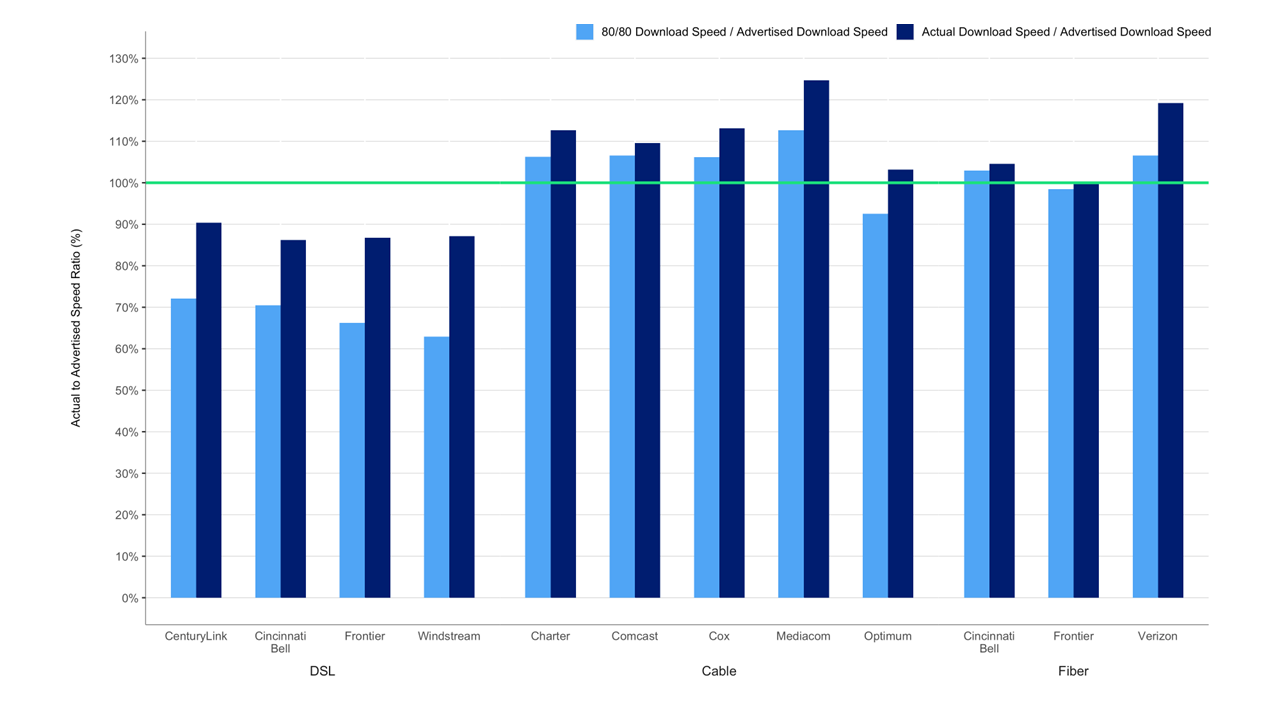

In addition to variations based on a subscriber’s location, speeds experienced by a consumer may fluctuate during the day. This typically is caused by increased traffic demand and the resulting stress on different parts of the network infrastructure. To examine this aspect of performance, we use a metric called “80/80 consistent speed.” This metric is designed to assess temporal and spatial variations in measured values of a user’s download speed.[16] While consistency of speed is intrinsically valuable, its impact on consumers will depend on their usage patterns and needs. For example, a good consistency-of-speed measure is likely to indicate a higher-quality experience for Internet users consuming video content.

Chart 5 summarizes, for each ISP, the ratio of 80/80 consistent median download speed to advertised download speed and, for comparison, the ratio of median download speed to advertised download speed shown previously in Chart 4. For all participating ISPs, the 80/80 ratio was lower than the median ratio, owing to congestion periods when median download speeds were lower than the overall average. The size of the difference between these two ratios indicates how variable the median download speed is. When the difference is small, the median download speed is fairly insensitive to both geography and time. When the difference is large, median download speed varies more, either across different locations or across different times of the peak usage period at the same location.

Chart 5: The ratio of 80/80 consistent median download speed to advertised download speed.

As can be seen in Chart 5, cable and fiber ISPs generally performed better than DSL ISPs in providing consistent speeds. Most customers using cable and fiber technologies experienced fairly consistent median download speeds; i.e., these ISPs delivered 100% or more of the advertised speed during the peak usage period to at least 80% of their panelists for at least 80% of the time.

D. Latency

The round-trip latency between any two points in the network is the time it takes for a packet to travel from one point to the other and back. It has a fixed component, which depends on the distance between the source and destination and on the transmission speed and technology along the path, and a variable component, which arises from potential changes in routing and from queuing delay that can increase as the network path becomes congested. The MBA program measures latency as the round-trip time between the consumer’s home and the temporally closest[17] measurement server.
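As a simplified illustration of these components (our own decomposition, not the MBA program's measurement model), the round-trip time can be written as a propagation term, a transmission term, and a queuing term. Here $d$ is the one-way path length, $v$ the signal propagation speed (roughly $2 \times 10^{8}$ m/s in optical fiber), $t_{\text{tx}}$ the total time to transmit the packet onto each link in both directions, which shrinks as link speed grows, and $Q$ the total queuing delay:

$$
\mathrm{RTT} \approx \underbrace{\frac{2d}{v} + t_{\text{tx}}}_{\text{fixed}} + \underbrace{Q}_{\text{variable}}
$$

For example, a 1,000 km fiber path contributes about

$$
\frac{2 \times 10^{6}\ \text{m}}{2 \times 10^{8}\ \text{m/s}} = 10^{-2}\ \text{s} = 10\ \text{ms}
$$

of round-trip latency from propagation alone, before any transmission or queuing delay is added.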

We test for two different types of latency: idle latency and latency under load. In both cases, the test measures the round-trip time of small UDP packets between the router and the target test server. Each packet consists of an 8-byte sequence number and an 8-byte timestamp. If a packet is not received back within three seconds of sending, it is treated as lost (see the packet loss section below). For idle latency, the connection to the test server is established only when no other traffic is detected in or out of the subscriber’s home. The latency under load test runs for the duration of the 10-second downstream and upstream speed tests. During the latency under load test, packets are sent more frequently (10 packets per second), whereas for idle latency measurements 2,000 packets are sent every hour, i.e., fewer than one packet (about 0.56 packets) per second. The test records the number of packets sent each hour, the average round-trip time of these packets, and the total number of packets lost. The test uses the 99th percentile when calculating the minimum, maximum, and average results on the router.
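The probe format and loss rule described above can be sketched as follows. The byte layout (two unsigned 64-bit integers in network byte order), the nanosecond timestamp, and our reading of the 99th-percentile step as discarding the slowest 1% of round-trip times before computing the minimum, mean, and maximum are all assumptions for illustration; the names are hypothetical, and this is not the measurement client's actual code.

```python
import struct
import time

# Assumed layout: 8-byte sequence number + 8-byte timestamp, network byte order.
PROBE = struct.Struct("!QQ")
LOSS_TIMEOUT_S = 3.0           # no reply within 3 s => counted as lost
IDLE_PACKETS_PER_HOUR = 2000   # idle latency probing rate
LOADED_PACKETS_PER_S = 10      # probing rate during the 10-second speed tests


def make_probe(seq, ts_ns=None):
    """Pack a 16-byte probe; the timestamp defaults to a monotonic clock in ns."""
    return PROBE.pack(seq, time.monotonic_ns() if ts_ns is None else ts_ns)


def summarize(sent, replies):
    """Summarize one batch of probes.

    sent maps seq -> send time (s); replies maps seq -> receive time (s).
    Returns (lost, min_ms, mean_ms, max_ms); the RTT statistics are None
    if every probe was lost.
    """
    rtts, lost = [], 0
    for seq, t_send in sent.items():
        t_recv = replies.get(seq)
        if t_recv is None or t_recv - t_send > LOSS_TIMEOUT_S:
            lost += 1
        else:
            rtts.append((t_recv - t_send) * 1000.0)
    if not rtts:
        return lost, None, None, None
    rtts.sort()
    kept = rtts[: len(rtts) - len(rtts) // 100]  # drop slowest 1% (assumption)
    return lost, kept[0], sum(kept) / len(kept), kept[-1]


print(len(make_probe(1)))                      # 16
print(round(IDLE_PACKETS_PER_HOUR / 3600, 2))  # 0.56 packets per second
print(LOADED_PACKETS_PER_S * 10)               # 100 probes per 10-second test
print(summarize({0: 0.0, 1: 1.0, 2: 2.0}, {0: 0.020, 1: 1.030}))
```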

Chart 6 shows the median idle latency for each participating ISP. In general, higher-speed service tiers have lower idle latency, as it takes less time to transmit each packet. The measured median latencies ranged from 7 ms to 34 ms.

Measured idle latencies for DSL (23 ms to 34 ms) were higher than those for cable (12 ms to 24 ms), and idle latencies were lowest for fiber ISPs (7 ms to 14 ms).

Chart 7 shows a comparison of idle latency[18] with latency under downstream traffic load as well as under upstream traffic load for different ISPs (shown anonymized) based on the weighted mean of latencies across all the measured tiers of each ISP. As this chart illustrates, the latency under downstream traffic load generally is significantly higher than idle latency, with a more pronounced difference for DSL subscribers. Individual ISP tiers’ idle latencies are provided in Chart 22.

E. Packet Loss

Packet loss is the percentage of packets that are sent by a source but not received at the intended destination. The most common causes of packet loss are congestion leading to buffer overflows or active queue management along the network path. Alternatively, high latency might lead to a packet being counted as lost if it does not arrive within a specified window. A small amount of packet loss is expected, and indeed packet loss commonly is used by some Internet protocols such as TCP to infer Internet congestion and to adjust the sending rate to mitigate the offered load, thus lessening the contribution to congestion and the risk of lost packets. The MBA program uses an active UDP-based packet loss measurement method and considers a packet lost if it is not returned within 3 seconds.

Chart 8 shows the average peak-period packet loss for each participating ISP, separated into three groups based on the technology used for the access network. The average peak-period packet loss performance is represented in three bands based on commonly accepted packet loss thresholds for specific services and on typical packet loss limits in provider Service Level Agreements (SLAs) for enterprise services (consumer offerings typically are not subject to SLAs). Separating the packet loss performance into these three bands gives a more granular view of the packet loss performance of each ISP’s network. The breakpoints for the three bands are: up to 0.4% packet loss, 0.4% to 1% packet loss, and over 1% packet loss. According to industry publications and international (ITU) standards, 1% packet loss is commonly accepted as the point at which highly interactive applications such as Voice over Internet Protocol (VoIP) experience significant degradation in quality.[19] The value of 0.4%, roughly the middle ground between this 1% threshold and the highly desirable performance of 0% packet loss described in many documents for VoIP, generally is supported by major ISPs’ network SLAs. Indeed, most SLAs support 0.1% to 0.3% packet loss guarantees,[20] but these are generally for enterprise-level services carrying business-critical applications that require service guarantees.

Chart 8: Percentage of consumers whose peak-period packet loss was less than 0.4%, between 0.4% to 1%, and greater than 1%.
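Assigning panelists to the three packet-loss bands can be sketched as follows. The function names are hypothetical, and treating losses of exactly 0.4% and exactly 1% as part of the middle band is our assumption, since the band edges are described only approximately.

```python
def loss_rate_pct(sent, received):
    """Packet loss as a percentage of the packets sent."""
    if sent <= 0:
        raise ValueError("no packets sent")
    return 100.0 * (sent - received) / sent


def loss_bands(loss_rates_pct):
    """Percent of panelists whose average peak-period loss is below 0.4%,
    from 0.4% to 1% (inclusive, by assumption), or above 1%."""
    n = len(loss_rates_pct)
    low = sum(r < 0.4 for r in loss_rates_pct)
    high = sum(r > 1.0 for r in loss_rates_pct)
    mid = n - low - high
    return tuple(100.0 * k / n for k in (low, mid, high))


# Hypothetical panel of four subscribers.
print(loss_rate_pct(2000, 1996))          # 0.2
print(loss_bands([0.1, 0.2, 0.5, 1.5]))   # (50.0, 25.0, 25.0)
```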

F. Web browsing performance

The MBA program also conducts a specific test to gauge web browsing performance. The web browsing test accesses nine popular websites that include text and images, but not streaming video. The time required to download a webpage depends on many factors, including the consumer’s in-home network, the download speed within an ISP’s network, the web server’s speed, congestion in other networks outside the consumer’s ISP’s network (if any), and the time required to look up the network address of the web server. Only some of these factors are under the control of the consumer’s ISP. Chart 9 displays the average webpage download time as a function of the advertised download speed. As this chart shows, webpage download time decreased as download speed increased, from about 12 seconds at 1.5 Mbps to about 1.7 seconds at 50 Mbps. Subscribers to service tiers above 50 Mbps saw only slightly shorter webpage download times, falling to 1.3 seconds at 250 Mbps. Beyond 250 Mbps, webpage download times decreased only by minor amounts. These download times assume that only a single user is using the Internet connection when the webpage is downloaded; our testing does not account for other common scenarios, such as multiple users in a household simultaneously using the connection to view web pages or for other applications such as real-time gaming or video streaming.

Chart 9: Average webpage download time, by advertised download speed.

3. Methodology

A. Participants

Ten ISPs actively participated in the Fixed MBA program in September-October 2022. They were:

- Altice Optimum

- CenturyLink

- Charter Communications

- Cincinnati Bell

- Comcast

- Cox Communications

- Frontier Communications Company

- Mediacom Communications Corporation

- Verizon

- Windstream Communications

The methodologies and assumptions underlying the measurements described in this Report are reviewed at meetings that are open to all interested parties; these meetings are documented in public ex parte letters filed in GN Docket No. 12-264. Policy decisions regarding the MBA program are discussed at these meetings prior to adoption, and involve issues such as inclusion of tiers, length of test periods, mitigation of operational issues affecting the measurement infrastructure, and terms of use for notification to panelists. Participation in the MBA program is open and voluntary. Participants include members of academia, consumer equipment vendors, telecommunications vendors, network service providers, consumer policy groups, and, through July 2023, the FCC’s contractor for this project, SamKnows. In 2022-2023, participants at these meetings (collectively and informally referred to as “the FCC MBA Broadband Collaborative”) included the parties above and the following additional organizations:

- AT&T

- StackPath

- NCTA – The Internet & Television Association (“NCTA”)

- United States Telecom Association (“US Telecom”)

Participants have contributed to the quality of this program and have provided valuable input to FCC decisions for this program. Initial proposals for test metrics and testing platforms were discussed and critiqued within the broadband collaborative. We thank all the participants for their continued contributions to the MBA program.

B. Measurement process

The measurements that provided the underlying data for this report were conducted between MBA measurement clients and MBA measurement servers. The measurement clients (i.e., whiteboxes) were situated in the homes of 4,392 panelists, each of whom received service from one of the 10 evaluated ISPs. The evaluated ISPs collectively represent over 70% of U.S. residential broadband Internet connections. After the measurement data was processed (as described in greater detail in the Technical Appendix), test results from 2,025 panelists were used in this report.[21]

The measurement servers used by the MBA program were hosted by StackPath and were located in ten cities across the United States (some cities with multiple locations), each near a point of interconnection between the ISP’s network and the network on which the measurement server resides.

The measurement clients collected data throughout the year, and the data are available as described below. For the purpose of this 13th Report, only the data collected on September 12-13 and 16-21, and October 4-6, 11-13, 15-17, and 19-31 in 2022, referred to as the “September-October 2022 reporting period,” were analyzed to generate the charts and conclusions.[22]
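The reporting period is a set of non-contiguous, inclusive date ranges. A minimal sketch of building that set, and checking that it contains the 30 days cited in footnote 1, might look like this (the helper names are hypothetical):

```python
from datetime import date, timedelta


def date_range(start: date, end: date) -> list[date]:
    """All calendar dates from start to end, inclusive."""
    return [start + timedelta(days=i) for i in range((end - start).days + 1)]


# Inclusive ranges listed for the September-October 2022 reporting period.
RANGES = [
    (date(2022, 9, 12), date(2022, 9, 13)),
    (date(2022, 9, 16), date(2022, 9, 21)),
    (date(2022, 10, 4), date(2022, 10, 6)),
    (date(2022, 10, 11), date(2022, 10, 13)),
    (date(2022, 10, 15), date(2022, 10, 17)),
    (date(2022, 10, 19), date(2022, 10, 31)),
]

REPORTING_DAYS = sorted({d for start, end in RANGES for d in date_range(start, end)})
print(len(REPORTING_DAYS))  # 30
```

Keeping the periods as explicit ranges makes the excluded dates (for example, September 14-15) easy to audit.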

Broadband performance varies diurnally. At peak hours, network traffic increases as more people access the Internet, leading to a greater potential for network congestion and, hence, degraded overall performance. Unless otherwise stated, this Report focuses on performance during the peak usage period, which is defined as weeknights from 7:00 p.m. to 11:00 p.m. local time at the subscriber’s location. Focusing on the peak usage period provides the most useful information because it demonstrates what performance users can expect when Internet access services in their local area experience the highest demand. As shown in the Annex to this Report, the peak period remained the same despite the increased number of people working from home due to the pandemic.
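A minimal sketch of the peak-period filter, assuming each test timestamp has already been converted to the subscriber’s local time and that “weeknights” means Monday through Friday, with the window treated as 7:00 p.m. inclusive to 11:00 p.m. exclusive. The function name is hypothetical.

```python
from datetime import datetime

PEAK_START_HOUR = 19  # 7:00 p.m. local time (inclusive)
PEAK_END_HOUR = 23    # 11:00 p.m. local time (exclusive)


def is_peak(local_ts: datetime) -> bool:
    """True if a test timestamp, already in the subscriber's local time, is in the peak period.

    Assumes "weeknights" means Monday-Friday.
    """
    is_weekday = local_ts.weekday() < 5  # Monday=0 ... Friday=4
    return is_weekday and PEAK_START_HOUR <= local_ts.hour < PEAK_END_HOUR
```

For example, a test at 8:15 p.m. on Wednesday, October 12, 2022 counts as peak, while one at 8:00 p.m. on Saturday, October 15, 2022 does not.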

Our methodology focuses on the performance of each participating ISP’s access network. The metrics discussed in this Report are derived from active measurements, i.e., test-generated traffic flowing between a standalone measurement client, located within a panelist’s home, and a measurement server, located outside the ISP’s network. For each panelist, the tests periodically re-determine, empirically, which measurement server has the lowest latency to the measurement client. Thus, the metrics measure performance along the path followed by the measurement traffic within each ISP’s network, through points of interconnection between the ISP’s network and the network on which the chosen measurement server is located. The service performance that a consumer experiences, however, could differ from our measured values for any of the following reasons.
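The server choice described above amounts to picking the lowest-latency candidate rather than the geographically nearest one. A minimal sketch, assuming each candidate server has a few round-trip-time samples and using their median to damp one-off spikes (an assumption; the program’s exact rule is described in the Technical Appendix). Server names and values are hypothetical.

```python
from statistics import median


def select_server(rtt_samples_ms: dict[str, list[float]]) -> str:
    """Return the candidate server whose median round-trip time is lowest.

    rtt_samples_ms maps a (hypothetical) server name to RTT samples in ms.
    Servers with no samples are skipped.
    """
    usable = {name: s for name, s in rtt_samples_ms.items() if s}
    if not usable:
        raise ValueError("no RTT samples for any candidate server")
    return min(usable, key=lambda name: median(usable[name]))


candidates = {
    "server-a": [18.2, 17.9, 40.1],  # one slow sample does not disqualify it
    "server-b": [24.5, 25.0, 24.8],
    "server-c": [31.0, 30.2, 29.9],
}
print(select_server(candidates))  # server-a
```

Re-running this choice periodically lets the selection follow routing changes without any notion of geographic distance.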

First, as noted above, each test instance measures performance to a single measurement server rather than to multiple servers. This is consistent with the approach chosen by most network measurement tools, although applications may behave differently. By comparison, the average web page may load its content from many endpoints.

Second, bottlenecks or congestion points in the entirety of the path traversed by consumer application traffic also impact a consumer’s perception of Internet service performance. These bottlenecks may exist at various points: within the ISP’s network, beyond its network (depending on the network topology encountered en route to the traffic destination), or in the consumer’s home on the Wi-Fi used to connect to the in-home access router. Beyond this, the device used by the customer to access the Internet may also impact perceived performance.

Third, although the MBA methodology, system, and measurements are designed to minimize confounding factors outside an access ISP’s control,[23] service within the home often is shared simultaneously among multiple users and applications; as a result, individual connections may not experience the full performance delivered to the home. Further, connections in the home may not have sufficient capacity to support peak loads. For example, some Wi-Fi connections may be limited to tens of Mbps, which would be the maximum achievable throughput to a specific device using that type of connection, even if the consumer subscribes to a service delivering, e.g., hundreds of Mbps to the home.[24]

Fourth, consumers’ perception of ISP performance typically depends on the set of applications they utilize. The overall performance of an application depends not only on the network performance (i.e., raw speed, latency, or packet loss), but also on the application’s architecture and implementation and the platform (operating system and hardware) on which it runs. While network performance is considered in this Report, application performance is not. To the extent possible, the MBA focuses on performance within an ISP’s network.

C. Tests And Performance Metrics

This Report is based on the following measurement tests:

- Download speed: This test measures the download speed of each whitebox over a 10-second period, once per hour during peak hours (7 p.m. to 11 p.m.) and once during each of the following periods: midnight to 6 a.m., 6 a.m. to noon, and noon to 6 p.m. The download speed results from each whitebox are averaged across the measurement period, and the median of these average speeds across all whiteboxes on a given tier is used as the median measured download speed for that tier. The overall ISP download speed is computed by combining the per-tier medians, weighted by the tiers’ subscriber counts.

- Upload speed: This test measures the upload speed of each whitebox over a 10-second period, the same measurement interval as the download speed test. The upload speed measured in the last five seconds of the 10-second interval is retained; the results of each whitebox are then averaged over the measurement period, and the median of these average speeds across all whiteboxes on a tier is used as the median upload speed for that service tier. The ISP upload speed is computed in the same manner as the download speed.

- Latency and packet loss: Idle latency[25] tests measure the round-trip times for approximately 2,000 packets per hour sent at randomly distributed intervals. In contrast, working latency (also known as “latency under load”) measurements run during the 10-second download or upload speed tests and send packets more frequently (10 packets per second). Round-trip times under three seconds are used to determine the latency; if the whitebox does not receive a response within three seconds, the packet is counted as lost. SamKnows test servers are hosted at 10 major public Internet exchange points (IXPs) in or near major metropolitan areas. Using these servers for measurements provides a consistent and objective basis of comparison across all ISPs for the MBA metrics specified in the Technical Appendix. However, ISPs also serve communities that may be geographically distant from the IXP locations used.[26]

- Web browsing: The web browsing test, performed once every hour, measures the total time it takes to request and receive webpages, including text and images, from nine popular websites. The measurement includes the time required to translate each web server’s name (taken from the URL) into its network (IP) address.
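The per-tier and ISP-level aggregation described in the download speed item (and in footnote 6) can be sketched as below. This is a minimal illustration under stated assumptions: each whitebox’s results are averaged, the median of those averages is taken across the whiteboxes on a tier, and tier medians are combined as a subscriber-weighted average, following footnote 6. The data layout, names, and numbers are hypothetical; the upload test would apply the same steps to its retained last-five-second results.

```python
from statistics import mean, median


def tier_median(whitebox_results: dict[str, list[float]]) -> float:
    """Median, across the whiteboxes on one tier, of each whitebox's mean speed (Mbps)."""
    per_box_means = [mean(samples) for samples in whitebox_results.values() if samples]
    return median(per_box_means)


def isp_speed(tiers: dict[str, tuple[dict[str, list[float]], int]]) -> float:
    """Subscriber-weighted average of per-tier medians.

    tiers maps a tier name to (whitebox results, subscriber count).
    """
    total_subs = sum(subs for _, subs in tiers.values())
    return sum(tier_median(boxes) * subs for boxes, subs in tiers.values()) / total_subs


# Hypothetical data: two tiers, three whiteboxes each, two tests per whitebox.
example = {
    "100 Mbps": ({"wb1": [95, 105], "wb2": [110, 112], "wb3": [80, 90]}, 3000),
    "300 Mbps": ({"wb4": [290, 310], "wb5": [320, 330], "wb6": [305, 315]}, 1000),
}
print(isp_speed(example))  # 152.5
```

Taking the median across whiteboxes, rather than the mean, keeps a single unusually fast or slow home from moving the tier figure much.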

This Report focuses on three key performance metrics of interest to consumers of broadband Internet access service, as these metrics are likely to influence how well a wide range of consumer applications work: download and upload speed, latency, and packet loss. Download and upload speeds also are the primary network performance characteristics advertised by ISPs. However, as discussed above, the performance observed by a user in any given circumstance depends not only on their ISP’s network, but also on the performance of other parts of the Internet and of the application platform used. The Technical Appendix to this Report describes each test in more detail, including additional tests not utilized for the purpose of compiling this Report.
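As a companion to the latency and packet loss test described in the list of tests, the following sketch turns one whitebox’s probe results into a latency figure and a packet loss percentage using the three-second cutoff. It assumes each probe is recorded as a round-trip time in seconds, or None when no reply arrived, and it summarizes latency with a mean, which is an assumption. The function name is hypothetical.

```python
from statistics import mean
from typing import Optional

TIMEOUT_S = 3.0  # replies not received within three seconds count as lost


def latency_and_loss(rtts_s: list[Optional[float]]) -> tuple[Optional[float], float]:
    """Return (mean RTT in ms of answered probes, packet loss in %).

    Each entry is a probe's round-trip time in seconds, or None if no reply arrived.
    """
    if not rtts_s:
        raise ValueError("no probes")
    answered = [r for r in rtts_s if r is not None and r < TIMEOUT_S]
    loss_pct = 100.0 * (len(rtts_s) - len(answered)) / len(rtts_s)
    avg_ms = mean(answered) * 1000 if answered else None
    return avg_ms, loss_pct


# Hypothetical probes: one never answered, one answered too late to count.
print(latency_and_loss([0.020, 0.022, None, 0.018, 3.5]))
```

Counting late replies as lost, rather than as very high latencies, keeps a few stragglers from dominating the latency figure.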

D. MBA data coverage and quality

The MBA panel sample used in the reporting period is validated (i.e., the upload and download tiers of the whiteboxes are verified with providers), and the measurement results are carefully inspected to eliminate any statistical outliers. This produces a “validated data set” that accompanies each report. The Validated Data Set[27] on which this Report is based, as well as the full results of all tests, are available at https://www.fcc.gov/measuring-broadband-america. For interested parties, because tests are run 24x7x365, we also provide raw data for the reference month and the rest of the year.[28] These data are termed “raw” because validation cross-checks are performed only for the test period used for the report, so subscriber tier changes may be missed. Previous reports of the MBA program, as well as the data used to produce them, also are available at the site listed above.

4. Test Results

A. Most Popular Advertised Service Tiers

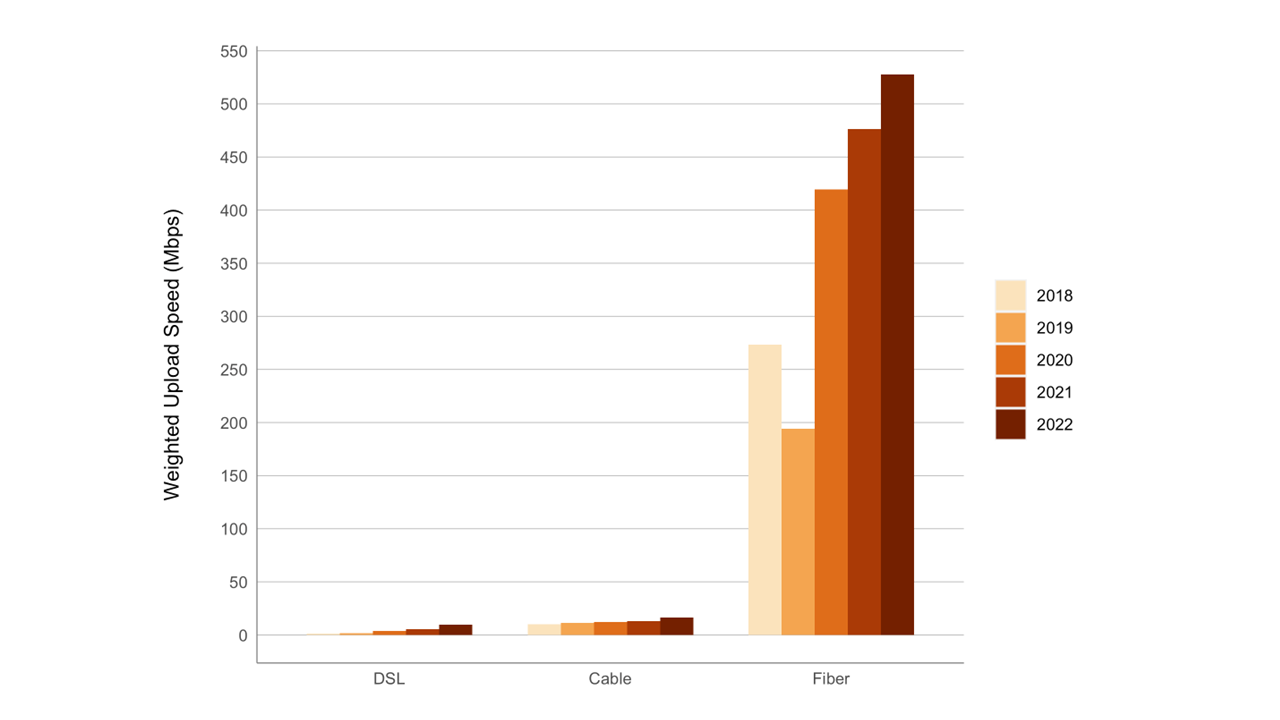

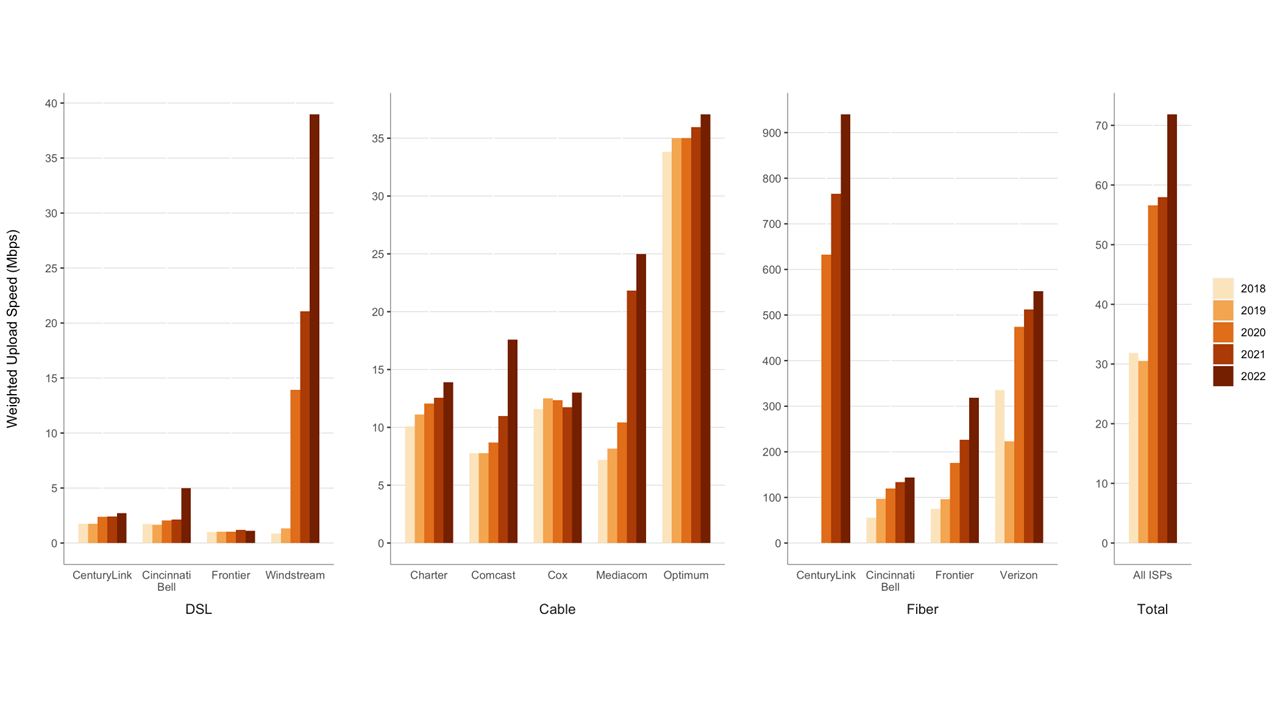

Chart 1 above summarizes the weighted mean of the advertised download speed offerings[29] for each participating ISP over the last five years (September 2018 to September-October 2022), where the weighting is based upon the number of subscribers to each tier, grouped by the access technology used to offer the broadband Internet access service (DSL, cable, or fiber). Only the tiers making up the top 80% of each participating ISP’s subscribers were included. Chart 10.1 below shows the corresponding weighted mean of the advertised upload speeds among the measured ISPs. The computed weighted mean of the advertised upload speed of all the ISPs is 71.8 Mbps, a 24% increase from 58 Mbps in 2021.
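A minimal sketch of the two steps in this paragraph: keeping the tiers that make up the top 80% of an ISP’s subscribers, then taking the subscriber-weighted mean of their advertised speeds. The cut-off rule (add tiers from largest to smallest until at least 80% of subscribers are covered) is an assumption, as are the names and sample numbers; the last function checks the stated 24% increase from 58 Mbps to 71.8 Mbps.

```python
def top_tiers(subscribers: dict[str, int], share: float = 0.8) -> list[str]:
    """Tiers, largest first, added until they cover at least `share` of subscribers.

    The cut-off rule is an assumption; the report says only that the tiers
    making up the top 80% of each ISP's subscribers were included.
    """
    total = sum(subscribers.values())
    chosen: list[str] = []
    covered = 0
    for tier in sorted(subscribers, key=subscribers.get, reverse=True):
        if covered >= share * total:
            break
        chosen.append(tier)
        covered += subscribers[tier]
    return chosen


def weighted_mean_speed(advertised_mbps: dict[str, float],
                        subscribers: dict[str, int]) -> float:
    """Subscriber-weighted mean advertised speed over the included tiers."""
    tiers = top_tiers(subscribers)
    weight = sum(subscribers[t] for t in tiers)
    return sum(advertised_mbps[t] * subscribers[t] for t in tiers) / weight


def pct_increase(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return 100.0 * (new - old) / old


# Hypothetical ISP: the two largest tiers cover 90% of subscribers, crossing 80%.
subs = {"300/20": 6000, "150/10": 3000, "50/5": 600, "1000/35": 400}
adv = {"300/20": 300.0, "150/10": 150.0, "50/5": 50.0, "1000/35": 1000.0}
print(top_tiers(subs))                  # ['300/20', '150/10']
print(weighted_mean_speed(adv, subs))   # 250.0
print(round(pct_increase(58.0, 71.8)))  # 24
```

The same functions apply to upload speeds by passing advertised upload rates instead of download rates.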

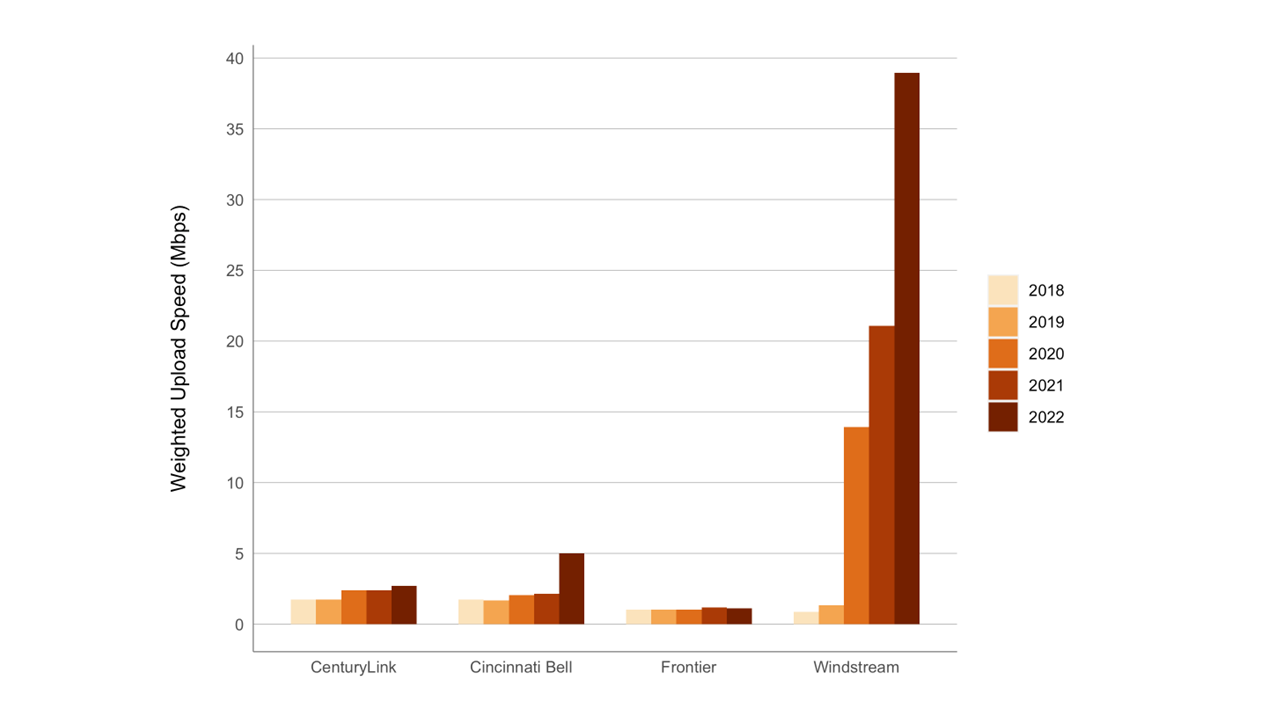

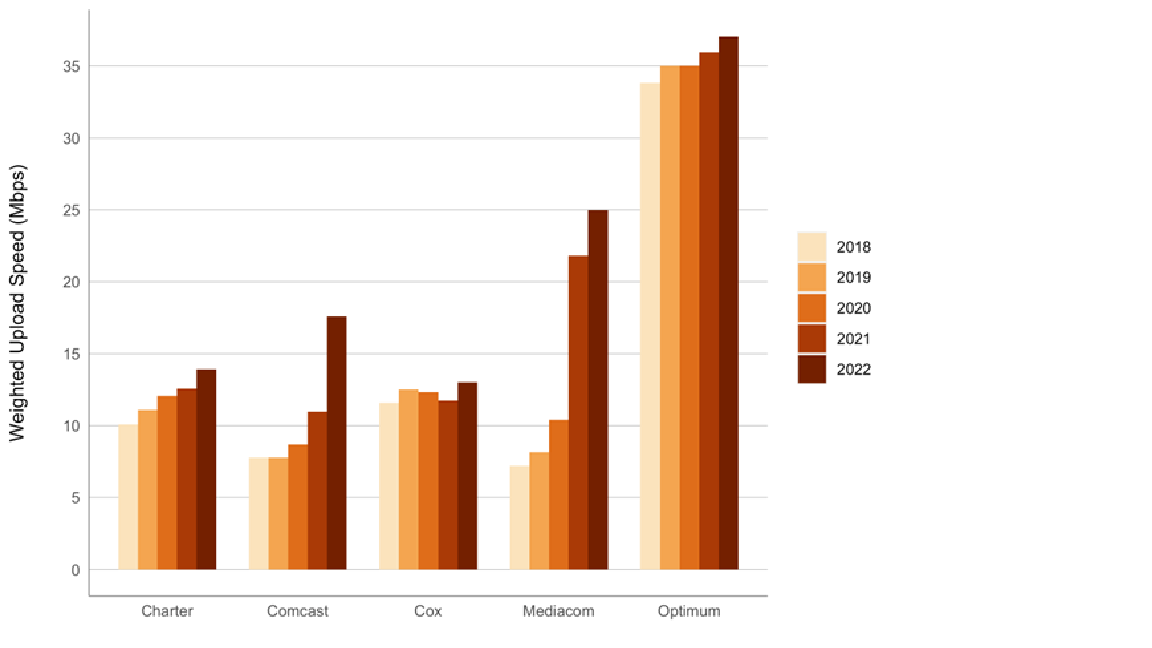

Because fiber’s advertised upload speeds are so much higher, it is difficult to discern the variations in speed for DSL and cable technologies when all three are drawn to the same scale. Therefore, separate Charts 10.2 and 10.3 are included here, providing the weighted mean upload speeds for ISPs using DSL and cable technologies, respectively, on a more granular scale.

Chart 10.2: Weighted mean advertised upload speed offered by ISPs using DSL technology.

Chart 10.3: Weighted mean advertised upload speed offered by ISPs using Cable technology.

Chart 11 compares the weighted mean of the advertised upload speeds by technology over the last five years (September 2018 to September-October 2022), taken as a composite across all ISPs. The weighted mean advertised upload speed for fiber was 528 Mbps, far higher than for other technologies. Consequently, to show the trend in upload speeds for DSL and cable technologies, the results for these technologies are plotted on a different scale than those for fiber. As this chart shows, all technologies had higher advertised upload speeds in 2022 than in previous years, although the rates of increase differed. Compared with the previous year’s (2021) results, advertised upload speeds in 2022 increased 68% for DSL, to 9.5 Mbps; 30% for cable, to 16.6 Mbps; and 11% for fiber, to 528 Mbps.

Observing both the download and upload speeds, it is clear that fiber service tiers generally are symmetric in their actual upload and download speeds. This is because fiber technology has significantly more capacity than other technologies and can be engineered to have symmetric upload and download speeds. For other technologies with more limited capacity, more capacity is usually allocated to download speeds than to upload speeds, typically in ratios ranging from 5:1 to 10:1.

Chart 11: Weighted mean advertised upload speed among the top 80% service tiers based on technology.

B. Variations In Speeds

As seen in Chart 4, the median upload speeds experienced by most cable and fiber subscribers were close to or exceeded the advertised upload speeds. The median upload speed for ISPs using DSL technology fell significantly short of the advertised upload speed.

We can learn more about the variation in network performance by examining the complementary cumulative distribution statistics. For each ISP, we first calculate the ratio of the median download speed (over the peak usage period) to the advertised download speed for each panelist subscribing to that ISP. We then examine the distribution of this ratio across the set of panelists representing the ISP’s service territory.

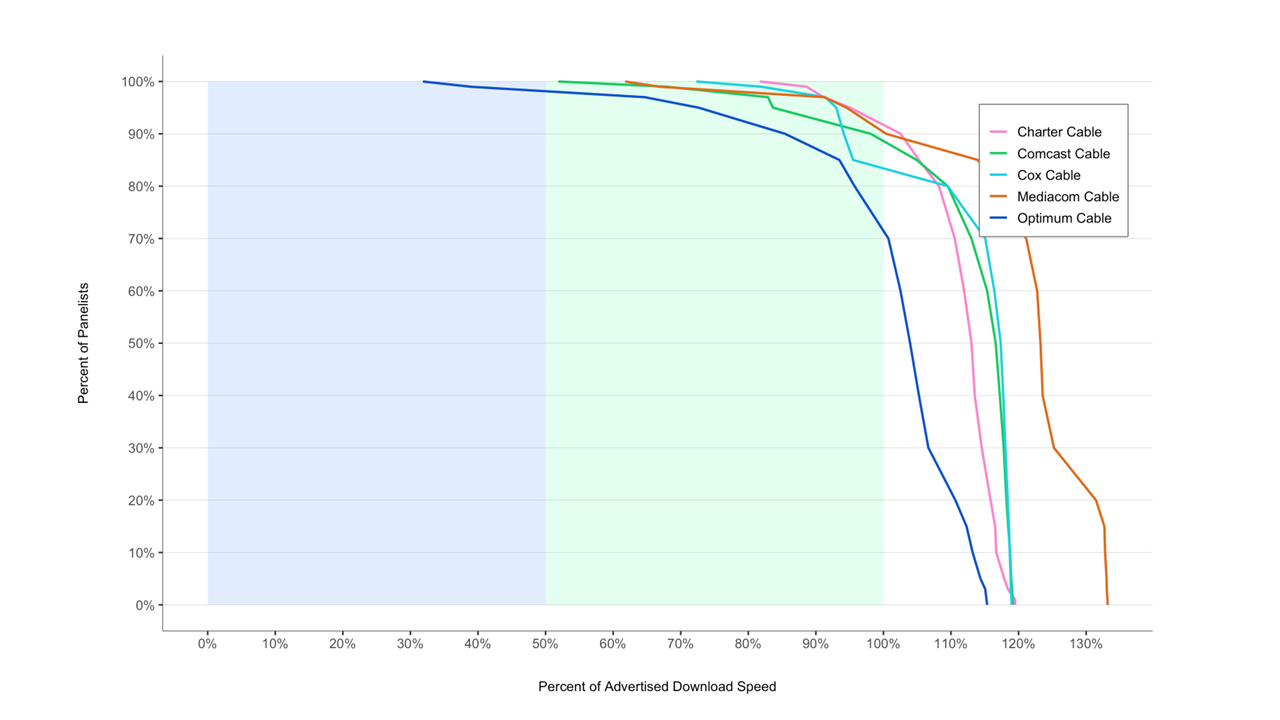

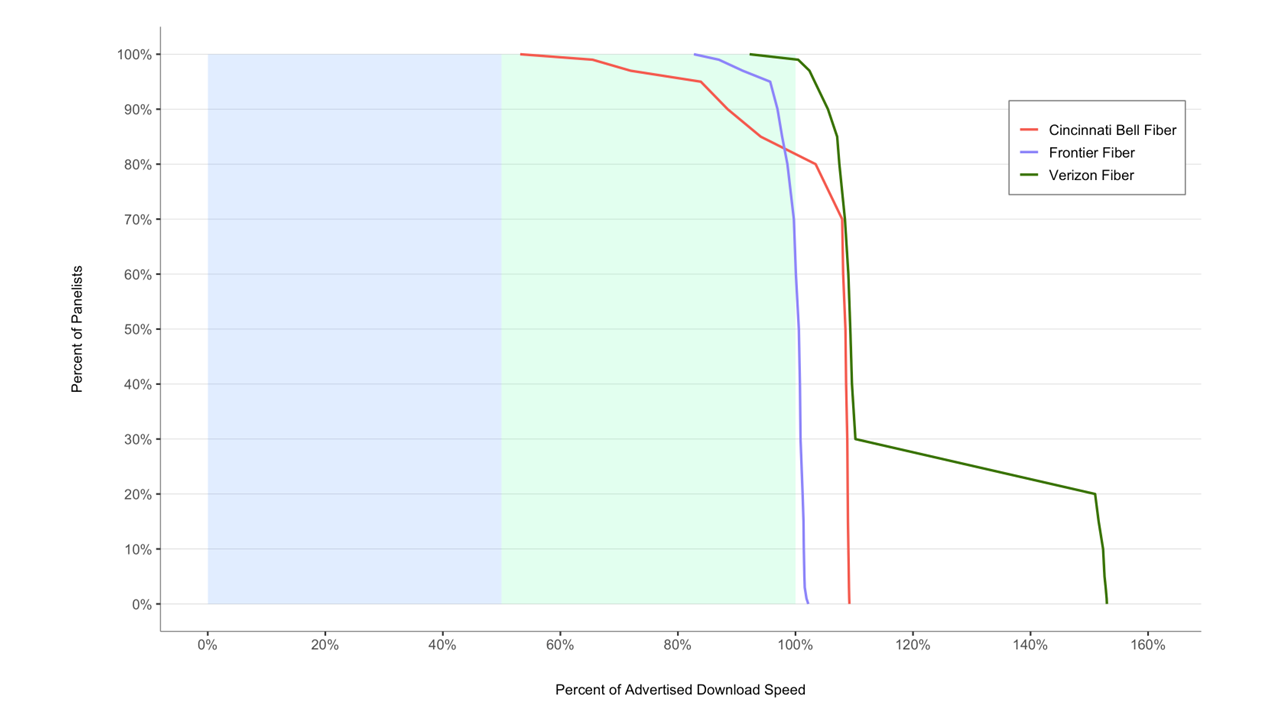

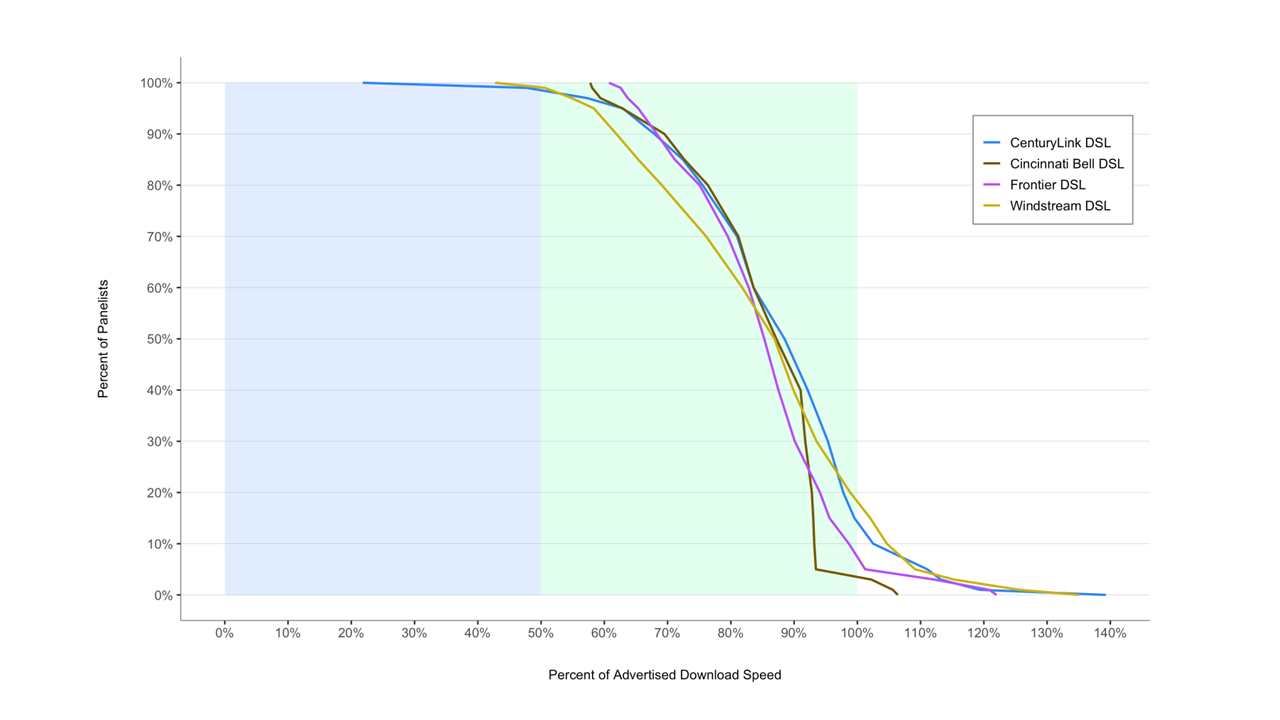

Charts 12.1 and 12.2 show the complementary cumulative distribution of the ratio of median download speed (over the peak usage period) to advertised download speed for each participating ISP. For each ratio of measured to advertised download speed on the horizontal axis, the curves show the percentage of panelists, by ISP, that experienced at least this ratio.[30] For example, the curve in Chart 12.1 for Cincinnati Bell DSL shows that 90% of its subscribers experienced a median download speed exceeding 70% of the advertised download speed, 70% experienced a median download speed exceeding 81% of the advertised download speed, and 50% experienced a median download speed exceeding 87% of the advertised download speed; approximately 3% experienced a median download speed at or above the advertised download speed.
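Reading points off these curves can be expressed directly in code. The sketch below assumes one median-to-advertised ratio per panelist. One function gives the share of panelists at or above a ratio (a point on the curve); the other gives the ratio reached by at least a given share of panelists, which is how a statement such as “90% of subscribers experienced more than 70% of the advertised speed” is read. Names and sample ratios are hypothetical.

```python
import math


def share_at_or_above(ratios: list[float], threshold: float) -> float:
    """Percentage of panelists whose median/advertised ratio is >= threshold."""
    return 100.0 * sum(r >= threshold for r in ratios) / len(ratios)


def ratio_reached_by(ratios: list[float], pct_of_panelists: float) -> float:
    """Largest ratio that at least pct_of_panelists percent of panelists reach."""
    ordered = sorted(ratios, reverse=True)
    k = max(1, math.ceil(len(ordered) * pct_of_panelists / 100))
    return ordered[k - 1]


# Hypothetical ratios (median download speed / advertised) for ten panelists.
ratios = [0.62, 0.71, 0.78, 0.83, 0.86, 0.88, 0.91, 0.95, 1.01, 1.04]
print(share_at_or_above(ratios, 1.0))  # 20.0
print(ratio_reached_by(ratios, 90))    # 0.71
```

Evaluating `share_at_or_above` over a grid of thresholds traces the full complementary cumulative distribution for one ISP.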

The curves for cable-based broadband and fiber-based broadband are steeper than those for DSL-based broadband. The speed achievable by DSL depends on the distance between the subscriber and the central office, so panelists on the same DSL tier achieve widely differing fractions of the advertised speed. Thus, the complementary cumulative distribution function will fall slowly unless the broadband ISP adjusts its advertised rate based on the subscriber’s location. Additionally, the curves for ISPs using DSL technology drop off at much less than 100% of advertised speed compared with those for fiber and cable technologies. This can be seen more clearly in Chart 12.3, which plots aggregate curves for each technology. Approximately 85% of subscribers to cable-based services and 85% of subscribers to fiber-based services experience median download speeds exceeding the advertised download speed. In contrast, fewer than 15% of subscribers to DSL-based services do so.[31]

Chart 12.4: Complementary cumulative distribution of the ratio of median upload speed to advertised upload speed (DSL).

All speeds discussed above were measured during peak usage periods. In contrast, Charts 13.1 and 13.2 below compare the ratio of actual download and upload speeds to advertised download and upload speeds during peak and off-peak times. These charts show that most ISP subscribers experience only a slight degradation from off-peak to peak hour performance (particularly for upload speeds).

Chart 13.2: The ratio of weighted median upload speed to advertised upload speed, peak versus off-peak

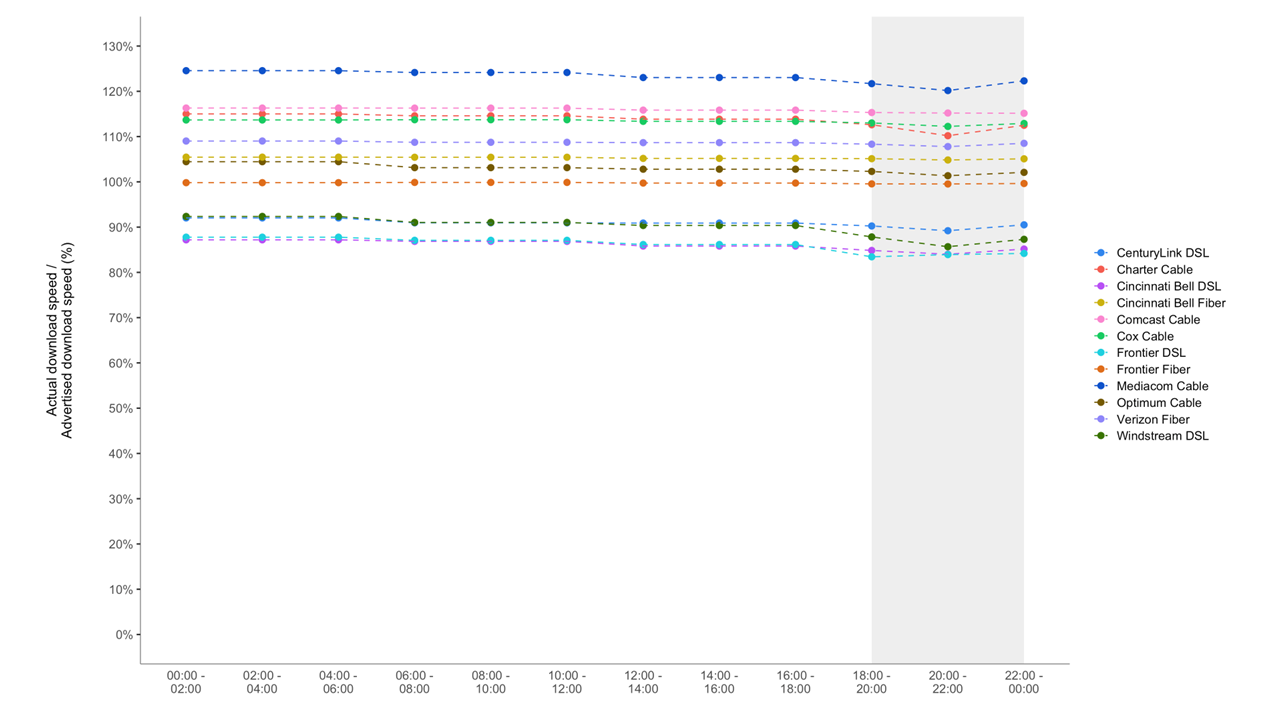

Chart 14 below shows the ratio of actual download speed to advertised download speed in each two-hour time block during weekdays for each ISP. The ratio is lowest during the busiest period, the four hours from 7:00 p.m. to 11:00 p.m.

Chart 14: The ratio of median download speed to advertised download speed, Monday-to-Friday, two-hour time blocks, terrestrial ISPs.

For each ISP, Chart 5 (in Section 2.C) showed the ratio of the 80/80 consistent median download speed to advertised download speed, and for comparison, Chart 4 showed the ratio of median download speed to advertised download speed.

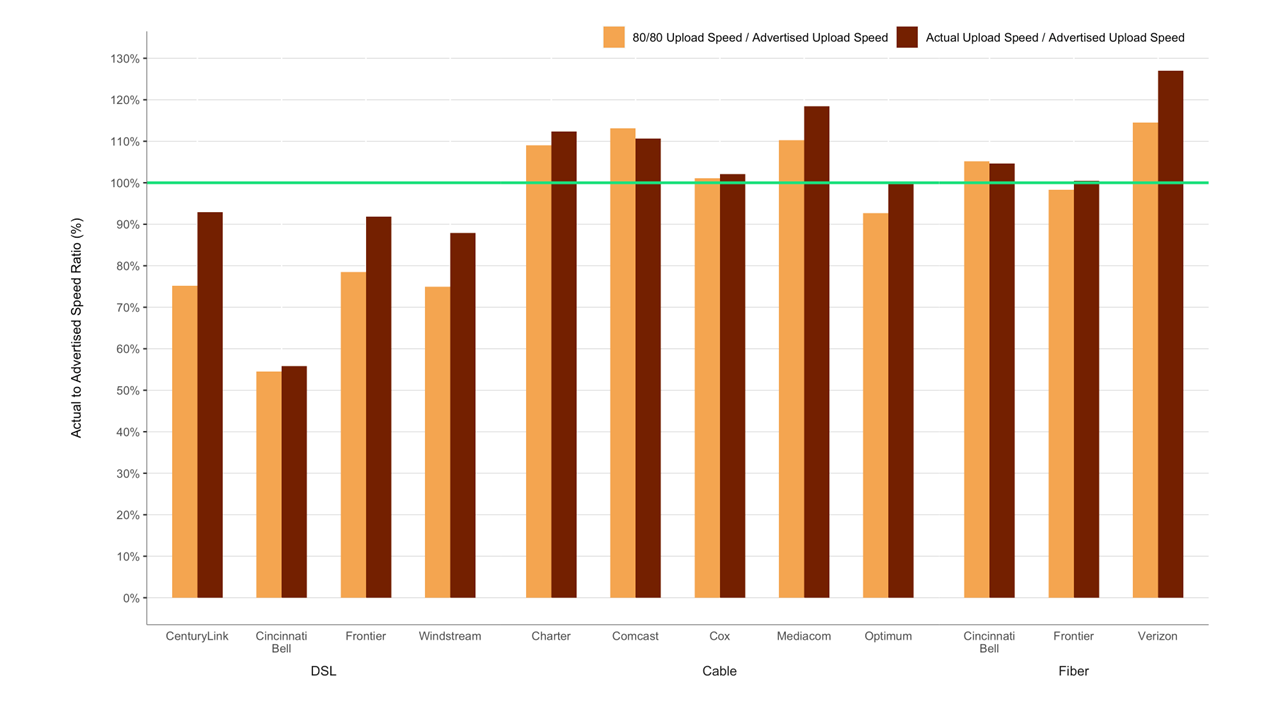

Chart 15.1 illustrates information concerning 80/80 consistent upload speeds.

While all the upload 80/80 speeds were slightly lower than the median speeds, the differences were more marked for DSL. Charts 5 and 15.1 reflect that cable and fiber technologies behaved more consistently than DSL technology for both download and upload speeds.

Chart 15.1: The ratio of 80/80 consistent upload speed to advertised upload speed.

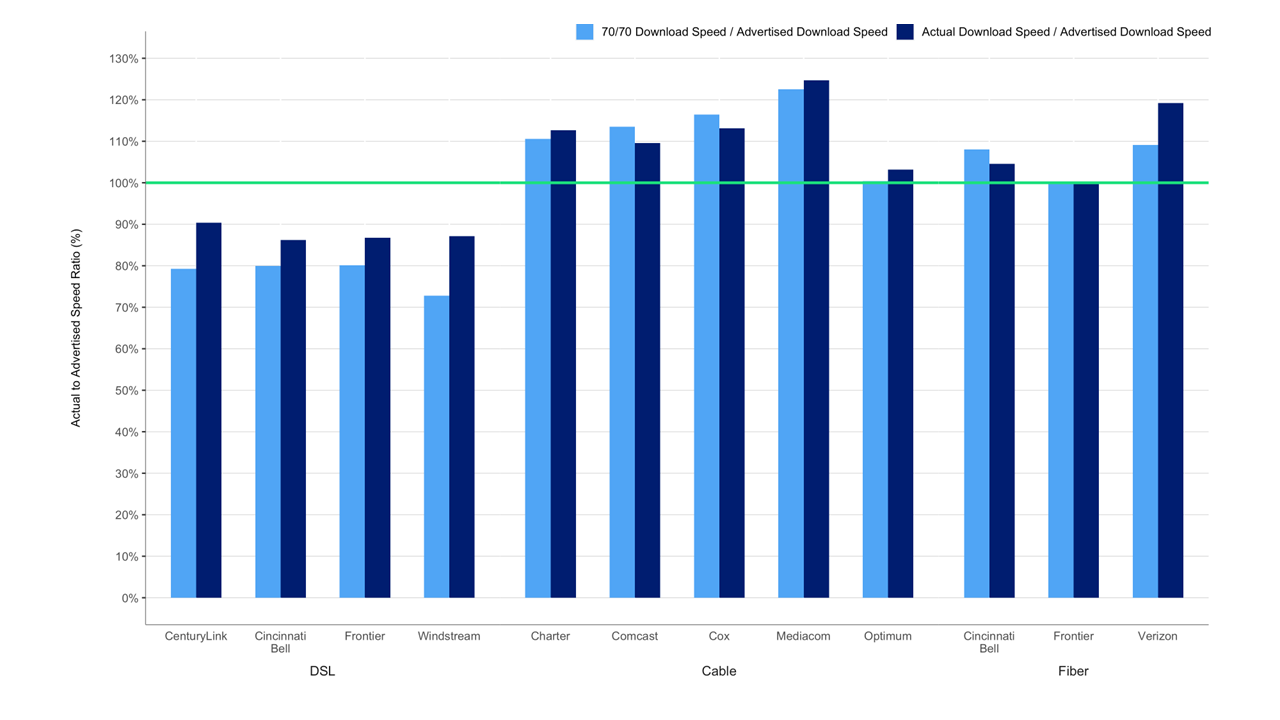

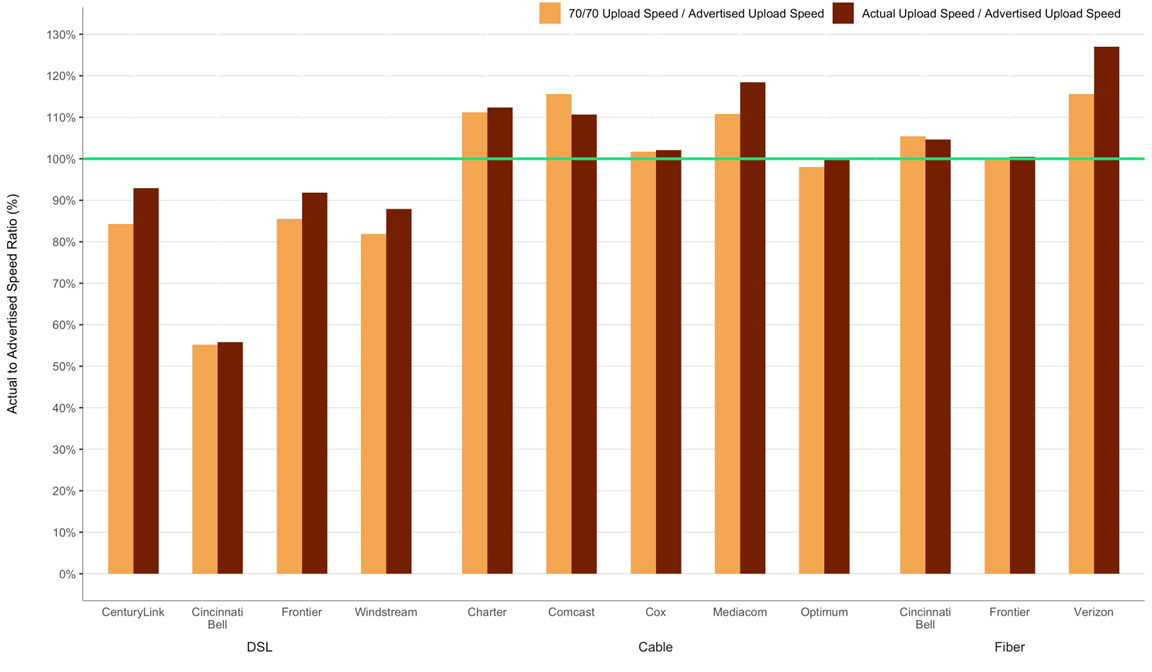

Charts 15.2 and 15.3 below illustrate similar consistency metrics for 70/70 consistent download and upload speeds, i.e., the minimum download or upload speed (as a percentage of the advertised download or upload speed) experienced by at least 70% of panelists during at least 70% of the peak usage period. Because the 70/70 thresholds are less stringent, the ratios for 70/70 consistent speeds are higher than the corresponding ratios for 80/80 consistent speeds. Once again, ISPs using DSL technology showed considerably smaller 70/70 download and upload speeds relative to their median download and upload speeds, respectively.
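One reading of the X/X consistent speed definition above is a two-step calculation: first, the speed ratio each panelist meets or exceeds during at least X% of their peak-period tests; then, the value that at least X% of panelists meet or exceed. The sketch below follows that reading. The percentile convention (no interpolation) is an assumption, the Technical Appendix remains the authoritative definition, and names and data are hypothetical.

```python
import math


def reached_at_least(values: list[float], pct: float) -> float:
    """Largest value that at least `pct` percent of `values` meet or exceed."""
    ordered = sorted(values, reverse=True)
    k = max(1, math.ceil(len(ordered) * pct / 100))
    return ordered[k - 1]


def consistent_speed_ratio(panel: dict[str, list[float]], pct: float) -> float:
    """pct/pct consistent speed as a fraction of advertised speed.

    panel maps a panelist to the ratios (measured / advertised) from that
    panelist's peak-period tests. Step 1: the ratio each panelist gets during
    at least pct% of their tests. Step 2: the ratio at least pct% of
    panelists get from step 1.
    """
    per_panelist = [reached_at_least(r, pct) for r in panel.values() if r]
    return reached_at_least(per_panelist, pct)


# Hypothetical panel: five panelists, five peak-period tests each.
panel = {
    "p1": [1.00, 0.98, 0.95, 0.60, 0.97],
    "p2": [0.90, 0.92, 0.91, 0.89, 0.93],
    "p3": [0.70, 0.75, 0.50, 0.72, 0.74],
    "p4": [1.02, 1.01, 1.03, 1.00, 0.99],
    "p5": [0.85, 0.40, 0.88, 0.86, 0.87],
}
print(consistent_speed_ratio(panel, 80))  # 0.85
print(consistent_speed_ratio(panel, 60))  # 0.91
```

Lowering X from 80 to 60 in this sample raises the result from 0.85 to 0.91, the same direction as the 70/70 versus 80/80 comparison in the text.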

Chart 15.2: The ratio of 70/70 consistent download speed to advertised download speed.

Chart 15.3: The ratio of 70/70 consistent upload speed to advertised upload speed.

C. Latency

Chart 16 shows the weighted median idle latencies, by technology and by advertised download speed. Low-speed DSL services typically had higher latencies compared to cable or fiber. Cable latencies ranged from 13 ms to 22 ms, fiber latencies ranged from 8 ms to 14 ms, and DSL latencies ranged from 20 ms to 61 ms.

Chart 16: Latency for Terrestrial ISPs, by technology, and by advertised download speed.

5. Additional Test Results

A. Actual Speed, By Service Tier

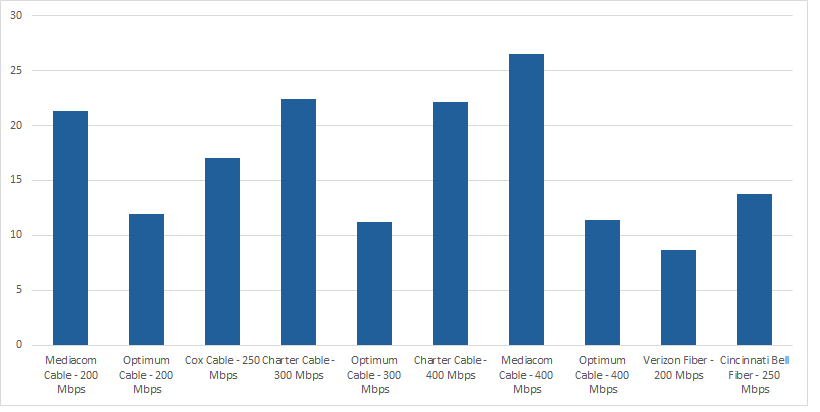

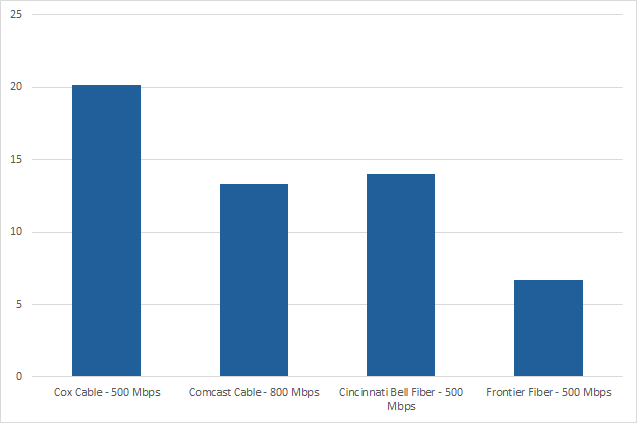

As shown in Charts 17.1-17.8, peak usage period performance varied by service tier among participating ISPs during the September-October 2022 period. On average, during peak periods, the ratio of median download speed to advertised download speed for all ISPs was 76% or better, with most ISPs achieving a ratio of 90% or better. The ratio of median download speed to advertised download speed, however, varied among service tiers. Out of the 39 speed tiers that were measured in total across the participating ISPs, 18 showed achievement of at least 100% of the advertised speed.

Chart 17.1: The ratio of median download speed to advertised download speed, by ISP (1.5-7 Mbps).

Chart 17.2: The ratio of median download speed to advertised download speed, by ISP (10-15 Mbps).

Chart 17.3: The ratio of median download speed to advertised download speed, by ISP (18-40 Mbps).

Chart 17.4: The ratio of median download speed to advertised download speed, by ISP (50-75 Mbps)

Chart 17.5: The ratio of median download speed to advertised download speed, by ISP (80-100 Mbps).

Chart 17.6: The ratio of median download speed to advertised download speed, by ISP (150-250 Mbps).

Chart 17.7: The ratio of median download speed to advertised download speed, by ISP (300-400 Mbps).

Chart 17.8: The ratio of median download speed to advertised download speed, by ISP (500-800 Mbps).

Charts 18.1-18.6 depict the ratio of median upload speeds to advertised upload speeds for each ISP by service tier. Out of the 27 upload speed tiers that were measured across the participating ISPs, 17 met or exceeded the advertised speed and an additional 5 reflected achievement of at least 90% of the advertised upload speed.

Chart 18.2: The ratio of median upload speed to advertised upload speed, by ISP (1-3 Mbps).

Chart 18.3: The ratio of median upload speed to advertised upload speed, by ISP (5-10 Mbps).

Chart 18.4: The ratio of median upload speed to advertised upload speed, by ISP (20-50 Mbps).

Chart 18.5: The ratio of median upload speed to advertised upload speed, by ISP (75-125 Mbps).

Chart 18.6: The ratio of median upload speed to advertised upload speed, by ISP (200-500 Mbps).

Table 2 lists the advertised download service tiers included in this study. For each tier, an ISP’s advertised download speed is compared with the median of the measured download speed results. As noted in past reports, the download speeds listed here are based on national averages and may not represent the performance experienced by any particular consumer at any given time or place.

Table 2: Peak period median download speed, sorted by actual download speed.

B. Variations In Speed

In this section, we provide detailed speed consistency results for each ISP’s individual service tiers. Consistency of speed is important for services such as video streaming, for which a significant reduction in speed for more than a few seconds can force a reduction in video resolution or an intermittent loss of service.

In previous MBA reports, a composite download and upload speed-consistency metric for each ISP also was included. This was based on the weighted test results (with the weighting done by subscriber numbers for each ISP’s tier) averaged across all the service tiers of the ISP. In this 13th Report, instead of those composite charts, the binned results are separately provided for each tier: Charts 19.1-19.3 for download speed and Charts 20.1-20.3 for upload speed.

Charts 19.1-19.3 below show the percentage of consumers that achieved greater than 95%, between 80% and 95%, or less than 80% of the advertised download speed for each ISP speed tier. ISPs typically quote a single “up-to” speed as the advertised download speed. For DSL subscribers, this single “up-to” speed does not take into account that the actual speed of DSL depends on the distance between the subscriber and the serving central office. Thus, as observed in past MBA reports, ISPs using DSL technology do not consistently deliver the advertised speed. Fiber and cable companies, in general, showed high consistency of speed.

Similarly, Charts 20.1 to 20.3 show the percentage of consumers that achieved greater than 95%, between 80% and 95%, or less than 80% of the advertised upload speed for each ISP speed tier.

In Section 4.B above, we present complementary cumulative distributions for each ISP based on test results across all service tiers. Below, we provide tables showing selected points on these distributions for each individual ISP. In general, DSL-based services provided at least 95% of their subscribers with minimum download speeds between 58% and 65% of the advertised speed. Among cable-based companies, the minimum download speeds experienced by at least 95% of subscribers were between 73% and 95% of advertised rates. Fiber-based services generally provided at least 95% of subscribers with minimum download speeds between 84% and 103% of advertised download speeds.

C. Web Browsing Performance, By Service Tier

Below, we provide the detailed results of the webpage download time for each individual service tier of each ISP. Generally, website loading time decreased steadily with increasing tier speed until a tier speed of 50 Mbps, and did not change markedly above that speed.

Chart 21.1: Average webpage download time, by ISP (1.5-7 Mbps).

Chart 21.2: Average webpage download time, by ISP (10-15 Mbps).

Chart 21.3: Average webpage download time, by ISP (18-40 Mbps).

Chart 21.4: Average webpage download time, by ISP (50-75 Mbps).

Chart 21.5: Average webpage download time, by ISP (80-100 Mbps).

Chart 21.6: Average webpage download time, by ISP (150-250 Mbps).

Chart 21.7: Average webpage download time, by ISP (300-400 Mbps).

Chart 21.8: Average webpage download time, by ISP (500-800 Mbps).

D. Latency Performance, by Service Tier

Below, we provide the detailed results of the idle latency performance for each individual service tier of each ISP.

Chart 22: Idle latency (ms) vs. advertised download speed (Mbps), by ISP tier.

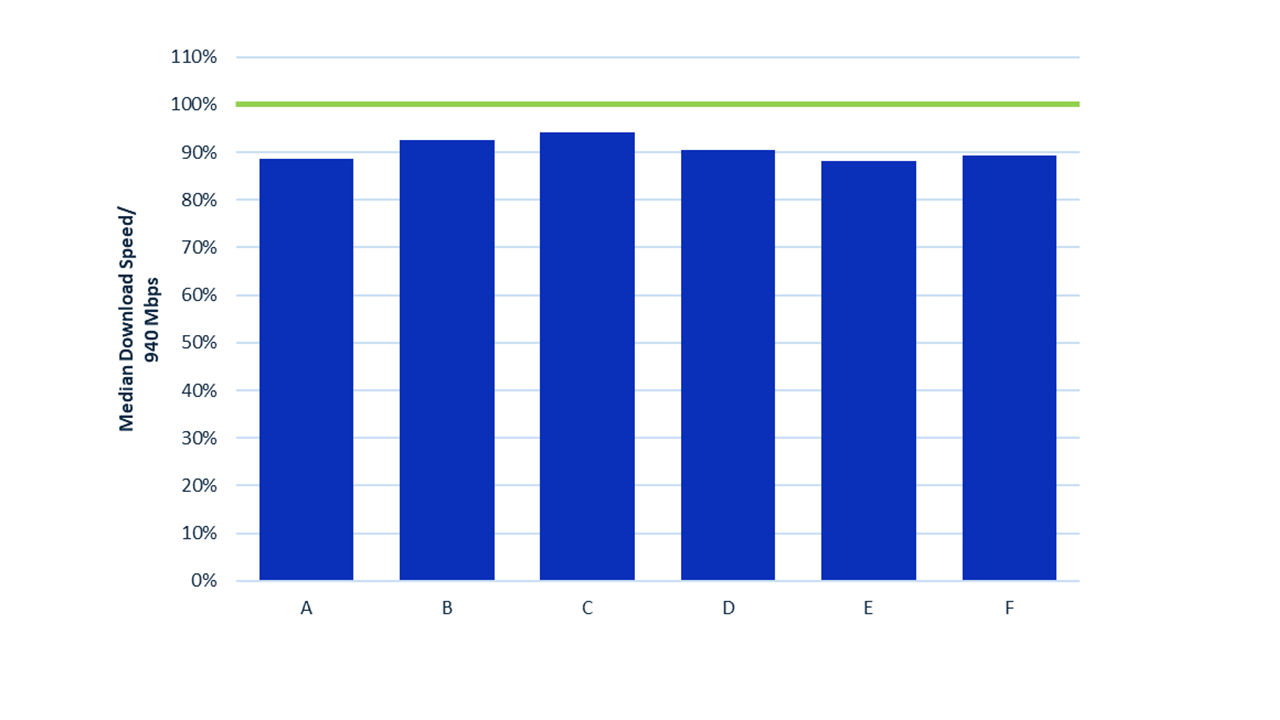

Appendix A

Gigabit Tiers Download Speed Performance

This section presents the performance of speed tiers at or near Gigabit speeds. Chart A.1 below shows the download speed performance of these tiers (anonymized), normalized to 940 Mbps, which is the maximum speed of Gigabit Ethernet at the transport layer of the Whitebox 8.0 used in these tests. The median download speed as a percentage of 940 Mbps varies from 89% to 94% for the measured ISPs.
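The 940 Mbps figure is consistent with a back-of-the-envelope calculation of TCP goodput on Gigabit Ethernet. The sketch below assumes a 1500-byte MTU, IPv4, TCP timestamps enabled, and no VLAN tag; the report does not state how the 940 Mbps value was derived, so this is only a plausibility check.

```python
# Assumed framing: 1500-byte MTU, no VLAN tag, IPv4, TCP timestamps enabled.
LINE_RATE_MBPS = 1000.0
MTU = 1500
ETH_OVERHEAD = 14 + 4 + 8 + 12   # header + FCS + preamble/SFD + inter-frame gap
IP_HEADER, TCP_HEADER, TCP_TIMESTAMPS = 20, 20, 12

payload = MTU - IP_HEADER - TCP_HEADER - TCP_TIMESTAMPS  # application bytes per frame
wire_bytes = MTU + ETH_OVERHEAD                          # bytes of line time per frame
goodput_mbps = LINE_RATE_MBPS * payload / wire_bytes
print(f"{goodput_mbps:.1f} Mbps")  # 941.5 Mbps
```

Without TCP timestamps the payload rises to 1460 bytes and the result to about 949 Mbps, so the exact ceiling depends on the protocol options in use.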

Chart A.1: Anonymized performance of ISP’s Gigabit tiers.

[1] The actual dates used for measurements for this Thirteenth Report were September 12-13 and 16-21, 2022 (inclusive), plus October 4-6, 11-13, 15-17, and 19-31, 2022 (inclusive). This specific set of 30 days was chosen to avoid dates when outages arising from hurricanes and other natural disasters resulted in a lower number of reporting whiteboxes.

[2] The sample is representative in that it aims to include those tiers that constitute the top 80% of the subscriber base per ISP. Some tiers accordingly are not included. As with any sample, budget and sample constitution constraints influence coverage.

[3] All reports can be found at https://www.fcc.gov/general/measuring-broadband-america.

[4] The First Report (2011) was based on measurements taken in March 2011, the Second Report (2012) on measurements taken in April 2012, and the Third (2013) through Thirteenth (2023) Reports were based on measurements taken in September of the year prior to the reports’ release dates. In order to avoid confusion between the date of release of the report and the dates the data for the report was collected, we have shifted to using numbers instead of years to identify the reports. Thus, this year’s report is termed the Thirteenth MBA Report instead of the 2023 MBA Report.

[5] The weighted average is computed using the number of subscribers in each tier as described in greater detail in Section 2.

[6] We first determine the mean value over all the measurements for each individual panelist’s “whitebox” (panelists are sent “whiteboxes” that run pre-installed software on off-the-shelf routers that measure thirteen broadband performance metrics, including download speed, upload speed, and latency). Then for each ISP’s speed tiers, we choose the median of the set of mean values for all the panelists/whiteboxes. The median is the value separating the top half of values in a sample set from the lower half of values in that set; it can be thought of as the middle (i.e., most typical) value in an ordered list of values. For calculations involving multiple speed tiers, we compute the weighted average of the medians for each tier. The weightings are based on the relative subscriber numbers for the individual tiers.

[7] Only tiers that contribute to the top 80% of an ISP’s total subscribership are included in this report.

[8] It should be noted that the Form 477 subscriber data values generally lag the reporting month, and therefore, there are likely to be small inaccuracies in the tier ratios. For this reason, we encourage ISPs to provide us with subscriber numbers for the measurement month. The only ISPs for which we used Form 477 data for subscriber numbers were Frontier and Windstream.

[9] For an explanation of Form 477 filing requirements and required data, see: https://transition.fcc.gov/form477/477inst.pdf (Last accessed 7/11/2023).

[10] “It is important to track the changing mix of devices and connections and growth in multidevice ownership as it affects traffic patterns. Video devices, in particular, can have a multiplier effect on traffic. An Internet-enabled HD television that draws couple - three hours of content per day from the Internet would generate as much Internet traffic as an entire household today, on an average. Video effect of the devices on traffic is more pronounced because of the introduction of Ultra-High-Definition (UHD), or 4K, video streaming. This technology has such an effect because the bit rate for 4K video at about 15 to 18 Mbps is more than double the HD video bit rate and nine times more than Standard-Definition (SD) video bit rate. We estimate that by 2023, two-thirds (66 percent) of the installed flat-panel TV sets will be UHD, up from 33 percent in 2018.” See Cisco Annual Internet Report (2018-2023) White Paper, https://www.cisco.com/c/en/us/solutions/collateral/executive-perspectives/annual-internet-report/white-paper-c11-741490.html (Last accessed July 11, 2023).

[11] See for example: https://www.iab.org/wp-content/IAB-uploads/2021/09/2021_nqw_lul.pdf and https://www.bitag.org/latency-explained.php.

[12] Note that this chart includes only those panelists who continued to report in both 2021 and 2022. Where several technologies are plotted at the same point in the chart, this is identified as “Multiple Technologies.”

[13] There were 3,455 panelists from the previous year who continued to participate in the September-October 2022 data collection. Of these, 1,018 panelists shifted to a higher tier in 2022.

[14] We do not attempt here to distinguish between these two cases.

[15] While usage patterns changed to some extent during the course of the pandemic, the standard test period was kept the same for longitudinal consistency, and because usage patterns over other periods (i.e., during normal work/school hours) varied while the peak period remained relatively similar.

[16] For a detailed definition and discussion of this metric, please refer to the Technical Appendix.

[17] We use the term 'temporally' closest server to distinguish it from the 'geographically' closest server; quite often, the geographically closest server is not the server with the lowest latency. Each whitebox fetches a complete list of off-net test servers and measures the round-trip time to each. This list of test servers is loaded at startup and refreshed daily. The whitebox then tests against the server on that list with the lowest round-trip time, which may not be the geographically closest one.

[18] Latency under load results have been anonymized here, as this is the first year we report latency under load and this metric was not announced prior to the testing period. We also note that, as reflected in raw data published in 2023, some ISPs have since introduced active queue management (AQM) in their networks, leading to improved results compared to the measurements from 2022.

[20] See: https://www.voip-info.org/wiki/view/QoS and https://www.ciscopress.com/articles/article.asp?p=357102

[21] The Report uses results from only a subset of the much larger total pool of panelists, because many panelists were not on plans that were part of the study, had Whiteboxes that were legacy models not fully supporting their plan speeds, or did not have enough data on the reported testing dates. The details are included in the Technical Appendix.

[22] This 30-day period was chosen within the September-October validation period to provide an error-free set of reporting dates and to capture a time period when the maximum number of Whiteboxes were reporting data. As shown in Footnote 1, a number of dates were excluded within this validation period. Omitting these dates is consistent with the FCC’s data collection policy for fixed MBA data. See FCC, Measuring Fixed Broadband, Data Collection Policy, https://www.fcc.gov/general/measuring-broadband-america-measuring-fixed-broadband (explaining that the FCC has developed policies to deal with impairments in the data collection process with potential impact on the validity of the data collected).
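
The footnote does not spell out how the 30-day period was chosen. One hypothetical way to pick such a window is sketched below in Python: drop the excluded dates, slide a window of 30 consecutive remaining dates across the validation period, and keep the window with the largest total number of reporting Whiteboxes. The maximize-the-total criterion, the function name, and the example counts and exclusions are all assumptions for illustration.

```python
from datetime import date, timedelta


def best_window(daily_reporting: dict[date, int], excluded: set[date], length: int = 30) -> list[date]:
    """Hypothetical: pick `length` consecutive non-excluded dates with the largest total count."""
    valid = sorted(d for d in daily_reporting if d not in excluded)
    if len(valid) < length:
        raise ValueError("not enough valid dates for the requested window")
    window_sum = sum(daily_reporting[d] for d in valid[:length])
    best_sum, best_start = window_sum, 0
    for start in range(1, len(valid) - length + 1):
        # Slide by one date: add the new last date, drop the old first date
        window_sum += daily_reporting[valid[start + length - 1]] - daily_reporting[valid[start - 1]]
        if window_sum > best_sum:
            best_sum, best_start = window_sum, start
    return valid[best_start:best_start + length]


# Hypothetical daily counts of reporting Whiteboxes, September 1 to October 31, 2022
days = [date(2022, 9, 1) + timedelta(days=i) for i in range(61)]
counts = {d: 1500 - abs(i - 40) * 3 for i, d in enumerate(days)}
excluded = {date(2022, 9, 5), date(2022, 10, 10)}  # hypothetical excluded dates
window = best_window(counts, excluded)
print(window[0], window[-1], len(window))
```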

[23] The MBA program uses test servers that are both neutral (i.e., operated by third parties rather than owned or operated by ISPs) and located as close as practical, in terms of network topology, to the boundaries of the ISP networks under study. As described earlier in this section, a maximum of two interconnection points and one transit network may be on the test path in any one direction. Congestion on such paths to the test server may affect the measurement, but such cases are detectable by the test approach followed by the MBA program, which uses consistent longitudinal measurements, comparisons with control servers located on-net, comparisons with redundant off-net servers, and trend analyses of averaged results. Details of the methodology used in the MBA program are given in the Technical Appendix to this report.
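
The on-net versus off-net comparison mentioned in this footnote can be illustrated with a simple check: if throughput to an off-net server falls well below throughput to an on-net control server over the same hour, congestion on the path outside the ISP's network is a plausible explanation. The Python sketch below assumes hourly medians and an arbitrary 0.9 ratio threshold; neither is the MBA program's documented method (see the Technical Appendix), and the sample values are hypothetical.

```python
from statistics import median


def flag_suspect_hours(
    onnet_mbps: dict[int, list[float]],
    offnet_mbps: dict[int, list[float]],
    threshold: float = 0.9,
) -> list[int]:
    """Return hours where the off-net median throughput is below `threshold` x the on-net median.

    Assumption: both inputs map hour-of-day -> throughput samples (Mbps);
    the 0.9 default threshold is illustrative only.
    """
    flagged = []
    for hour in sorted(onnet_mbps.keys() & offnet_mbps.keys()):
        on, off = onnet_mbps[hour], offnet_mbps[hour]
        if on and off and median(off) < threshold * median(on):
            flagged.append(hour)
    return flagged


# Hypothetical samples: off-net throughput drops at 20:00 while on-net stays steady
onnet = {19: [100.0, 98.0, 101.0], 20: [99.0, 100.0, 97.0]}
offnet = {19: [97.0, 96.0, 99.0], 20: [70.0, 72.0, 68.0]}
print(flag_suspect_hours(onnet, offnet))  # prints [20]
```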

[24] Independent research, drawing on the FCC’s MBA test platform, suggests that home networks are a significant source of end-to-end service congestion. See Srikanth Sundaresan et al., Home Network or Access Link? Locating Last-Mile Downstream Throughput Bottlenecks, PAM 2016 - Passive and Active Measurement Conference, at 111-123 (Mar. 2016). Numerous instances of research supported by the fixed MBA test platform are described at https://www.fcc.gov/general/mba-research-code-conduct#publications-mars-mba.

[25] “Idle” here refers to there being no or minimal internet traffic to or from the household the measurement is initiated from.

[26] The overall latency experienced by a specific ISP customer in a particular instance may well differ from the MBA latency metric value. First, because the MBA latency measurement is not specific to the customer’s application, the customer is unlikely to recreate it exactly. Next, an application-specific latency characterization could well be influenced by the application itself and its content delivery networks (if any), by the customer’s Internet service provider’s network provisioning and design, and by factors such as the DNS provider chosen by that customer. The application’s host platform would also have an impact. As mentioned in previous reports, MBA metrics represent one approach to characterizing network performance but do not necessarily replicate customer experience.

[27] The September-October 2022 data set was validated to remove anomalies that would have produced errors in the Report. This data validation process is described in the Technical Appendix.

[28] Data on or after August 1, 2023, is not available.

[29] Measured service tiers were tiers which constituted the top 80% of an ISP’s broadband subscriber base.
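
As a sketch of how the top-80% rule might be applied, the Python example below ranks an ISP's tiers by subscriber count, keeps tiers until their cumulative share reaches 80%, and then computes a subscriber-weighted mean advertised speed over the kept tiers. Including the tier that crosses the 80% mark, and weighting by subscriber counts, are assumptions here; the Technical Appendix is the authority on the actual procedure. The tier data are hypothetical.

```python
def top_tiers(subscribers_by_tier: dict[int, int], coverage: float = 0.8) -> list[int]:
    """Tiers (advertised Mbps) in descending subscriber order until `coverage` of subscribers is reached.

    Assumption: the tier that crosses the threshold is included.
    """
    total = sum(subscribers_by_tier.values())
    selected, cumulative = [], 0
    for tier, subs in sorted(subscribers_by_tier.items(), key=lambda kv: kv[1], reverse=True):
        if cumulative >= coverage * total:
            break
        selected.append(tier)
        cumulative += subs
    return selected


def weighted_mean_speed(subscribers_by_tier: dict[int, int], tiers: list[int]) -> float:
    """Subscriber-weighted mean advertised speed over the given tiers."""
    weight = sum(subscribers_by_tier[t] for t in tiers)
    return sum(t * subscribers_by_tier[t] for t in tiers) / weight


# Hypothetical ISP: advertised download speed (Mbps) -> subscribers
tiers = {100: 400, 300: 300, 1000: 150, 50: 100, 25: 50}
kept = top_tiers(tiers)
print(kept)                                        # prints [100, 300, 1000]
print(round(weighted_mean_speed(tiers, kept), 1))  # prints 329.4
```

In this example the two lowest tiers (15% of subscribers combined) drop out, which pulls the weighted mean upward.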

[30] In Reports prior to the 2015 MBA Report, for each ratio of actual to advertised download speed on the horizontal axis, the cumulative distribution function curves showed the percentage of measurements, rather than panelists subscribing to each ISP, that experienced at least this ratio. The methodology used since then, i.e., using panelists subscribing to each ISP, more accurately illustrates ISP performance from a consumer’s point of view.
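
The difference between the two methodologies can be seen with a toy example in Python: one panelist who contributes many measurements can dominate a per-measurement distribution, while a per-panelist distribution counts each subscriber once. Here each panelist is summarized by the median of their measured-to-advertised speed ratios; the panelist names and ratio values are hypothetical.

```python
from statistics import median


def share_at_least(values: list[float], threshold: float) -> float:
    """Complementary cumulative distribution at `threshold`: fraction of values >= threshold."""
    return sum(v >= threshold for v in values) / len(values)


# Hypothetical ratios of measured to advertised download speed, per panelist
ratios = {
    "panelist-A": [1.0] * 10,  # many measurements, all at the advertised speed
    "panelist-B": [0.5, 0.5],  # few measurements, at half the advertised speed
}

per_measurement = [r for samples in ratios.values() for r in samples]
per_panelist = [median(samples) for samples in ratios.values()]

print(share_at_least(per_measurement, 0.9))  # 10 of 12 measurements, about 0.83
print(share_at_least(per_panelist, 0.9))     # prints 0.5 (1 of 2 panelists)
```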

[31] The speed achievable by DSL depends on the distance between the subscriber and the central office. Thus, the complementary cumulative distribution function will fall slowly unless the broadband ISP adjusts its advertised rate based on the subscriber’s location. (Chart 14 illustrates that performance during non-busy hours is similar to that during the busy hour, making congestion a less likely explanation.)